Updated: 2025-05-07

Hanalytics Pte Ltd.

Overview

Tags

Nervous system diseases

Cardiovascular diseases

Other diseases

Small molecule drugs

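Disease area score

See at a glance which disease areas the organization focuses on

No data available

Technology platform

Technologies most used in the company's drugs

No data available

Targets

Targets the company develops most often

No data available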

| Disease area | Count |
|---|---|
| Nervous system diseases | 1 |

| Top five drug types | Count |
|---|---|
| Small molecule drugs | 1 |

| Top five targets | Count |
|---|---|
| 5-HT receptor (5-hydroxytryptamine receptor) | 1 |

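Associated

1 drug associated with Hanalytics Pte Ltd.

| Field | Value |
|---|---|
| Mechanism of action | 5-HT receptor modulators [+1] |
| Originator organization | - |
| Inactive indications | |
| Highest development phase | Phase 2 |
| First approval country/region | - |
| First approval date | - |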

100 clinical results associated with Hanalytics Pte Ltd.

0 patents (medical) associated with Hanalytics Pte Ltd.

1 publication (medical) associated with Hanalytics Pte Ltd.

2020-11-01 · European Heart Journal

Building a predictive model for warfarin dosing via machine learning

Authors: Tan, O; Fam, J.M; Hon, J.S; Lee, S.Y.A; Yeo, K.K; Moh, R; Wu, J.Z; Chiang, P; Lau, Y.H

2 news items (medical) associated with Hanalytics Pte Ltd.

2024-01-25

The National Institute for Health and Care Excellence (NICE) has recommended two artificial intelligence (AI)-powered software tools for use in the NHS for stroke diagnosis.

The two tools, e-Stroke and RapidAI, were selected after the health technology assessment agency found some evidence of faster and better access to treatment when the software was used.

A stroke is a life-threatening medical condition that occurs when the blood supply to part of the brain is cut off. Stroke is currently the leading cause of disability, with more than 100,000 cases in the UK every year.

NICE advised that the software tools can be used within the NHS “while further evidence is generated to help better determine their cost-effectiveness”.

The institute also noted that some form of AI was deployed in 99 out of 107 stroke units in England.

e-Stroke, developed by Brainomix, has been implemented in hundreds of hospitals in the UK, as well as in Europe, Asia and the US.

The software provides real-time interpretation of brain scans to specialist and non-specialist clinicians to guide treatment and transfer decisions for stroke patients.

Currently, the cost of the software’s licence for a comprehensive stroke centre is around £30,000 annually and around £15,000 per year for an acute stroke centre.

Ischemaview’s RapidAI platform also works to combine images and workflow to help doctors make faster triage or transfer decisions.

Averaging around £20,000 a year per centre, the platform has been rolled out across several NHS trusts, including University Hospitals Birmingham NHS Foundation Trust.

NICE has also recommended ten additional AI software programmes that can only be used in research. These include Accipio, BioMind and Neuro Solution.

Further research is needed on these tools to support the review and reporting of CT scans for people who have had a suspected stroke.

The recommendation builds on Brainomix and Visionable’s partnership, which focused on advancing the e-Stroke software for stroke care in November last year.

Previously trialled at Ipswich Hospital, Visionable’s digital healthcare collaboration platform and Brainomix’s e-Stroke platform outperformed national benchmarks on the number of patients assessed by a stroke consultant within 24 hours, at around 90% versus 80%.

2022-03-09

Biomind Labs Inc. (“Biomind Labs” or the “Company”) (NEO: BMND) (OTC: CRSWF) (FSE: 3XI), a leading biotech company focused on innovation and research on endogenous tryptamines (biomolecules acting as psychoneuroplastogens) for the treatment of mental health disorders and beyond, is pleased to announce that its second Phase II clinical trial on N,N-dimethyltryptamine (“DMT”) for treatment-resistant depression has been approved by the Brazilian Institutional Review Board (the “IRB”).

“Just a few months ago, we announced the approval of our first Phase II clinical trial with an intramuscular formulation of DMT for treatment-resistant depression. Today with great enthusiasm we announce the IRB’s approval of our second Phase II clinical trial with an inhaled formulation of DMT, which may allow us to identify the most effective method of administration for our DMT candidate in patients with depression”, commented Alejandro Antalich, CEO of Biomind Labs.

“The effects of intramuscular DMT last for about one hour, while the inhaled formulation is expected to shorten these effects to a timeframe of between ten and fifteen minutes. Our goal is to find significant antidepressant effects with the shortest experience, pursuing one of our main pillars as a company, affordability. Our goal is to develop effective and safe novel pharmaceuticals that are affordable to patients regardless of income level. We understand that long-lasting psychedelic effects make it difficult to create adequate clinical protocols to serve a larger number of patients, and this is the main reason why we focus on fast-acting psychedelics”, concluded Antalich.

In this second Phase II clinical trial, the Company will test a new approach to psychedelic therapy, a psychiatry intervention-based model, allowing rapid and feasible integration of fast-acting psychedelic medicines into clinical practices already in existence. Consequently, provided that this second Phase II clinical trial is successful, such practices may receive a new tool, allowing practitioners to prescribe their patients specialized psychedelic medicines that may boost ongoing treatments.

This second Phase II clinical trial is scheduled to begin in the upcoming weeks. The trial will be conducted by the Company’s Scientific and Clinical Advisor Neuroscientist Dr. Dráulio Araújo and will include 40 individuals. Given the safety profile, the absence of overdose, tolerance and previous results from the first randomized, placebo-controlled trial to test a psychedelic substance in treatment-resistant depression led by Dr. Araújo, Biomind Labs continues to reinforce the Molecule Clinical Development Dossier of its novel pharmaceuticals, enabling a potentially successful molecule-to-market lifecycle while minimizing the risks of failure.

Treatment-resistant depression · Dimethyltryptamine

100 drug deals associated with Hanalytics Pte Ltd.

100 translational medicine records associated with Hanalytics Pte Ltd.

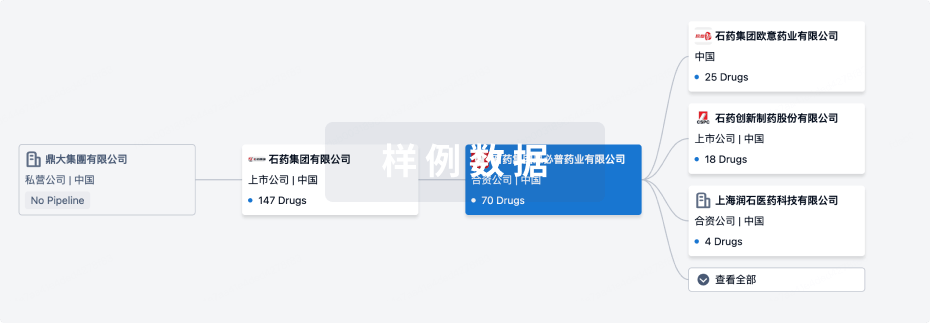

组织架构

使用我们的机构树数据加速您的研究。

登录

或

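Pipeline layout

Pipeline snapshot as of 2026-05-21

Drugs in the pipeline layout are counted with the current organization and its subsidiaries as the drug organization. Early Phase 1 is merged into Phase 1, Phase 1/2 into Phase 2, and Phase 2/3 into Phase 3.

Phase 2: 1

Current projects

| Drug (target) | Indication | Global highest development status |
|---|---|---|
| Dimethyltryptamine (5-HT receptor) | Treatment-resistant depression | Phase 2 |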

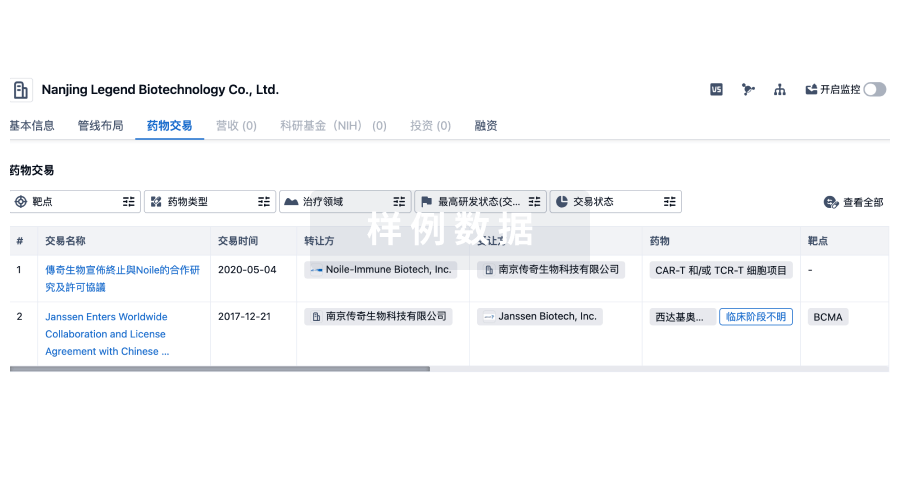

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

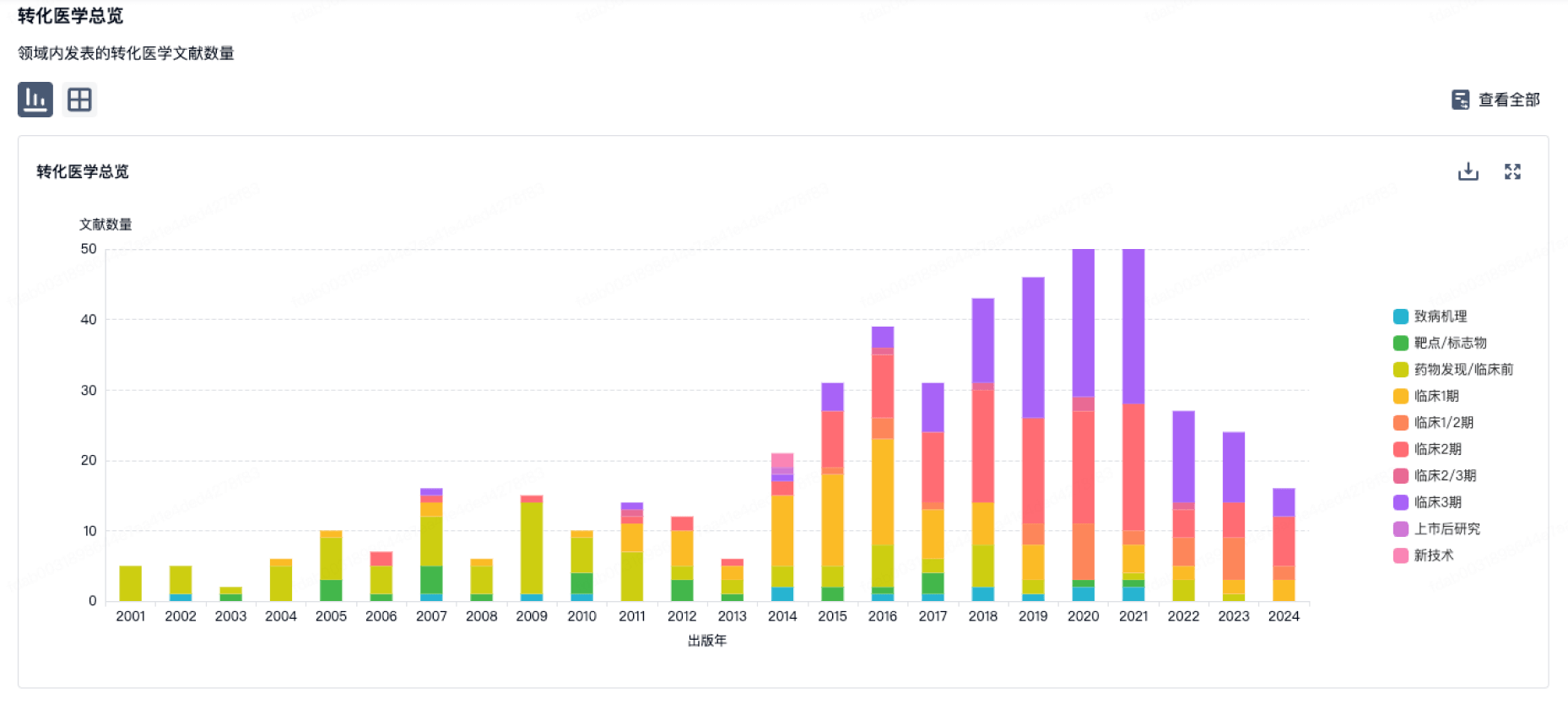

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

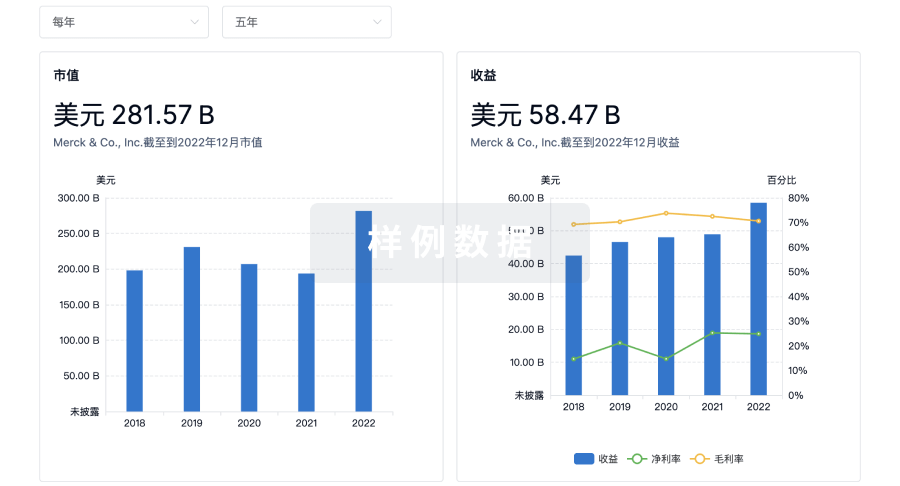

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或
