
Updated: 2026-04-28

Shantou University


Overview

Tags

Oncology

Nervous system diseases

Other diseases

Small molecule drugs

Chemical drugs

Exosome drugs


| Top five drug types | Count |
|---|---|
| Small molecule drugs | 6 |
| Chemical drugs | 2 |
| Exosome drugs | 1 |
| siRNA | 1 |
| Polysaccharide drugs | 1 |

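Associations

10 drugs associated with Shantou University

| Mechanism of action | Target | Originator | Active indications | Inactive indications | Highest R&D phase | First approval country/region | First approval date |
|---|---|---|---|---|---|---|---|
| NET inhibitor [+1] | | | | | Approved | United States | 2004-08-03 |
| 30S subunit inhibitor | | - | | | Approved | - | 1959-01-01 |
| PIM2 inhibitor | | | | - | Phase 1 | - | - |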

41 clinical trials associated with Shantou University

NCT07339371

A Prospective Cohort Study on Comprehensive Care of Sleep Disordered Breathing (Care-SDB Study)

The Comprehensive Care of Sleep Disordered Breathing study (Care-SDB) is a prospective, multi-center, registry-based cohort study designed to investigate the integrated management of sleep-disordered breathing (SDB). The investigators aim to establish a nationwide SDB cohort and biobank to identify prognostic biomarkers, explore pathogenic mechanisms, and evaluate optimal treatment models. A total of 11,100 adult patients with recent sleep monitoring will be enrolled and followed longitudinally for up to 5 years. Data collection, including clinical outcomes and major adverse events, will be managed via a unified Electronic Data Capture platform. The results from Care-SDB are expected to provide critical evidence-based guidance for the risk stratification, standardized intervention, and personalized management of patients with sleep-disordered breathing.

Start date: 2026-01-01 | Sponsor/collaborator: Beijing Anzhen Hospital, Capital Medical University [+10]

ChiCTR2500115836

Immersive Cultivation of Medical Students' Procedural and Communication Skills Empowered by Voice-Interactive AI Agents

Start date: 2025-12-31

ChiCTR2500115149

Dose–Response Relationship Between Frailty and Feeding Intolerance and Functional Prognosis in Critically Ill Older Adults

Start date: 2025-12-01


4,404 publications (pharmaceutical) associated with Shantou University

2026-08-01 · TALANTA

Automated analysis of ultra-trace 236U and natural uranium isotopes via integrated sample preparation and ICP-MS/MS detection

Article

Authors: Liu, Xuemei ; Wang, Hai ; Wu, Junwen ; Chen, Jisheng ; Luo, Yue ; Yang, Chuting ; Bu, Wenting ; Chen, Bingjun ; Ni, Youyi

Highly efficient and accurate analysis of ultra-trace anthropogenic ²³⁶U in the presence of natural uranium isotopes is essential for nuclear forensics and environmental monitoring. In this study, we developed an integrated analytical method for the determination of ²³⁴U, ²³⁵U, ²³⁶U, and ²³⁸U by combining highly automated sample preparation with highly sensitive ICP-MS/MS detection. Automated total dissolution was employed to ensure complete extraction of both endogenous and exogenous uranium from solid matrices, while reproducible chemical separation was achieved using an automated platform equipped with regenerable UTEVA resin. This optimized procedure yielded exceptionally low operational blanks for ²³⁶U at the femtogram level. Moreover, the final eluent volume was minimized to 1.5 mL, allowing direct introduction into the ICP-MS/MS. By utilizing a membrane desolvation sample introduction system and a novel mass-shift mode (targeting UO₂⁺ species), the ICP-MS/MS sensitivity exceeded 4.1 × 10⁶ cps/ppb, while the ²³⁵UH⁺ interference formation rate for ²³⁶U was suppressed to 7.1 × 10⁻¹⁰. Consequently, the achieved detection limits for ²³⁶U and the ²³⁶U/²³⁸U atom ratio were as low as 1.85 fg/g and 5 × 10⁻¹², respectively. This method was successfully applied to determine the uranium isotopic composition in sediments from the sea area adjacent to the Daya Bay Nuclear Power Plant. These results demonstrate that the proposed method provides a robust and high-throughput solution for the accurate quantification of ultra-trace ²³⁶U in environmental samples, such as those impacted by global fallout.

2026-06-01·WATER RESEARCH

Atomically engineered Fe/Mn catalysts enable ultrafast self-sustaining water purification via oxidant-free Fenton-like electron-transfer reaction

Article

Authors: Wu, Mengjie ; Zhao, Chenchen ; Wu, Chuyang ; Chen, Zhanli ; Wang, Tianqi ; Zhang, Ping ; Xu, Xing ; Jiang, Fei ; Zou, Youqin

Green Fenton-like reactions based on monometallic single-atom dual-reaction-center systems have emerged as promising strategies for sustainable water purification owing to their oxidant-free operation and low energy demand. However, their practical deployment is often hindered by limited degradation kinetics and unresolved mechanisms. Here, we report a carbon nitride-supported Fe/Mn bimetallic catalyst composed of Fe nanoclusters and adjacent Mn single atoms (FeMn-CN) that enables ultrafast and self-sustaining pollutant degradation under oxidant-free conditions. The catalyst achieves 99.5 % removal of bisphenol A (BPA) within 3 min, setting an ultrahigh rate to date among self-sustaining water purification systems. Combined experimental investigations and density functional theory calculations reveal that the reaction proceeds predominantly via a direct electron-transfer pathway. Fe nanoclusters serve as primary electron acceptors, extracting electrons from BPA without external oxidants, while adjacent Mn single atoms coordinated in Mn-N4 sites synergistically enhance the electron-accepting capacity of Fe and substantially lower the charge-transfer energy barrier. This atomic-level cooperation endows FeMn-CN with exceptional activity across a broad pH range (pH 2-10), strong tolerance to complex water matrices, high selectivity toward electron-rich contaminants and facile regeneration. Furthermore, continuous-flow reactor tests and life-cycle assessment collectively verify the scalability and superior environmental sustainability of the FeMn-CN process. This work establishes an atomic-level design principle for electron-transfer-dominated self-sustaining remediation materials, advancing the development of next-generation oxidant-free water purification technologies.

2026-06-01·FOOD CHEMISTRY

Farmed Atlantic salmon (Salmo salar L.) and the consumer: Variation in the nutritional composition of raw fillet cuts

Article

Authors: Struthers, W A ; Betancor, M B ; Gong, X ; Sprague, M ; Scobbie, A ; Calloni, S ; Tocher, D R ; Di Toro, J

The chemical composition of farmed Atlantic salmon can vary according to both body-size and diet composition. Since consumers typically purchase portions, understanding nutrient distribution is important. Twenty-one similar-sized salmon, fed the same feed, were filleted and divided into five equal-length cuts for analysis. Lipid content, class and fatty acid composition varied throughout the fillet with tails supplying the least amount (g·100 g⁻¹) of EPA and DHA omega-3 fatty acids. Additionally, considerable variation was observed between the two industry-standard cuts, which combined represented the fillet average well. Protein and microminerals were relatively stable across the fillet with subtle differences unlikely to impact consumers. Carotenoid pigment decreased from head to tail, reflecting lipid and protein, contrary to previous studies. Results are of interest and relevance to producers and retailers in terms of understanding variances in fillet composition, refining nutritional labelling and accurately informing consumers about the nutrient profile of salmon portions.

47 news items (pharmaceutical) associated with Shantou University

2026-04-21


当 8 年糖尿病史的张先生,空腹血糖从 13mmol/L 降至正常、降糖药剂量减半,重新找回十年未有的精力时,这不仅是一组数字的改变,更是糖尿病治疗从 “控糖” 到 “治本” 的全新突破。

2025 年国际糖尿病联盟数据显示,全球成人糖尿病患者达 5.89 亿,中国以 1.48 亿患者居世界首位,患病率 13.8%,且发病年龄持续年轻化,传统降糖治疗的瓶颈亟待突破。

传统治疗聚焦胰腺与胰岛素,却深陷 “药不能停,血糖仍高” 的困境,难以解决胰岛素抵抗和胰岛功能衰退的核心问题。

而菌群重建(FMT)的出现,让肠道微生态成为糖尿病干预的新靶点,为疾病逆转带来革命性希望。

01 Gut microbiota: the "behind-the-scenes regulator" of diabetes

长期控糖聚焦胰腺,却忽视肠道菌群的核心调控作用 —— 血糖波动是表象,肠道微生态失衡才是根源。

斯坦福大学《Cell》研究证实,发酵食品可显著提升菌群多样性、降低炎症因子,而高纤维饮食无此效果。

这揭示了核心规律:菌群的 “丰富与平衡” 是缓解全身慢性炎症的关键。


糖尿病患者肠道有益菌锐减,肠道屏障受损,内毒素入血引发炎症,进而导致胰岛素抵抗,肠道菌群紊乱正是糖尿病发生发展的重要诱因。

02 Clinical breakthrough: microbiota reconstruction makes "stopping or reducing medication" a reality

菌群失衡与血糖调控的恶性循环,是传统治疗的最大瓶颈,而菌群重建通过将健康供体的功能菌群标准化处理后移植入患者肠道,重建健康微生态,创造了传统药物难以企及的疗效。

案例一:从“三高”重负到全面逆转

42 岁的荣先生,220 斤的 “三高” 体质,5 年 2 型糖尿病史,糖化血红蛋白 9.2%,还伴有糖尿病周围神经病变,胰岛素抵抗指数高达 9.85。接受菌群重建后:

他的体重下降 5 公斤

胰岛素抵抗指数恢复至 1.20

糖化血红蛋白降至 6%

神经病变指标转阴

3 个疗程后停用所有降糖药,仅靠饮食运动即可控糖。

案例二:八年“老糖友”的药物减半之路

59 岁的张先生,8 年糖尿病史,空腹血糖长期徘徊在 11-13mmol/L,每日服用两种降糖药仍感疲惫腹胀。通过口服耐胃酸包埋的菌胶囊进行菌群重建:

1 个月腹胀消失、血糖趋稳

3 个月空腹血糖降至 6.5mmol/L

降糖药剂量减半,精力重回十年前。

这些案例并非孤例,荷兰《Gut》杂志研究早已证实,健康菌群移植可使胰岛素抵抗患者的全身胰岛素敏感性提升 23%,肝脏敏感性提升 34%。

03 Four mechanisms: breaking the vicious cycle of diabetes at its root

菌群重建的本质是肠道微生态的 “系统性生态重构”,通过多靶点、根源性干预,打破糖尿病的病理循环,核心在于四大通路:

修复肠道屏障:有益菌强化肠道防护,减少内毒素入血,从源头降炎症;

调节免疫稳态:抑制促炎菌、下调炎症因子,恢复胰岛素信号传导;

增强内源性降糖:降低 DPP-4 酶活性,延长 GLP-1 半衰期,放大自身降糖力;

调控全身代谢:调节胆汁酸、抑制肝脏糖异生,与二甲双胍联用增效。

汕头大学医学院研究证实,接受菌群重建的患者,肠道内褐色绿琉球菌等有益菌丰度显著增加,且与胰岛素抵抗改善呈正相关。

04 The future is here: from standardized transplantation to precision "microbiota prescriptions"

宏基因组学与 AI 技术推动下,糖尿病管理迈入菌群图谱个体化修复时代,未来菌群重建将升级为靶向配方,甚至打造肠道基因工程菌 “生物制药工厂”。

该技术已获 FDA 批准用于艰难梭菌感染,同时在炎症性肠病、帕金森病、抑郁症等慢病领域展开大量临床研究。正推动医学从疾病管理向生态健康维护转型。

对于亿万糖尿病患者而言,菌群重建带来的不仅是一种新疗法,更是一种新认知:人体的健康,取决于体内万亿微生物的生态平衡。重建肠道微生态,或许就是解锁糖尿病逆转、重获全身健康的关键钥匙。

⚠️温馨提示:文中方案均在专业指导下进行,个体有差异,具体需要专业人员评估后看适不适用


2026-04-20

文献解读

近日,上海交通大学医学院附属瑞金医院麻醉科罗艳主任团队在Brain Behavior and Immunity发表题为 “Targeting AARS1-dependent lactylation improves neuronal process plasticity and mitigates cognitive deficits in sepsis-associated encephalopathy” 的研究论文。首次系统揭示了脓毒症状态下,大脑海马区乳酸积累,通过AARS1依赖的乳酸化修饰(Kla),异常激活ATRIP-ATR信号通路,导致神经元突触损伤与可塑性下降的分子机制。更重要的是,研究发现了两种极具转化潜力的靶向干预策略:使用小分子化合物AZD6738抑制ATR活性,或利用天然氨基酸L-丙氨酸竞争性抑制AARS1与乳酸结合,均可在动物模型中有效恢复神经可塑性,挽救认知与行为缺陷。这项研究不仅为SAE的治疗提供了新的理论依据,更为脑内乳酸代谢异常相关的神经退行性疾病开辟了全新视角。

脓毒症(Sepsis),一种由感染引发的全身性炎症反应综合征,是全球重症监护病房(ICU)患者死亡的主要原因之一,也是一种全球范围内严重威胁生命的感染性疾病。据统计,全球每年有近5000万人罹患脓毒症,其中高达70%的脓毒症幸存者会留下长期的神经后遗症,即脓毒症相关脑病(Sepsis-associated encephalopathy, SAE),表现为记忆衰退、焦虑、抑郁等认知功能障碍,严重影响生活质量。然而,其核心病理机制一直悬而未决,临床上也缺乏特异性治疗手段。

文中使用了AniLab® LabState®实验动物视频分析系统

研究团队首先建立了经典的盲肠结扎穿刺(CLP)小鼠SAE模型,发现乳酸在脑内神经元突起中特异堆积:通过生化检测和新型AAV-FiLa病毒荧光乳酸传感器活体成像均证实,与假手术组相比,脓毒症小鼠大脑海马区神经元突起(轴突和树突)内乳酸水平显著升高,而非神经元胞体或其他脑区。同样发现乳酸化修饰同步上调:利用特异性抗体,检测出伴随乳酸累积,全局蛋白质的赖氨酸乳酸化(Kla)修饰在神经元突起中显著增强。此外,团队也发现神经结构与功能严重受损:通过高精度成像技术(Golgi高尔基染色、透射电镜)显示,模型小鼠的神经元突起总长度缩短、分支复杂度降低、树突棘密度显著减少,并出现髓鞘结构破坏等超微结构损伤。以上结果明确了乳酸积累 → 蛋白乳酸化 → 神经元结构损伤的因果链。

CLP诱导神经元突起中乳酸蓄积及乳酸化修饰,并伴随神经元结构损伤。

为了寻找乳酸化修饰的“执行者”与“受害者”,研究团队进行了一系列严谨实验:锁定关键乳酸转移酶AARS1:通过对多种候选酶的筛选,团队鉴定出 “丙氨酰-tRNA合成酶1(AARS1)” 是神经元突起中催化乳酸化修饰的主导酶。体外重构实验证实,AARS1能以乳酸为底物,直接催化蛋白发生乳酸化。特异性抑制AARS1或敲低其表达,能显著减轻SAE引起的神经元损伤。

为了找到关键乳酸化底物ATRIP,团队利用免疫沉淀-质谱(IP-MS)技术,锁定了ATR互作蛋白(ATRIP),并确认它是AARS1的直接作用靶点之一。AARS1会催化ATRIP第127位赖氨酸(K127)发生乳酸化。

ATRIP K127位点的乳酸化修饰,如同一个“分子开关”,显著增强了ATRIP与ATR蛋白的结合亲和力,从而非依赖于DNA损伤地异常激活了ATR激酶及其下游信号通路。激活的ATR信号通过驱动神经元的过度自噬,最终导致了PSD95(突触支架蛋白)表达下调、β-APP(神经元损伤标志物)累积等一系列突触功能破坏和神经突起损伤事件。脓毒症带来的全身高乳酸血症,使过量乳酸涌入大脑海马神经元。这些乳酸被“兼职”酶AARS1利用,给ATRIP蛋白“打”上乳酸化标签。被“标签”的ATRIP异常活跃,不断激活ATR“报警器”,后者启动“自毁程序”(过度自噬),破坏了神经元的正常连接和结构。

AARS1-mediated lactylation of ATRIP at K127 activates the ATRIP/ATR pathway, leading to neuronal process damage in sepsis-associated encephalopathy (SAE).

基于以上精细机制,研究团队测试了两条互补的干预路径,结果令人振奋:

靶向下游通路——抑制ATR激酶

团队选用了一款已进入肿瘤临床试验的ATR抑制剂AZD6738。口服给药后,SAE小鼠海马中ATR活性显著被抑制,神经元损伤标志物β-APP下降,突触蛋白PSD95恢复。更重要的是,小鼠在新物体位置、新物体识别和情境恐惧记忆测试中的认知表现得到了显著改善,焦虑样行为也明显减轻。

靶向上游酶活——竞争性抑制AARS1

团队巧妙地利用了L-丙氨酸——AARS1在合成丙氨酰-tRNA过程中的天然氨基酸底物。因其结构与乳酸相似,L-丙氨酸能够竞争性占据AARS1的结合位点,阻断乳酸与AARS1的结合。脑内注射L-丙氨酸后,SAE小鼠海马的ATRIP乳酸化水平、ATR通路激活程度显著降低,并同样呈现出神经结构修复、认知行为功能恢复的良好效果。

此研究提出的ATR抑制剂和L-丙氨酸,前者代表精准的靶向药物干预,后者则提供了一种潜在安全、基于天然代谢产物的营养或药物干预思路,临床转化前景广阔。

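AARS1-mediated lactylation of ATRIP at the K127 site activates the ATRIP/ATR pathway, leading to neuronal process damage in sepsis-associated encephalopathy (SAE).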

综上所述,这项工作首次系统阐释了脑内乳酸代谢异常——蛋白质翻译后修饰(乳酸化)——DNA损伤应答通路“兼职”功能失调——神经结构和功能损伤之间的完整因果链条。它不仅为SAE的药物研发提供了两个极具希望的切入点,更重要的意义在于,它将“乳酸代谢稳态”与“神经可塑性维护”两个关键领域深度联结。

考虑到在阿尔茨海默病、帕金森病、抑郁症等多种神经系统疾病中均发现了大脑乳酸水平的异常累积,本研究揭示的AARS1介导的乳酸化及下游信号轴,可能代表了一条全新的、跨疾病的共同病理机制和极具潜力的治疗窗口。

未来,对ATR抑制剂AZD6738的临床前安全性评价,以及对L-丙氨酸最佳给药方式和长期疗效的深入研究,有望将这一基础研究的重要发现,逐步推向临床应用,为数百万脓毒症相关脑病患者带来福音。

上海交通大学医学院附属瑞金医院麻醉科罗艳主任医师、李银娇医师为论文的共同通讯作者;罗士元医师、吕卓辰医师为论文的共同第一作者。

罗艳,医学博士、主任医师、教授、博士研究生导师,上海交通大学医学院附属瑞金医院麻醉科主任,上海交通大学医学院麻醉与危重病学系教研室副主任。任中国医师协会麻醉学医师分会副会长、中国人体健康科技促进会麻醉与围术期科技专业委员会主任委员、世界华人麻醉医师协会副会长、上海市医学会麻醉专科分会副主任委员、上海市医师协会麻醉科医师分会、疼痛科医师分会副会长、上海市口腔医学会口腔麻醉学专业委员会副主任委员。曾获上海市青年五四奖章、上海市巾帼建功奖等荣誉,主持和参加原卫生部和国家自然科学基金面上项目等多项课题的研究。

吕卓辰 主治医师,上海交通大学医学院附属瑞金医院麻醉科;研究方向:围术期脑功能保护。

李银娇 住院医师,上海交通大学医学院附属瑞金医院麻醉科;研究方向:围术期脑功能保护。

罗士元 住院医师,上海交通大学医学院附属瑞金医院麻醉科;研究方向:脓毒症相关性脑病与围术期认知功能障碍的机制研究。

本文来源:Luo, S. Y., Lü, Z. C., Luo, Y., & Li, Y. J. (2026). Targeting AARS1-dependent lactylation improves neuronal process plasticity and mitigates cognitive deficits in sepsis-associated encephalopathy. Brain Behavior and Immunity, 135 (2026), 106493.

本文网址:https://doi.org/10.1016/j.bbi.2026.106493

产品介绍

LabState® Vision实验动物视频分析系统

LabState® Vision 实时硬件控制(RTPP实时位置偏爱)

视频来源:Dai, Z., Liu, Y., Nie, L. et al. Locus coeruleus input-modulated reactivation of dentate gyrus opioid-withdrawal engrams promotes extinction. Neuropsychopharmacol. 48, 327–340 (2023). https://doi.org/10.1038/s41386-022-01477-0

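LabState® Suite for Windows is a professional software suite for real-time control and analysis of laboratory animal behavior, integrating hardware control, image analysis, experimental design, and data collection. It has three components: LabState® (graphical real-time hardware control), LabState® Vision (image analysis), and LabState® Monitor (long-term home-cage monitoring, such as licking, feeding, body weight, and locomotion). Modules can be combined for different behavioral assays, and users can define their own tasks for operant behavior (such as self-administration, drug discrimination, five-hole attention tasks, and auditory and olfactory discrimination), conditioned fear, shuttle box, acoustic startle reflex, touchscreen tasks, conditioned place preference, locomotor activity, forced swimming, tail flick, and other animal behavior experiments.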

关于我们

Product

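LabState® behavioral software suite (LabState® Suite for Windows). LabState® is used mainly for real-time control and analysis of experimental systems for operant conditioning, conditioned fear memory, shuttle box, acoustic startle reflex, and video tracking (such as locomotor activity, conditioned place preference, forced swimming, and tail flick). We provide customers with behavioral research instruments and technical support that are as complete as possible at a cost-effective price, in service of life science research in China.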

Notable Users

北京大学中国药物依赖性研究所、军事医学科学院毒物药物研究所、国家北京药物安全评价研究中心、军事医学研究院生物医学分析中心、公安部禁毒情报技术中心、中国科学院心理研究所、中国科学院上海药物研究所、中国科学院神经科学研究所、中国科学院遗传发育研究所、中国科学院昆明动物所、中国科学院深圳先进技术研究院、北京生命科学研究所、北京大学生命学院、北京大学神经科学研究所、清华大学生命学院、浙江大学基础医学院、浙江大学求是高等研究院、浙一医院、浙二医院、复旦大学脑科学转化研究院、宁波大学医学院、中国科技大学、华中科技大学同济医学院、温州医科大学、中南大学湘雅医学院、中山大学中山医学院、福建医科大学、国家烟草质量监督检验中心、江汉大学、汕头大学、湖北理工学院、兰州大学、暨南大学、上海市精神卫生中心、上海第一医院、华东师范大学、上海大学、华南师范大学、西安交通大学、山西中医药大学、陕西师范大学、西湖大学(西湖高等研究院)、武汉科技大学、中国医科大学、浙江中医药大学、同济大学、上海交大医学院等(排名不分先后)

www.anilab.cn

AniLab Scientific Instruments(Ningbo)Co., Ltd.

邮箱:sales@anilab.cn

临床研究

2026-04-17

·眼科空间

文章来源:中华医学会眼科学分会神经眼科学组,中国研究型医院学会神经眼科专业委员会. 中国脱髓鞘性视神经炎靶向生物制剂治疗专家共识(2026年)[J]. 中华眼科杂志,2026,62(4):247-260. DOI:10.3760/cma.j.cn112142-20250525-00247.

摘要

脱髓鞘性视神经炎(DON)是一种累及视神经、与自身免疫反应相关的炎性脱髓鞘病变。近年随着生物技术飞速发展,新型靶向生物制剂在DON治疗中展现出独特优势,且获得批准用于临床的靶向生物制剂种类逐渐增多,而其长期应用的相关感染风险和不良反应不容忽视,如何合理、有效、安全使用各种靶向生物制剂治疗DON是临床医师必须应对的新挑战。中华医学会眼科学分会神经眼科学组联合中国研究型医院学会神经眼科专业委员会,总结和分析靶向生物制剂的最新临床研究结果,结合其在中国患者人群中的临床应用经验,针对靶向生物制剂治疗DON的适应证、疗效、感染风险、使用方法、特殊人群以及安全性等多方面进行充分讨论,达成共识性意见,以期为中国临床使用靶向生物制剂规范化治疗DON提供理论依据和实践指导。

Keywords: demyelinating diseases; optic neuritis; biological factors; therapy; consensus

版权归中华医学会所有。

未经授权,不得转载、摘编本刊文章,不得使用本刊的版式设计。

除非特别声明,本刊刊出的所有文章不代表中华医学会和本刊编委会的观点。

脱髓鞘性视神经炎(demyelinating optic neuritis,DON)为视神经炎的常见类型,是一种主要累及中青年群体、与自身免疫反应紧密相关的炎性脱髓鞘病变。临床表现为急性或亚急性视力下降、眼痛、色觉异常及视野缺损等,严重损伤视觉功能,极大降低患者的生活质量 [ 1 ] 。DON包括特发性脱髓鞘性视神经炎(idiopathic demyelinating optic neuritis,IDON)、视神经脊髓炎谱系疾病(neuromyelitis optica spectrum disorder,NMOSD)相关性视神经炎(neuromyelitis optica spectrum disorder related optic neuritis,NMOSD-ON)、髓鞘少突胶质细胞糖蛋白(myelin oligodendrocyte glycoprotein,MOG)抗体相关性视神经炎(myelin oligodendrocyte glycoprotein antibodies associated optic neuritis,MOG-ON)、多发性硬化(multiple sclerosis,MS)相关性视神经炎(multiple sclerosis related optic neuritis,MS-ON)、慢性复发性炎性视神经病变(chronic relapsing inflammatory optic neuropathy,CRION)等不同亚型 [ 1 ] 。DON分为急性期和维持期,治疗的核心在于抑制异常免疫反应,促进神经组织自我修复。急性期指疾病突然发作、出现新的神经功能损伤症状的阶段,传统治疗方法主要为糖皮质激素冲击治疗、血浆置换、免疫球蛋白治疗等,治疗目标是快速控制炎性反应,减轻损伤,促进功能恢复。维持期是指疾病急性期发作后的相对稳定阶段,目前主要常规治疗方法为糖皮质激素、传统免疫抑制剂(吗替麦考酚酯、硫唑嘌呤等)治疗,治疗目标是预防疾病复发,降低残疾累积风险。尽管这些治疗方法可一定程度缓解病情,但部分患者治疗效果不理想,且同时面临药物的不良反应和治疗风险。

近年来,新型靶向生物制剂逐步进入临床研究阶段。其具有选择性高、亲和力强以及半衰期长等显著优势,可高效靶向作用于特定的免疫细胞或免疫分子,从而发挥治疗功效。利妥昔单克隆抗体(单抗)(rituximab,RTX)作为较早用于治疗DON的靶向生物制剂之一,在临床应用中显示出良好的治疗效果 [ 2 , 3 , 4 , 5 ] ,且被国内外多个共识、指南推荐可用于治疗NMOSD-ON和MOG-ON [ 1 , 6 , 7 , 8 ] 。随着药物研发以及临床研究不断深入,越来越多靶向生物制剂,如萨特利珠单抗(satralizumab)、奥法妥木单抗(ofatumumab)、伊奈利珠单抗(inebilizumab)、依库珠单抗(eculizumab)等相继获批应用于临床 [ 6 ] ,并显示出各自独特的优势和潜力,为临床相关诊疗工作提供了更为多元化、个性化的选择空间,向DON个体化精准治疗迈出了坚实的一步。

目前共有6种靶向生物制剂用于治疗不同亚型DON( 表1 )。RTX和白细胞介素6(interleukin-6,IL-6)受体单抗托珠单抗治疗视神经炎属于超适应证用药。3种靶向生物制剂(萨特利珠单抗、伊奈利珠单抗和依库珠单抗)获批用于年龄≥12岁的青少年(仅萨特利珠单抗)和成人水通道蛋白4(aquaporin-4,AQP-4)抗体阳性NMOSD的维持期治疗;奥法妥木单抗获批用于治疗MS和临床孤立综合征。然而截至目前,针对DON仍缺乏标准化治疗方案。鉴于此,中华医学会眼科学分会神经眼科学组和中国研究型医院学会神经眼科专业委员会组织相关领域专家,经过充分讨论,围绕DON靶向生物制剂标准化治疗方案达成共识性意见,旨在为临床开展相关工作提供切实有效的建议和参考依据。

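Table 1. Clinical indications and approval status of targeted biologics

Note: AQP-4, aquaporin-4; NMOSD, neuromyelitis optica spectrum disorder; NMOSD-ON, NMOSD-related optic neuritis; DON, demyelinating optic neuritis; MS, multiple sclerosis; MS-ON, MS-related optic neuritis; mAb, monoclonal antibody; the National Reimbursement Drug List refers to the National Drug List for Basic Medical Insurance, Work-Related Injury Insurance and Maternity Insurance.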

I. Monoclonal antibodies targeting cluster of differentiation (CD) 20 (anti-CD20 mAbs)

靶向CD20单抗可与B淋巴细胞表面的CD20结合,特异性杀伤表达CD20的B淋巴细胞,进而消除B淋巴细胞,阻断炎性反应,减少细胞因子和自身抗体产生,从而达到控制病情的目的。其广泛用于治疗中枢神经系统脱髓鞘疾病。如静脉输注的人鼠嵌合型IgG单抗RTX是B淋巴细胞耗竭治疗的代表性靶向生物制剂,2002年在我国获批用于临床治疗B淋巴细胞淋巴瘤。目前,越来越多证据支持RTX治疗DON的安全性、耐受性和有效性 [ 9 , 10 , 11 , 12 , 13 ] ,但目前仍属于超适应证用药。新一代全人源靶向CD20单抗奥法妥木单抗可皮下注射,其安全性和耐受性大幅提升。

(一)RTX

1.临床适用疾病类型

(1)NMOSD-ON:是DON最常见的亚型。RTX治疗AQP-4抗体阳性NMOSD的多中心随机对照试验(randomized controlled trial,RCT)研究结果显示,对照组有7次复发事件,RTX治疗组无复发病例;RTX治疗可显著降低 AQP-4抗体阳性NMOSD的复发率 [ 2 ] 。多项非RCT队列研究结果均证实,RTX常规剂量或低剂量治疗NMOSD-ON,均可降低年复发率(研究期间所有患者的复发总次数与所有患者的总随访年数比值;比值越低代表疾病控制越好)和扩展残疾状态评分量表(expanded disability status scale,EDSS)评分 [ 3 , 4 , 5 , 9 , 10 , 11 ] 。

(2)MOG-ON:荟萃分析和观察性队列研究结果均显示,MOG抗体相关性疾病(myelin oligodendrocyte glycoprotein antibody-associated disease,MOGAD)使用RTX治疗停止后的年复发率较治疗前显著降低。但部分MOGAD患者在RTX治疗期间仍复发,其相关机制有待进一步探讨 [ 12 , 13 ] 。

(3)MS-ON:MS的B淋巴细胞倾向于促炎性分布,驱动脑皮质病理改变,并导致神经功能障碍。RTX作为可选择的疗法,通过清除外周血中的B淋巴细胞,减少自身免疫反应攻击神经髓鞘,从而发挥治疗作用,有效抑制MS复发 [ 14 , 15 ] 。目前MS-ON并不属于RTX获批的适应证,RTX在MS治疗中的应用还需要更多高质量研究进一步明确疗效和安全性。鉴于目前获批的MS免疫修饰药物逐渐增多,在使用时应由经验丰富的医师根据患者具体情况,权衡利弊后谨慎决策。

(4)IDON和CRION:有关RTX治疗IDON和CRION的研究报道较少,既往研究结果证实,RTX可降低IDON和CRION的复发率 [ 16 , 17 ] 。
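The annualized relapse rate used in the NMOSD-ON item above is defined as the total number of relapses across all patients divided by the total patient-years of follow-up. A minimal sketch of that arithmetic follows; the `PatientFollowUp` type and the three-patient cohort are hypothetical illustration data, not figures from any cited study.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PatientFollowUp:
    relapses: int           # relapses observed during follow-up
    follow_up_years: float  # length of follow-up, in years


def annualized_relapse_rate(cohort: List[PatientFollowUp]) -> float:
    """Total relapses divided by total follow-up years (lower is better)."""
    total_relapses = sum(p.relapses for p in cohort)
    total_years = sum(p.follow_up_years for p in cohort)
    if total_years <= 0:
        raise ValueError("total follow-up time must be positive")
    return total_relapses / total_years


# Hypothetical cohort: 3 relapses over 4.0 patient-years.
cohort = [
    PatientFollowUp(relapses=2, follow_up_years=1.5),
    PatientFollowUp(relapses=0, follow_up_years=2.0),
    PatientFollowUp(relapses=1, follow_up_years=0.5),
]
arr = annualized_relapse_rate(cohort)
```

Because the ratio pools all patients, a patient with long relapse-free follow-up lowers the rate even though they contribute no events.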

2.使用方法和疗效监测

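(1) Standard intravenous regimen: 375 mg/m² of body surface area by intravenous infusion once weekly for 4 weeks; or 1 000 mg by intravenous infusion for 2 doses, 2 weeks apart [ 2 , 5 , 9 , 10 ].

(2) Low-dose intravenous regimen: 100 mg once weekly for 4 consecutive doses; or 200 mg per dose for 2 consecutive doses, 2 weeks apart [ 13 , 18 ].

(3) Before every dose, premedication against infusion reactions is required, including a glucocorticoid, an antihistamine (such as diphenhydramine), and an antipyretic analgesic (such as acetaminophen).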
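The body-surface-area regimen above reduces to one multiplication per infusion. The sketch below only illustrates that arithmetic; the function name and the example body surface area of 1.8 m² are assumptions made for illustration, and how body surface area is estimated is outside what the consensus states.

```python
def rtx_standard_dose_mg(bsa_m2: float, mg_per_m2: float = 375.0) -> float:
    """Per-infusion dose for the 375 mg/m^2 regimen, given body surface area."""
    if bsa_m2 <= 0:
        raise ValueError("body surface area must be positive")
    return mg_per_m2 * bsa_m2


# Hypothetical patient with a body surface area of 1.8 m^2.
per_infusion_mg = rtx_standard_dose_mg(1.8)
# The regimen is once weekly for 4 weeks, so the course is 4 infusions.
course_total_mg = per_infusion_mg * 4
```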

维持期建议监测B淋巴细胞亚群,若CD19阳性B淋巴细胞比例>1%,则建议重复静脉输注初始方案同等剂量药物;条件允许下优选监测CD27阳性记忆B淋巴细胞再生状况,进行重复静脉输注;无条件监测B淋巴细胞时,可每6个月重复静脉输注,但预防效率较低 [ 17 ] 。并非所有患者均对RTX治疗有效,再治疗间隔时间也各不相同。 FCGR3A- FF基因型与RTX治疗2年内B淋巴细胞缺乏风险、再治疗间隔缩短及临床复发次数≥1次相关,对RTX治疗反应不佳的 FCGR3A- FF基因型在中国人群中占比高达43.0% [ 19 ] 。
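The maintenance-phase rule above (repeat the infusion when CD19-positive B lymphocytes exceed 1%, or every 6 months when monitoring is unavailable) can be written as a small decision function. This is an illustrative sketch only: the function and parameter names are hypothetical, and the preferred CD27-positive memory B-cell monitoring is not modeled because the text gives no threshold for it.

```python
from typing import Optional

CD19_THRESHOLD_PERCENT = 1.0   # from the text: CD19+ B lymphocytes > 1%
FALLBACK_INTERVAL_MONTHS = 6   # from the text: every 6 months without monitoring


def rtx_reinfusion_due(cd19_percent: Optional[float],
                       months_since_last_infusion: float) -> bool:
    """Return True when a repeat infusion is indicated under the stated rule."""
    if cd19_percent is not None:
        # B-cell monitoring available: decide on the CD19+ proportion alone.
        return cd19_percent > CD19_THRESHOLD_PERCENT
    # No monitoring available: fall back to the fixed 6-month interval.
    return months_since_last_infusion >= FALLBACK_INTERVAL_MONTHS
```

For example, `rtx_reinfusion_due(1.5, 3)` is true because the CD19-positive proportion exceeds 1%, while `rtx_reinfusion_due(None, 4)` is false because the fixed interval has not yet elapsed.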

推荐意见1:

对于AQP-4抗体阳性NMOSD-ON,可在适应证内药物(萨特利珠单抗、伊奈利珠单抗和依库珠单抗)不可及的情况下,选择RTX治疗作为维持期一线治疗方案;建议复发性MOG抗体阳性DON、MS-ON可选择RTX治疗以降低复发率。

(二)奥法妥木单抗

奥法妥木单抗为一种重组人单克隆免疫球蛋白G1(IgG1)抗体,可与B淋巴细胞表面的CD20结合。奥法妥木单抗与大(145~161位氨基酸)和小(74~80位氨基酸)细胞外环残基表位结合,2个表位稳定连接,结合力更高且脱靶速度更低。体外研究和临床试验的荟萃分析结果显示,奥法妥木单抗的疗效不受 FCGR3A基因多态影响,对所有患者有效。因此,奥法妥木单抗对B淋巴细胞疾病具有更强的疗效 [ 20 ] 。

1.临床适用疾病类型

奥法妥木单抗于2021年12月在中国获批应用于临床,于2023年1月获批用于治疗成人复发型MS,包括临床孤立综合征、复发缓解型MS和活动性继发进展型MS,并正式纳入《国家基本医疗保险、工伤保险和生育保险药品目录》(国家医保目录)。

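Large global phase III, double-blind, multicenter clinical studies of this anti-CD20 mAb enrolled more than 1 800 patients with MS, with treatment lasting up to 30 months and safety follow-up or extension studies continuing to 5 years. The annualized relapse rate in the ofatumumab group was 0.1, and the risk of disability progression was 34% lower than in the teriflunomide group. MRI showed that continuous ofatumumab treatment significantly suppressed lesion progression for up to 5 years [ 21 , 22 , 23 ].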

2.使用方法和疗效监测

奥法妥木单抗的推荐剂量:(1)在腹部、大腿或上臂外侧通过皮下注射奥法妥木单抗,于第0、1和2周皮下注射20 mg,从第4周开始,每月1次皮下注射20 mg [ 23 ] 。(2)在个体化治疗的维持期,可监测B淋巴细胞亚群,若CD19阳性B淋巴细胞比例>1%,则建议重复注射初始方案同等剂量药物。

推荐意见2:

奥法妥木单抗适用于复发型MS-ON,包括临床孤立综合征、复发缓解型MS、活动性继发进展型MS,可作为长期免疫治疗的一线首选药物。

II. Monoclonal antibodies targeting CD19

伊奈利珠单抗是一种对CD19具有高度亲和力的人源化IgG1型单抗。由于浆母细胞和部分浆细胞可表达CD19,因此伊奈利珠单抗可直接靶向作用于产生致病性AQP-4抗体的浆细胞,而靶向CD20单抗则无此作用。伊奈利珠单抗的人源性IgG可提高其耐受性,降低免疫原性。伊奈利珠单抗的去岩藻糖基化结构可使其Fc(fragment constant)段与效应细胞Fcγ受体的亲和力增加10倍,并增强抗体依赖性细胞介导的细胞毒性作用 [ 24 ] 。

1.临床适用疾病类型

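Inebilizumab was approved for clinical use in China in March 2022; in January 2023 it was approved for the treatment of adult AQP-4 antibody-positive NMOSD-ON and formally added to the National Reimbursement Drug List. In the phase II and III study comparing inebilizumab with placebo for NMOSD, 213 AQP-4 antibody-positive NMOSD patients were randomized, 161 to inebilizumab and 52 to placebo. Compared with the placebo group, the inebilizumab group had a longer time to first relapse and a 77.3% lower risk of an attack (hazard ratio 0.227, P<0.0001) [ 24 ]. Among AQP-4 antibody-positive NMOSD patients treated with inebilizumab, the 4-year relapse-free rate reached 83%, and the incidence of adverse events was comparable to that with placebo [ 25 ].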
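The 77.3% risk reduction quoted alongside the hazard ratio of 0.227 follows from the usual conversion, relative risk reduction = (1 − hazard ratio) × 100%. A short sketch of that conversion is below; the function name is hypothetical, and the second value checked is the hazard ratio of 0.058 that this consensus reports for eculizumab in the Prevent study.

```python
def relative_risk_reduction_percent(hazard_ratio: float) -> float:
    """Convert a hazard ratio into a relative risk reduction, in percent."""
    if hazard_ratio < 0:
        raise ValueError("hazard ratio cannot be negative")
    return round((1.0 - hazard_ratio) * 100.0, 1)


inebilizumab_rrr = relative_risk_reduction_percent(0.227)
eculizumab_rrr = relative_risk_reduction_percent(0.058)
```

Both results match the percentages printed in the text (77.3% and 94.2%), which is a quick consistency check on the reported figures.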

在16例AQP-4抗体阴性NMOSD患者中,12例接受伊奈利珠单抗治疗(包含6例MOGAD患者),4例接受安慰剂治疗(包含1例MOGAD患者),在临床试验全部结束后,基于收集数据进行的探索性分析结果显示,无论是从双盲期(临床试验的核心阶段)还是开放标签扩展期(双盲期结束后开启的阶段,所有受试者均明确接受试验药物治疗)开始接受伊奈利珠单抗治疗,AQP-4抗体阴性NMOSD患者的年复发率从治疗前的1.700降至0.048,B淋巴细胞耗竭也达到预期水平,耐受性良好 [ 26 ] 。因此,伊奈利珠单抗治疗AQP-4抗体阴性NMOSD患者可能安全有效。由于研究的样本量有限,故需要进一步扩大样本量进行深入探讨。

2.使用方法和疗效监测

初始负荷剂量为第0、2周静脉输注300 mg;自首次用药开始,每6个月静脉输注300 mg。分层分析结果显示,该方案的疗效不受患者的病程、体质量、疾病程度和既往治疗方法影响 [ 27 , 28 ] 。为减少输注反应的频率和程度,每次用药前需要联合使用预防输注反应药物,包括糖皮质激素、抗组胺药(如苯海拉明)和解热镇痛药(如对乙酰氨基酚)。

推荐意见3:

1.伊奈利珠单抗治疗成人AQP-4抗体阳性NMOSD-ON具有明确的有效性和安全性,并可显著抑制复发,可作为长期免疫治疗的一线首选药物。

2.对于AQP-4抗体阴性NMOSD-ON,在传统治疗(如硫唑嘌呤、吗替麦考酚酯等)无效时,可在患者知情同意后,权衡利弊使用伊奈利珠单抗,作为预防复发的长期免疫治疗药物。

III. Monoclonal antibodies targeting the IL-6 receptor

(一)托珠单抗

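Tocilizumab is the first humanized mAb against the IL-6 receptor. By inhibiting IL-6, it acts by blocking processes such as T-cell activation, immunoglobulin secretion by plasma cells, and macrophage activity. Its use in DON is currently off-label. It is formulated for intravenous infusion.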

1.临床适用疾病类型

(1)NMOSD-ON:托珠单抗可通过多种途径影响NMOSD的疾病活动,减少AQP-4抗体产生,抑制促炎T细胞分化,并降低血-脑屏障的通透性 [ 29 , 30 , 31 ] 。多项研究结果表明,托珠单抗在预防NMOSD复发方面显示出良好的效果,使用托珠单抗治疗停止后无复发者的比例可达68%~76%,随访的年复发率可降低至0.2~0.3 [ 32 ] 。中国首个有关托珠单抗的研究纳入118例NMOSD患者,结果显示与硫唑嘌呤相比,托珠单抗可显著减少NMOSD复发,并改善EDSS评分,尤其对伴其他自身免疫性疾病的NMOSD易复发患者,其机制在于托珠单抗通过阻断IL-6信号通路,进而抑制了由IL-6介导的全身性及中枢神经系统的免疫炎性反应 [ 33 ] 。

(2)MOG-ON:研究结果表明,托珠单抗作为单药或联合用药治疗,均可有效降低MOGAD患者的复发率 [ 29 , 30 , 31 , 32 , 33 ] 。Ringelstein等 [ 30 ] 开展研究,共纳入MOGAD患者14例,使用托珠单抗治疗停止后,年复发率从1.75降至0,EDSS评分从2.75降至2.00,治疗期间60%患者未复发,且耐受性良好,因此托珠单抗治疗难治性MOGAD安全有效。对吗替麦考酚酯和RTX治疗失败的10例MOG-ON患者进行托珠单抗治疗,7例患者病情稳定 [ 34 ] ;托珠单抗对于其他免疫疗法治疗无效的复发性MOGAD,可能是一种有效的治疗选择 [ 34 , 35 ] 。

2.使用方法

托珠单抗的成人推荐剂量是8 mg/kg,用0.9%无菌生理盐水稀释至100 ml静脉输注,输注时间应大于1 h。输注前30 min肌肉注射苯海拉明20 mg,同时进行心电监护,检测心率、呼吸频率、血压等生命体征变化。每4周静脉输注1次。

出现肝酶水平异常,中性粒细胞和血小板计数降低时,可减小剂量至4 mg/kg;也可采用皮下注射方式给药,每周1次,162 mg/次。

推荐意见4:

1.建议托珠单抗可作为成人AQP-4抗体阳性NMOSD-ON的长期免疫治疗药物。

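2. For AQP-4 antibody-negative NMOSD-ON patients in whom conventional immunosuppressants have failed, tocilizumab may be tried to prevent relapse after the risks and benefits have been weighed and the patient has given informed consent.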

3.对于年龄≥12岁的青少年及成人MOG-ON患者,在其他免疫治疗无效并经综合考量利弊和患者知情同意后,可尝试使用托珠单抗预防复发。

(二)萨特利珠单抗

萨特利珠单抗是一种靶向IL-6受体的人源化IgG2的单抗,与托珠单抗不同,其利用新的抗体循环技术,经抗体工程技术修饰后,表现出酸碱度依赖性IL-6受体结合亲和力,且与未经修饰的IgG2抗体比较,其对新生儿Fc受体具有更强的结合力,这两点均有助于延长其在血浆中的保留时间。此外,萨特利珠单抗结合人Fcγ受体的亲和力与未经修饰的IgG2抗体相当或较低,使诱导抗体依赖性细胞介导的细胞毒性作用和补体依赖性细胞毒性作用(complement-dependent cytotoxicity,CDC)的可能性减小。萨特利珠单抗可通过与膜结合IL-6受体或可溶性IL-6受体结合,阻断IL-6信号传导,从而发挥治疗NMOSD的作用 [ 36 ] 。

1.临床适用疾病类型

(1)NMOSD-ON:截至2025年4月,萨特利珠单抗已在95个国家和地区获批,可单药或与传统免疫抑制剂联合用药,治疗年龄≥12岁的青少年及成人AQP-4抗体阳性NMOSD。2021年4月30日在中国获批用于临床,2023年12月正式进入国家医保目录。萨特利珠单抗在中国获批的适应证为年龄≥12岁的青少年及成人AQP-4抗体阳性NMOSD。

目前已完成两项针对NMOSD的全球多中心随机双盲安慰剂对照Ⅲ期临床试验,分别为SAkuraSky研究(NCT-02028884)和SAkuraStar研究(NCT02073279) [ 37 , 38 ] 。SAkuraSky研究主要探讨使用萨特利珠单抗联合基线治疗(原有的稳定免疫抑制剂治疗)治疗NMOSD的有效性,共纳入83例患者,年龄12~74岁,按1∶1随机分配至萨特利珠单抗组( n=41)和安慰剂组( n=42),AQP-4抗体阳性NMOSD患者55例,治疗48周和92周时,萨特利珠单抗组AQP-4抗体阳性NMOSD患者的无复发率均为92%;此外,对于体质量为40 kg以上、年龄≥12岁的青少年NMOSD患者,同等药物剂量治疗的有效性确切,安全性与成人患者相似。SAkuraStar研究主要探讨萨特利珠单抗作为单药治疗NMOSD的有效性,共纳入95例患者,年龄18~74岁,按2∶1随机分配为萨特利珠单抗组( n=63)和安慰剂组( n=32),AQP-4抗体阳性NMOSD患者64例。治疗48周,萨特利珠单抗组AQP-4抗体阳性NMOSD患者的无复发率为83%。这两项研究的双盲期(医患双盲)结束后,患者进入名为SAkuraMoon的开放扩展期研究 [ 39 ] ,纳入111例继续使用萨特利珠单抗联合或不联合基线治疗的NMOSD患者,其中包括36例亚洲地区患者。所有患者接受萨特利珠单抗治疗的中位时间为5.9年,最长达8.9年。在第288周进行疗效分析,发现91%患者无明显复发。

目前暂无萨特利珠单抗治疗AQP-4抗体阴性NMOSD-ON的大规模临床对照研究。在上述两项研究中,较少例数的研究结果显示,萨特利珠单抗可用于治疗AQP-4抗体阴性复发NMOSD。

(2)MOG-ON:萨特利珠单抗暂未获批用于临床治疗MOGAD,但在临床实践中使用萨特利珠单抗探索性治疗MOGAD,取得良好疗效 [ 40 ] ;同时,萨特利珠单抗治疗MOGAD的Ⅲ期随机双盲、安慰剂对照的全球多中心研究在进行中;针对MOGAD患者,在尝试其他经验性治疗无效,并经综合考量利弊及患者知情同意后,可尝试使用萨特利珠单抗治疗。

2.使用方法

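The recommended loading regimen for satralizumab is 120 mg by subcutaneous injection at weeks 0, 2, and 4 (the first 3 doses), followed by a maintenance dose of 120 mg every 4 weeks.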
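The loading-then-maintenance pattern above (weeks 0, 2, and 4, then every 4 weeks) can be expanded into a list of injection weeks. The sketch below is illustrative only; the function name and the 16-week horizon are assumptions chosen for the example.

```python
from typing import List

LOADING_WEEKS = [0, 2, 4]        # first 3 subcutaneous doses
MAINTENANCE_INTERVAL_WEEKS = 4   # every 4 weeks after the loading doses
DOSE_MG = 120                    # same dose for loading and maintenance


def satralizumab_injection_weeks(horizon_weeks: int) -> List[int]:
    """List the week numbers with a scheduled injection up to the horizon."""
    weeks = [w for w in LOADING_WEEKS if w <= horizon_weeks]
    next_week = LOADING_WEEKS[-1] + MAINTENANCE_INTERVAL_WEEKS
    while next_week <= horizon_weeks:
        weeks.append(next_week)
        next_week += MAINTENANCE_INTERVAL_WEEKS
    return weeks


schedule = satralizumab_injection_weeks(16)
total_mg = DOSE_MG * len(schedule)
```

Over a 16-week horizon this gives six injections, at weeks 0, 2, 4, 8, 12, and 16.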

推荐意见5:

1.对于年龄≥12岁的青少年及成人AQP-4抗体阳性NMOSD-ON患者,推荐萨特利珠单抗作为长期免疫治疗的一线首选药物。

2.对于AQP-4抗体阴性NMOSD-ON患者,在传统治疗失败时,经综合考量利弊及患者知情同意后,可尝试使用萨特利珠单抗。

3.对于年龄≥12岁的青少年及成人AQP-4抗体阳性MOG-ON患者,在传统治疗无效时,经综合考量利弊及患者知情同意后,可尝试使用萨特利珠单抗。

IV. Eculizumab

依库珠单抗是一种重组人源化的IgG2/4单抗,为终端补体蛋白C5抑制剂,可防止其分裂成C5a和C5b片段参与补体级联反应,从而阻断炎性反应和膜攻击复合体形成,减少星形胶质细胞破坏和神经元损伤 [ 41 ] 。

1.临床适用疾病类型

依库珠单抗是中国首个也是唯一获批用于治疗NMOSD的补体抑制剂。2023年10月18日获批用于治疗成人AQP-4抗体阳性NMOSD。NMOSD作为依库珠单抗适应证,尚未纳入国家医保目录。

依库珠单抗治疗NMOSD的Ⅲ期临床试验(Prevent研究)结果显示,第48周治疗组98%患者无复发,而安慰剂组为63%患者无复发,相对风险降低94.2%(危险比=0.058,95%置信区间:0.017~0.197; P<0.000 1) [ 42 ] ;治疗获益持续至第144周,在为期144周的研究内,96%患者未复发,安慰剂组患者的无复发比例仅为45% [ 43 ] 。

2.使用方法

每周静脉输注900 mg,共4周;以后每2周静脉输注1 200 mg。每次输注时间控制在25~45 min。

推荐意见6:

对于成人AQP-4抗体阳性NMOSD-ON,推荐依库珠单抗作为维持期长期免疫治疗的一线首选药物。

V. Selecting and switching targeted biologics

(一)启动靶向生物制剂治疗的时机

NMOSD-ON每次发作均可造成不可逆性视觉功能损伤,其主要残障原因为复发后视觉功能缺损累积。NMOSD-ON在诊断后、首次发作或使用其他药物治疗失败再次发作时,应尽早开始免疫抑制或靶向治疗,并坚持长程治疗 [ 1 , 6 , 7 , 44 ] 。

对于AQP-4抗体阳性NMOSD-ON患者,应尽早首选一线靶向生物制剂开始维持治疗。可在急性期静脉输注甲泼尼龙(intravenous methylprednisolone,IVMP)逐步减量至口服后,开始使用靶向生物制剂 [ 6 , 44 ] 。小样本临床研究结果显示,对于NMOSD急性发作2周内患者,与单独采用IVMP治疗比较,使用IL-6受体抑制剂联合IVMP治疗,可显著降低患者的复发风险,并显著延缓患者的残疾进展,改善运动功能和生活质量 [ 45 ] 。研究者还发现,对于NMOSD患者,与最初采用其他经验性疾病修饰治疗(糖皮质激素、硫唑嘌呤、吗替麦考酚酯,他克莫司或RTX)并在复发后使用IL-6受体单抗治疗比较,在首次发作时即使用IL-6受体单抗治疗可显著降低患者的残疾程度 [ 46 ] 。小样本临床研究结果显示,对于NMOSD急性期患者,B淋巴细胞耗竭剂可联合IVMP治疗,但需要进一步研究联合用药的安全性。对于对急性期治疗反应不佳患者,如IVMP、血浆置换和静脉输注免疫球蛋白(intravenous immunoglobulin,IVIG),在权衡利弊并患者知情同意后,可尝试将B淋巴细胞耗竭剂或萨特利珠单抗作为急性期一线抢救治疗之一。

对于复发性MS-ON,在IVMP逐步减量至口服后,可开始使用奥法妥木单抗治疗。对于复发性MOG-ON,在IVMP逐步减量至口服后,可开始使用RTX治疗;对于复发性难治MOG-ON,可选择萨特利珠单抗或托珠单抗。

(二)靶向生物制剂单药和联合用药治疗

长期治疗NMOSD-ON应首选单药治疗。当病情复发或控制不理想时,可考虑采用联合用药治疗 [ 6 , 44 ] 。目前尚缺乏与B淋巴细胞耗竭剂联合用药的临床证据。萨特利珠单抗单药或联合传统免疫抑制剂治疗AQP-4抗体阳性NMOSD的获益显著。SAkuraStar研究的结果显示,萨特利珠单抗单药治疗AQP-4抗体阳性NMOSD 48周时的无复发率为83% [ 38 ] 。SAkuraSky研究的结果显示,对于AQP-4抗体阳性NMOSD,使用萨特利珠单抗联合基线治疗,48和92周时的无复发率均为92% [ 38 , 39 ] 。

Prevent研究结果显示,对于AQP-4抗体阳性NMOSD,依库珠单抗无论是否联合传统免疫抑制剂治疗,均可显著降低复发风险;治疗48周时,98%患者无复发 [ 42 ] 。

萨特利珠单抗和依库珠单抗单药和联合传统免疫抑制剂治疗,均有RCT和真实世界研究的循证证据。临床基于需求,使用靶向生物制剂联合患者已采用的传统免疫抑制剂治疗方案,可降低疾病复发的可能性,且不增加发生不良反应的风险,但应充分考虑使用免疫抑制剂的短期和长期安全性和耐受性。采用联合用药治疗期间,传统免疫抑制剂应根据靶向生物制剂作用的起效时间逐步缓慢减量。虽然单药治疗是首选,但是关于NMOSD治疗的随机对照试验结果表明,若患者已使用传统免疫抑制剂治疗,萨特利珠单抗或依库珠单抗可与传统免疫抑制剂联合用药治疗,但仍需要证据,尤其随机对照试验结果证实靶向生物制剂联合传统免疫抑制剂治疗的长期获益情况,并进一步评估联合用药的风险。

推荐意见7:

1.NMOSD-ON在诊断后、首次发作或使用传统免疫抑制剂治疗失败再次发作时,应尽早开始靶向治疗,首选一线靶向生物制剂,如萨特利珠单抗、伊奈利珠单抗和依库珠单抗。

2.对于复发性MS-ON或临床孤立综合征,奥法妥木单抗可作为一线药物。对于其他类型复发性DON,因属于超适应证用药,综合利弊及患者知情同意后,可尝试使用奥法妥木单抗。

3.建议在IVMP逐步减量至口服后,开始使用靶向生物制剂,并坚持长程治疗。

4.对于NMOSD-ON,应首选单药治疗。当病情复发或控制不理想时,可采用萨特利珠单抗或依库珠单抗联合传统免疫抑制剂治疗。MOG-ON应首选单药治疗,当病情控制不理想时,可采用萨特利珠单抗或托珠单抗联合传统免疫抑制剂治疗。

(三)靶向生物制剂及其与其他免疫抑制剂的更换

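For DON patients receiving off-label conventional immunosuppressants (azathioprine, mycophenolate mofetil, etc.) or off-label targeted biologics (RTX or tocilizumab) who have had no relapse and no tolerability problems, a switch to satralizumab, inebilizumab, eculizumab, or ofatumumab may be considered with caution.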

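For patients newly diagnosed with DON, the choice among satralizumab, inebilizumab, and eculizumab depends on the type of drug, dosing frequency, route of administration, comorbidities, and the patient's preference regarding potential safety risks. NMOSD-ON patients previously treated with conventional immunosuppressants (such as azathioprine or mycophenolate mofetil) or RTX who relapse during treatment, develop treatment-related adverse reactions, or wish to change therapy can switch to satralizumab, inebilizumab, or eculizumab [ 6 ]. Adolescents aged ≥12 years with AQP-4 antibody-positive NMOSD-ON should be treated with satralizumab. A real-world study showed that NMOSD patients who switched to satralizumab because RTX was ineffective and/or poorly tolerated (90% on monotherapy, 10% in combination with conventional immunosuppressants) were all relapse-free after 24 months of treatment, with good tolerability [ 47 ].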

从使用传统免疫抑制剂治疗更换为使用靶向生物制剂治疗的患者,不需要进行药物洗脱;应根据更换的靶向生物制剂类型,缓慢减少传统免疫抑制剂的用药量;两类药物重叠治疗时间应至少3个月。考虑使用与治疗失败药物机制类似的药物可能同样治疗无效,可优先考虑更换与治疗失败靶向生物制剂作用机制不同的靶向生物制剂。例如,萨特利珠单抗和托珠单抗均为IL-6受体靶向抗体,使用萨特利珠单抗治疗失败的患者,使用托珠单抗可能不会得到良好的治疗结果。RTX的作用机制为依靠激活CDC杀伤B淋巴细胞,而依库珠单抗依靠抑制CDC起效,基于RTX与依库珠单抗的终端补体蛋白C5抑制机制冲突,对于使用B淋巴细胞耗竭剂RTX治疗复发患者,可优先选择更换使用萨特利珠单抗治疗 [ 41 ] 。使用RTX治疗的NMOSD患者,若更换使用依库珠单抗治疗,药物洗脱时间至少3个月 [ 41 ] 。

对于具有共病的NMOSD-ON患者,选择治疗药物应考虑合并的其他自身免疫性疾病。建议合并其他IL-6介导或细胞介导自身免疫性疾病的NMOSD-ON患者,可考虑使用萨特利珠单抗或伊奈利珠单抗治疗。例如,萨特利珠单抗可作为合并类风湿关节炎患者的治疗药物。研究结果显示,使用萨特利珠单抗或依库珠单抗治疗停止后,可继续使用传统免疫抑制剂治疗,而不存在明显的安全问题,但仍需要进一步积累长期观察证据。在缺少长期观察证据的情况下,建议在使用靶向生物制剂治疗期间逐步减少传统免疫抑制剂的药量 [ 6 ] 。

对于AQP-4抗体阳性NMOSD-ON患者,建议使用靶向生物制剂进行长疗程治疗。目前尚无证据支持AQP-4抗体阳性NMOSD患者可停止使用免疫抑制剂治疗;对于治疗期间病情稳定且AQP-4抗体转为阴性的患者,目前尚无可停止使用免疫抑制剂治疗的证据 [ 6 , 36 , 48 ] 。

更换使用靶向生物制剂治疗应秉承慎重、科学、合理的原则,综合考虑患者的需求、药物的疗效和安全性、生活质量以及给药方案、途径、费用等多方面因素。只有在使用靶向生物制剂治疗无法控制病情或发生不可耐受的不良反应时,才考虑更换使用其他靶向生物制剂或传统免疫抑制剂治疗。

推荐意见8:

1.使用传统免疫抑制剂(如硫唑嘌呤、吗替麦考酚酯等)、RTX治疗失败的AQP-4抗体阳性NMOSD-ON患者,可选择使用萨特利珠单抗、伊奈利珠单抗或依库珠单抗治疗;应用这3种单抗中的1种进行治疗并达到药效作用时间,若治疗失败可更换另一种单抗替代治疗;考虑这3种单抗具有不同机制通路,可在使用1种单抗治疗停止后立即开始使用新的单抗治疗。建议对于年龄≥12岁的青少年AQP-4抗体阳性NMOSD-ON患者,应使用萨特利珠单抗治疗。

2.使用靶向生物制剂治疗DON失败时,可优先考虑更换作用机制不同的其他靶向生物制剂。

3.对于具有共病的NMOSD-ON,选择治疗药物应考虑合并的其他自身免疫性疾病。

VI. Safety monitoring and management strategies for the clinical use of targeted biologics

在使用靶向生物制剂治疗前,须对患者的感染相关风险进行全面评估,并在治疗期间进行持续随访。所有患者在静脉输注靶向生物制剂前,应完善胸部CT、血液常规及尿液常规检查,并进行感染相关筛查,包括乙型肝炎病毒(hepatitis B virus,HBV)、结核病筛查 [ 6 , 48 ] 。治疗前定量检测血清免疫球蛋白水平,治疗中持续监测血清免疫球蛋白水平 [ 6 ] 。对于低血清免疫球蛋白水平(<500 mg/dl)患者,在使用靶向生物制剂(主要为靶向CD19和CD20单抗)治疗期间,可能需要进行IVIG治疗或预防性治疗,应征求免疫学专家意见。在开始治疗的1年内,每4周定期监测肝功能及中性粒细胞水平。

按照国家免疫规划,DON患者应在使用新的靶向生物制剂治疗前至少4周及时接种减毒活疫苗或活疫苗,至少2周接种灭活疫苗。依库珠单抗具有增加脑膜炎球菌和包裹性细菌感染的风险,建议患者在接受依库珠单抗治疗前至少2周接种脑膜炎球菌疫苗 [ 48 ] 。不建议在使用靶向生物制剂治疗期间或治疗停止后至B淋巴细胞水平恢复正常前,接种减毒活疫苗或活疫苗;若需要接种灭活疫苗,建议在使用靶向生物制剂治疗停止后至少12周。对于疾病活动性高且迫切需要使用靶向生物制剂治疗的DON患者,不应因等待接种疫苗而推迟治疗的开始 [ 6 ] 。

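Risk factors for Pneumocystis carinii pneumonia include concomitant acquired immunodeficiency syndrome, a CD4⁺ T-cell count below 200 cells/mm³, age ≥65 years, concomitant diabetes, use of ≥2 immunosuppressants, and a glucocorticoid dose ≥20 mg/d. For patients with multiple risk factors, prophylaxis may be considered during targeted biologic therapy [ 48 ]. Trimethoprim-sulfamethoxazole is the preferred prophylactic agent. The duration of prophylaxis depends on how long immune function remains suppressed and usually requires multidisciplinary assessment, including infectious disease, pulmonology, and rheumatology, to develop an individualized management strategy.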

(一)乙型肝炎

在使用靶向生物制剂治疗前,应进行乙型肝炎筛查,常规检查HBV表面抗原和核心抗体。靶向生物制剂禁用于HBV表面抗原和核心抗体阳性的乙型肝炎患者。对于HBV表面抗原阴性而核心抗体阳性的乙型肝炎患者以及HBV表面抗原阳性的病毒携带者,须在使用靶向生物制剂治疗前和治疗期间咨询肝病专科医师,尽早在使用靶向生物制剂治疗前(通常为1周)或最迟为开始使用靶向生物制剂治疗时,使用核苷(酸)类似物进行预防性抗HBV治疗,恩替卡韦、富马酸替诺福韦酯、富马酸丙酚替诺福韦均可作为首选药物 [ 6 ] 。

使用靶向生物制剂治疗期间应每1~3个月检查1次肝功能、HBV表面抗原、HBV DNA等。若使用靶向生物制剂治疗发生不良反应,立即停止治疗。核苷(酸)类似物治疗需要持续至使用靶向生物制剂治疗结束后至少18个月。考虑中国为HBV感染高发区域,推荐在使用靶向生物制剂治疗前,常规检查HBV表面抗原和核心抗体,必要时启动预防性抗HBV治疗。

(二)结核病

在使用靶向生物制剂治疗前,应进行干扰素γ释放试验(如结核感染T淋巴细胞斑点试验)或结核菌素皮肤试验(常用试剂为纯化蛋白衍生物)检查结核分枝杆菌感染情况。若检查结果为阳性,需要进一步评估疾病的活动性。靶向生物制剂禁用于活动性结核病患者和未经治疗的潜伏性结核分枝杆菌感染者。

对于活动性结核病患者,应转诊至相关专科进行治疗。对于无活动性结核病证据的潜伏性结核分枝杆菌感染者,建议在使用靶向生物制剂治疗前1个月或最迟为开始使用靶向生物制剂治疗时,由专科医师根据患者具体情况,进行预防性抗结核治疗 [ 40 ] 。

使用靶向生物制剂治疗期间应每3~6个月观察活动性结核病的症状并进行相关检查。对于发生活动性肺结核者,应停止使用靶向生物制剂治疗,转诊至相关专科进行结核病治疗。

推荐意见9:

1.在使用靶向生物制剂前,应常规进行HBV、丙型肝炎病毒、结核分枝杆菌检测,并评估活动性。若存在活动性感染,需要进行抗病毒或抗结核治疗,须在感染得到控制后开始使用靶向生物制剂治疗,并根据相关专科医师建议,定期监测感染情况。

2.在使用靶向生物制剂治疗期间,不建议接种减毒活疫苗或活疫苗,因此应在使用靶向生物制剂治疗前至少4周,根据免疫预防接种相关指南,完成活疫苗或减毒活疫苗接种;在使用靶向生物制剂治疗前至少2周接种灭活疫苗。建议患者在首次使用依库珠单抗治疗前至少2周,接种脑膜炎球菌疫苗。

3.开始使用靶向生物制剂治疗前应检测血清免疫球蛋白。对于低血清免疫球蛋白水平(<500 mg/dl)患者,在使用靶向CD19和CD20单抗治疗期间,需要进行IVIG治疗或预防性治疗。

(三)常见治疗不良反应

使用RTX治疗最常见的不良反应为静脉输注相关反应,表现为发热、寒战、头痛、恶心、喉部水肿等症状或过敏反应,多数为轻至中度,很少导致治疗中断。其他不良反应包括感染、白细胞及中性粒细胞减少、低免疫球蛋白血症、肝功能异常、肿瘤及死亡 [ 2 , 3 , 14 ] 。

使用奥法妥木单抗治疗的常见不良反应为上呼吸道感染、局部输注部位反应。在首次静脉输注后,全身不良反应的发生率最高,包括发热、头痛、肌痛、疲乏。最常见中断治疗的原因是免疫球蛋白IgM水平降低 [ 21 , 23 ] 。

使用伊奈利珠单抗治疗的不良反应包括静脉输注相关反应、尿路感染、鼻咽炎、上呼吸道感染、关节痛、背痛、头痛、易跌倒、感觉减退、膀胱炎、眼痛等,并可导致进行性和长期低免疫球蛋白血症,表现为总免疫球蛋白水平下降或单种免疫球蛋白(IgG或IgM)水平下降,中性粒细胞降低 [ 25 , 26 ] 。

在使用靶向CD20单抗治疗过程中,对于出现危及生命的静脉输注相关反应者,应立即和永久停止治疗,并给予支持性治疗;对于出现非严重静脉输注相关反应(如胸闷、喉部刺激或荨麻疹)者,可暂停静脉输注、降低静脉输注速率和(或)进行对症治疗(如口服苯海拉明、静脉输注糖皮质激素治疗荨麻疹) [ 15 ] 。

使用托珠单抗治疗最常见的不良反应为静脉输注相关反应,包括静脉输注期间血压升高,静脉输注后24 h内头痛和出现皮疹。其他不良反应包括上呼吸道感染、尿路感染、高胆固醇血症等。使用托珠单抗治疗患者多数联合传统免疫抑制剂治疗,发生严重感染的风险升高,在使用托珠单抗治疗前应仔细评估使用风险和利益,对于在使用托珠单抗治疗中发生严重感染者,应中断治疗,直至感染得到控制 [ 33 ] 。

患者对萨特利珠单抗治疗的耐受性较好,多数不良反应的程度为轻至中度,常见上呼吸道感染、尿路感染、中性粒细胞异常等。此外,使用萨特利珠单抗单药治疗的安全性与其联合传统免疫抑制剂治疗的安全性相似 [ 36 , 38 ] 。

使用依库珠单抗治疗可增加脑膜炎球菌和包裹性细菌感染的风险,常见不良反应包括上呼吸道感染、头痛、鼻咽炎等 [ 42 , 43 ] 。

(四)治疗期间检测

在使用靶向生物制剂治疗期间,至少每年检查1次HBV、丙型肝炎病毒、结核分枝杆菌感染情况;每6个月检查1次血清免疫球蛋白水平。对于存在高感染风险患者(如合并其他疾病、高残疾状态、青少年、老年、妊娠期妇女或免疫功能抑制人群),建议将上述感染相关检查的频率提升至每年至少2次。

在使用靶向生物制剂治疗期间,常规评估粒细胞、肝转氨酶和血清胆红素水平。在治疗期间的前3个月,每4周检查1次丙氨酸氨基转移酶(alanine aminotransferase,ALT)和天门冬氨酸氨基转移酶(aspartate aminotransferase,AST)水平,之后每3个月检查1次。若ALT或AST水平升高超过正常值上限5倍,并伴有胆红素水平升高,则应立即停止使用靶向生物制剂治疗,且不建议再次使用靶向生物制剂治疗。
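The stopping rule above combines two conditions: ALT or AST above 5 times the upper limit of normal, together with an elevated bilirubin level. A minimal sketch of that logic follows; the function name is hypothetical, and the upper limits of normal are laboratory-specific inputs (the value of 40 U/L in the usage note is an assumption for illustration only).

```python
def should_stop_for_liver_toxicity(alt: float, ast: float,
                                   alt_uln: float, ast_uln: float,
                                   bilirubin_elevated: bool) -> bool:
    """True when ALT or AST exceeds 5x its upper limit of normal (ULN)
    and bilirubin is also elevated, per the rule stated in the text."""
    transaminase_over_5x = alt > 5 * alt_uln or ast > 5 * ast_uln
    return transaminase_over_5x and bilirubin_elevated
```

With an assumed upper limit of 40 U/L for both enzymes, `should_stop_for_liver_toxicity(250, 30, 40, 40, True)` is true, whereas the same enzyme values with normal bilirubin return false because the rule requires both conditions.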

使用靶向生物制剂治疗可降低AQP-4抗体滴度。虽然短暂性和持续性AQP-4抗体阴性的意义尚不清楚,但在可能的情况下,建议定期(每6个月)检查AQP-4抗体水平,以进一步指导后续治疗。

使用靶向生物制剂治疗的女性患者易发生尿路感染。处理方法与其他普通患者相同。对于反复发作的尿路感染,可在发作缓解期口服低剂量、长疗程的抗菌药进行预防治疗 [ 42 ] 。

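The risk of progressive multifocal leukoencephalopathy (PML) with anti-CD20 mAb monotherapy is extremely low. When clinical presentation and imaging raise suspicion of PML, cerebrospinal fluid should be tested for John Cunningham (JC) virus DNA to support the diagnosis. For patients on long-term anti-CD20 mAb therapy, monitoring the peripheral blood CD4⁺ T-cell count is recommended (the risk of PML increases below 200 cells/μL) [ 15 ]. Studies have found no cases of PML among patients treated with inebilizumab, whereas RTX carries a risk of PML; they also indicate that cerebrospinal fluid JC virus PCR helps establish the diagnosis when clinical and imaging findings raise suspicion of PML. In active PML, anti-CD20 mAb therapy should be interrupted immediately.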

推荐意见10:

1.在使用靶向生物制剂治疗前以及治疗期间,需要常规每年检查HBV、丙型肝炎病毒、结核分枝杆菌感染情况;每6个月检查1次血清免疫球蛋白水平。

2.在使用靶向生物制剂治疗期间,常规评估粒细胞、肝转氨酶和血清胆红素水平。在治疗期间的前3个月,每4周检查1次ALT和AST水平,之后每3个月检查1次。若ALT或AST水平升高超过正常值上限5倍,并伴有胆红素水平升高,则应立即停止且不再使用靶向生物制剂治疗。

3.使用靶向CD20单抗治疗具有发生PML的风险,可根据临床表现和脑部MRI检查结果进行诊断。对于活动性PML,应立即中断使用靶向CD20单抗治疗。

VII. Targeted biologic therapy during pregnancy and lactation

DON主要累及年轻人,尤其育龄期女性。研究结果显示,妊娠后DON的复发率显著增高 [ 49 ] 。目前尚缺乏充足的关于妊娠期和产后使用靶向生物制剂治疗的临床循证数据。已有研究结果显示,母体在妊娠期使用B淋巴细胞耗竭剂治疗,出生的婴儿可发生一过性外周血B淋巴细胞耗竭或淋巴细胞减少;妊娠期静脉输注RTX可能增加流产和早产的风险 [ 15 ] 。目前尚无关于妊娠期使用奥法妥木单抗治疗相关发育风险的充分证据;动物实验结果显示,奥法妥木单抗可能穿过胎盘屏障导致胎儿B淋巴细胞耗竭。人乳汁中含有IgG,目前尚不明确奥法妥木单抗导致婴儿B淋巴细胞耗竭的可能性 [ 6 , 15 ] 。妊娠晚期使用RTX治疗和伊奈利珠单抗治疗,均可能导致胎儿出现短暂的血液学指标异常 [ 28 ] ,因此不推荐或应谨慎使用。

研究结果显示,NMOSD患者在妊娠期及围产期使用萨特利珠单抗治疗,分娩后脐带血、分娩后5和26 d母乳及婴儿血清中的萨特利珠单抗浓度均低于可检测值下限(<0.200 μg/ml),提示萨特利珠单抗经胎盘转移率低,常规治疗剂量对婴儿的影响不明显。基于萨特利珠单抗与托珠单抗的药物机制具有相似性,使用托珠单抗可治疗妊娠期类风湿关节炎的证据可能表明,萨特利珠单抗可在妊娠期使用 [ 6 ] 。有关妊娠期使用依库珠单抗治疗的临床研究结果有限 [ 50 ] 。

有生育能力的女性患者在使用靶向B淋巴细胞单抗治疗期间和停止治疗后6个月内应采取有效的避孕措施,男性患者可在其伴侣的怀孕计划期间继续使用伊奈利珠单抗治疗。在使用伊奈利珠单抗治疗中是否可进行母乳喂养,目前证据尚不足。靶向CD20单抗不在母乳中积累;依据使用靶向CD20单抗治疗的临床经验,对于具有紧急治疗需求和强烈母乳喂养需求的患者,在权衡利弊并患者知情同意后,可在使用靶向CD20单抗治疗的同时,尝试母乳喂养。

推荐意见11:

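Data on the use of targeted biologics for DON during pregnancy and lactation are limited. Satralizumab may be used in pregnant or breastfeeding NMOSD-ON patients only when the benefits outweigh the risks and the patient has given informed consent.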

VIII. Summary and outlook

本共识的内容仅代表参与制订的专家针对DON靶向生物制剂治疗的指导意见,供临床医师在工作中参考。本共识所提供的推荐意见并非强制性意见,与本共识不一致的作法并不意味不当或错误。治疗DON的靶向生物制剂发展迅速,有关中国患者人群应用靶向生物制剂治疗DON的临床研究仍在进行中,有望深入探讨靶向生物制剂在不同人群中的疗效差异及其与遗传等多方面因素的相关性。随着中国临床研究数据不断扩大和更新,临床经验不断积累,本共识有必要定期进行修订和更新。

值得关注的是,任何药物治疗方案均应在遵循个体差异的基础上实现个性化制订,这将成为推动医学发展的重要方向和实践目标。在临床工作中严格把握创新药物的适应证,使用前对患者进行仔细评估,并合理规范用药;在充分考虑药物疗效及安全性的前提下,结合国情以及患者的经济承受能力等因素,综合判断和选择用药策略,既可保障疗效,又可实现费用的合理化,从而获得最佳性价比效果。相信DON患者将会获得更为高效、安全的治疗。

形成共识意见的专家组成员:

中华医学会眼科学分会神经眼科学组

魏世辉 解放军总医院眼科医学部(组长)

钟 勇 中国医学科学院 北京协和医学院 北京协和医院眼科(副组长)

姜利斌 首都医科大学附属北京同仁医院北京同仁眼科中心(副组长)

(以下委员按姓氏拼音排序)

岑令平 汕头大学·香港中文大学联合汕头国际眼科中心(现在广东医科大学眼视光学系)

陈 洁 温州医科大学附属眼视光医院

陈长征 武汉大学人民医院眼科

范 珂 河南省人民医院 河南省立眼科医院

付 晶 首都医科大学附属北京同仁医院北京同仁眼科中心

宫媛媛 上海交通大学医学院附属第一人民医院眼科

韩 梅 天津市眼科医院

黄小勇 陆军军医大学西南医院全军眼科医学专科中心

纪淑兴 陆军特色医学中心(大坪医院)眼科

江 冰 中南大学湘雅二医院眼科

李宏武 大连医科大学附属第二医院眼科

李晓明 长春中医药大学附属医院眼科

李志清 天津医科大学眼科医院

卢 艳 首都医科大学附属北京世纪坛医院眼科

陆 方 四川大学华西医院眼科

陆培荣 苏州大学附属第一医院眼科

马 嘉 昆明医科大学第一附属医院眼科

毛俊峰 中南大学湘雅医院眼科

潘雪梅 山东中医药大学附属眼科医院

邱怀雨 首都医科大学附属北京朝阳医院眼科(现在中国康复研究中心视障康复科)

施 维 首都医科大学附属北京儿童医院眼科

石 璇 北京大学人民医院眼科

宋 鄂 苏州大学附属理想眼科医院

孙 岩 沈阳何氏眼科医院

孙传宾 浙江大学医学院附属第二医院眼科中心(现在温州医科大学附属眼视光医院杭州院区)

孙艳红 北京中医药大学东方医院眼科

王 敏 复旦大学附属眼耳鼻喉科医院眼科

王 影 中国中医科学院眼科医院

王欣玲 中国医科大学附属第四医院眼科

王艳玲 首都医科大学附属北京友谊医院眼科

肖彩雯 上海交通大学医学院附属第九人民医院眼科

徐 梅 重庆医科大学附属第一医院眼科

徐全刚 解放军总医院眼科医学部

于金国 天津医科大学总医院眼科

张丽琼 哈尔滨医科大学附属第一医院眼科

张文芳 兰州大学第二医院眼科

张秀兰 中山大学中山眼科中心

钟敬祥 暨南大学附属第一医院眼科

中国研究型医院学会神经眼科专业委员会

魏世辉 解放军总医院眼科医学部(主任委员)

钟 勇 中国医学科学院 北京协和医学院 北京协和医院眼科(副主任委员)

姜利斌 首都医科大学附属北京同仁医院北京同仁眼科中心(副主任委员)

陈长征 武汉大学人民医院眼科(副主任委员)

王 敏 复旦大学附属眼耳鼻喉科医院眼科(副主任委员)

张文芳 兰州大学第二医院眼科(副主任委员)

徐全刚 解放军总医院眼科医学部(副主任委员)

黄厚斌 解放军总医院眼科医学部(副主任委员)

李才锐 大理州人民医院眼科(副主任委员)

(以下委员按姓氏拼音排序)

岑令平 广东医科大学眼视光学系

陈 洁 温州医科大学附属眼视光医院

陈梅珠 解放军联勤保障部队第九〇〇医院眼科

范 珂 河南省人民医院 河南省立眼科医院

付 晶 首都医科大学附属北京同仁医院北京同仁眼科中心

宫媛媛 上海交通大学医学院附属第一人民医院眼科

何 宇 成都市第一人民医院眼科

侯豹可 解放军总医院眼科医学部

江 冰 中南大学湘雅二医院眼科

荆 京 首都医科大学附属北京天坛医院眼科

雷 涛 西安市人民医院(西安市第四医院)眼科

李宏武 大连医科大学附属第二医院眼科

李红阳 首都医科大学附属北京友谊医院眼科

李晓明 长春中医药大学附属医院眼科

李志清 天津医科大学眼科医院

蔺雪梅 西安市第一医院眼科

刘 勤 甘肃省人民医院眼科

刘婷婷 山东第一医科大学附属眼科医院(山东省眼科医院)

刘现忠 河南省直第三人民医院眼科

卢 艳 首都医科大学附属北京世纪坛医院眼科

陆 方 四川大学华西医院眼科

陆培荣 苏州大学附属第一医院眼科

马 嘉 昆明医科大学第一附属医院眼科

马 瑾 中国医学科学院 北京协和医学院 北京协和医院眼科

毛俊峰 中南大学湘雅医院眼科

潘雪梅 山东中医药大学附属眼科医院

彭春霞 首都医科大学附属北京儿童医院眼科

邱 伟 中山大学附属第三医院眼科

邱怀雨 中国康复研究中心视障康复科

施 维 首都医科大学附属北京儿童医院眼科

石 璇 北京大学人民医院眼科

宋宏鲁 解放军联勤保障部队第九八〇医院眼科(整理资料)

孙 伟 长沙爱尔眼科医院

孙 岩 沈阳何氏眼科医院

孙传宾 温州医科大学附属眼视光医院杭州院区

孙艳红 北京中医药大学东方医院眼科

王 影 中国中医科学院眼科医院

王海燕 西安市人民医院(西安市第四医院)眼科

王欣玲 中国医科大学附属第四医院眼科

魏 菁 河南科技大学第一附属医院眼科

吴松笛 西安市第一医院神经内科和神经眼科

肖彩雯 上海交通大学医学院附属第九人民医院眼科

闫 焱 上海交通大学医学院附属仁济医院眼科

杨 晖 中山大学中山眼科中心

于金国 天津医科大学总医院眼科

张丽琼 哈尔滨医科大学附属第一医院眼科

张曙光 郑州市第二人民医院眼科

赵 颖 山东第一医科大学附属青岛眼科医院

周欢粉 解放军总医院眼科医学部(执笔)

周孝来 中山大学中山眼科中心

邹文军 无锡市第二人民医院眼科

邹燕红 清华大学第一附属医院眼科

参考文献略


原文可通过杂志官网或中华医学期刊网下载阅读


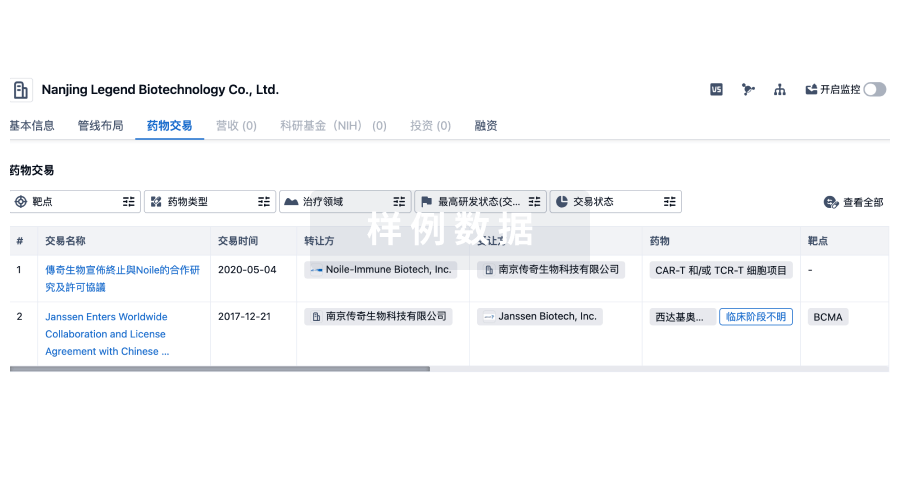

100 项与 汕头大学 相关的药物交易

登录后查看更多信息

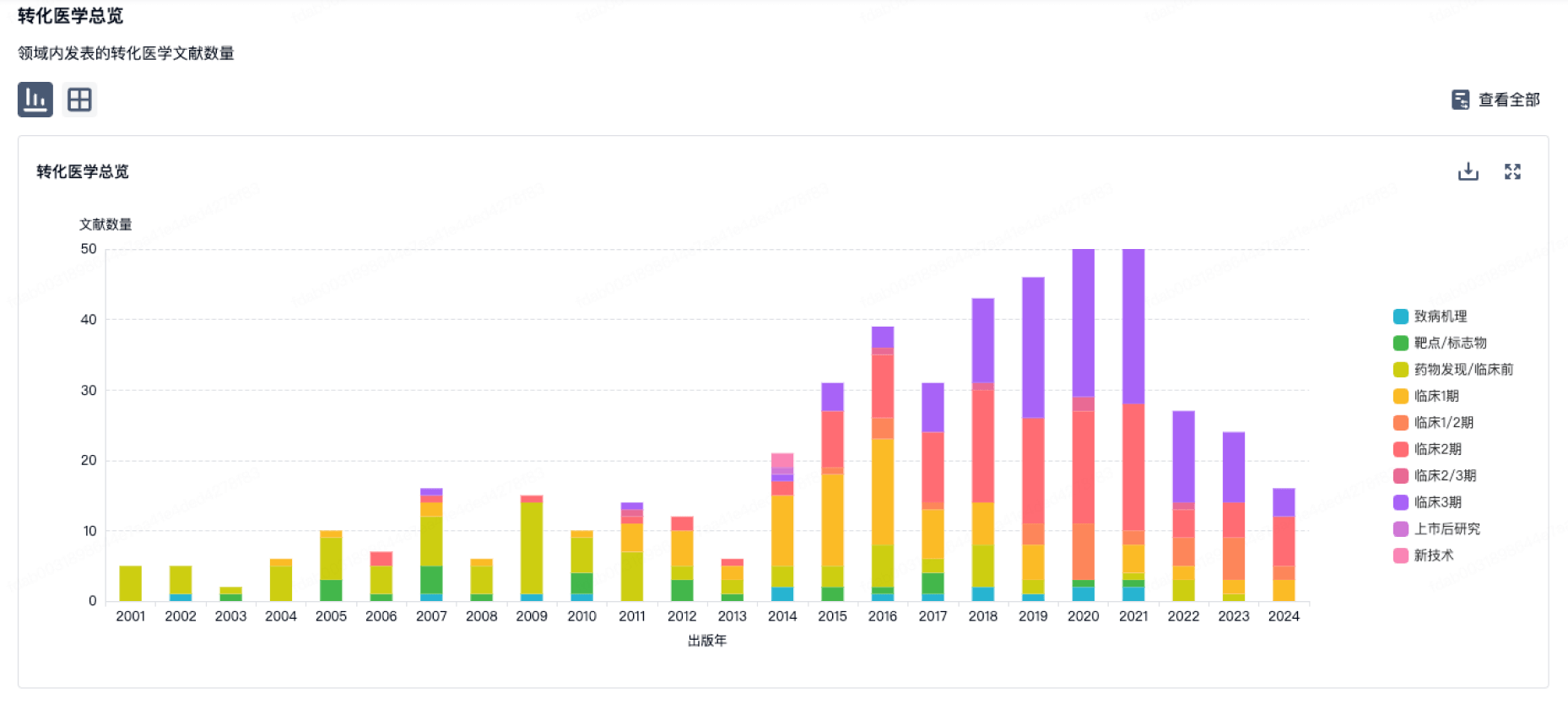

100 项与 汕头大学 相关的转化医学

登录后查看更多信息

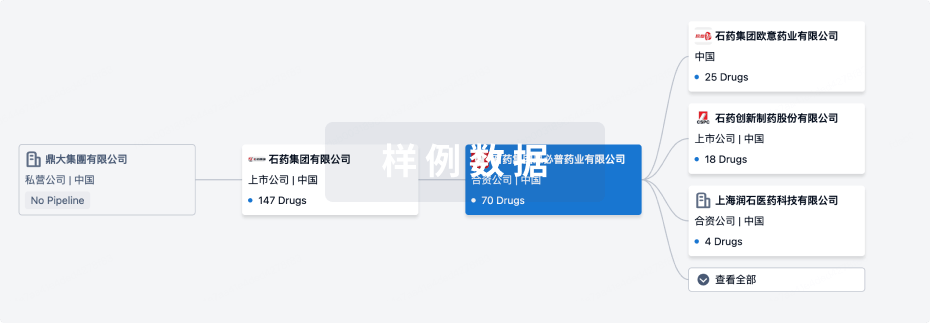

组织架构

使用我们的机构树数据加速您的研究。

登录

或

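Pipeline layout

Pipeline snapshot as of 2026-05-21

Drugs in the pipeline layout are counted for the current organization and its subsidiaries acting as the drug organization; early phase 1 is merged into phase 1, phase 1/2 into phase 2, and phase 2/3 into phase 3.

Preclinical

10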

登录后查看更多信息

当前项目

登录后查看更多信息

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

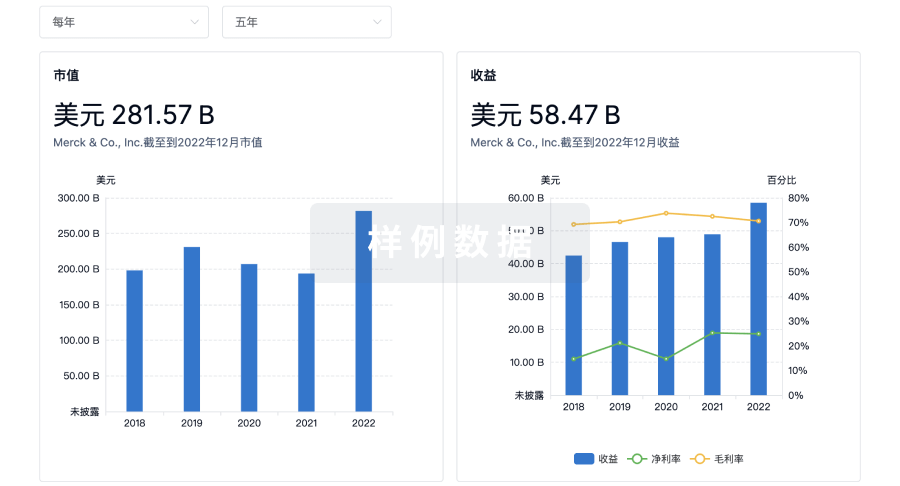

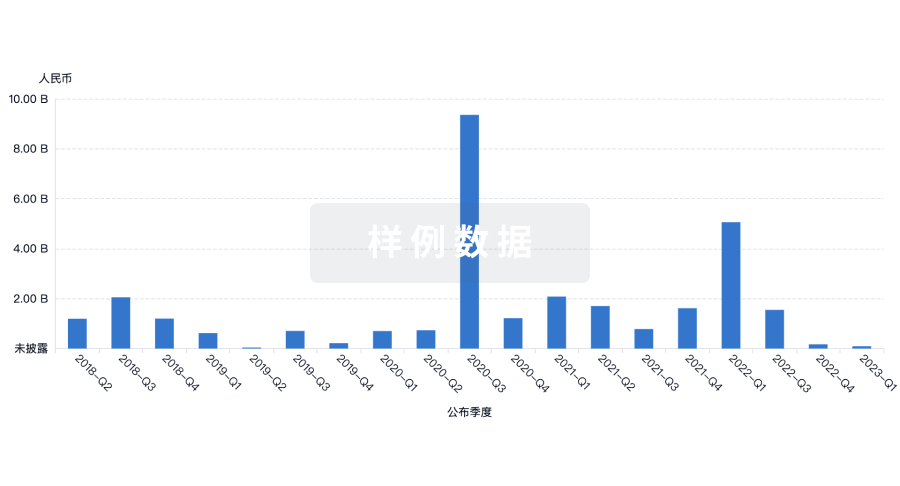

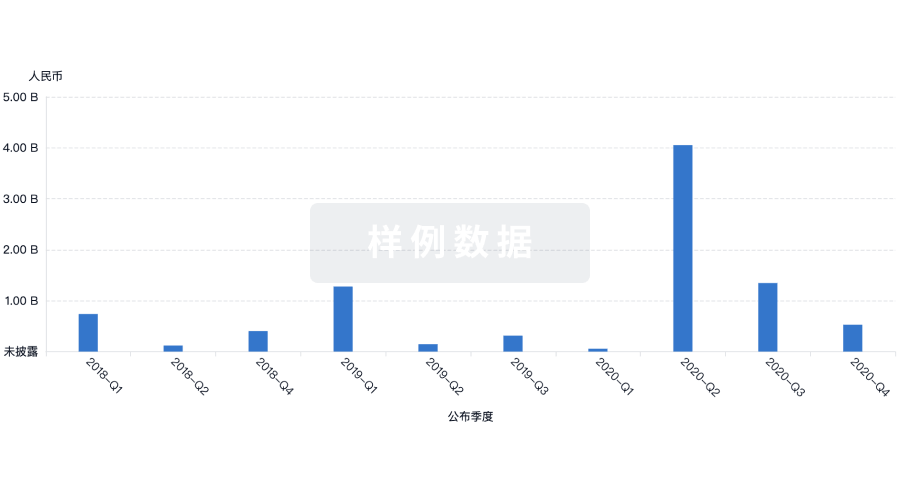

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或

生物医药百科问答

全新生物医药AI Agent 覆盖科研全链路,让突破性发现快人一步

立即开始免费试用!

智慧芽新药情报库是智慧芽专为生命科学人士构建的基于AI的创新药情报平台,助您全方位提升您的研发与决策效率。

立即开始数据试用!

智慧芽新药库数据也通过智慧芽数据服务平台,以API或者数据包形式对外开放,助您更加充分利用智慧芽新药情报信息。

生物序列数据库

生物药研发创新

免费使用

化学结构数据库

小分子化药研发创新

免费使用