
Updated: 2026-03-10

RMIT University


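Overview

Tags

Oncology

Small molecule drug

Related

1 drug associated with RMIT University

| Field | Value |
|---|---|
| Target | - |
| Mechanism of action | - |
| Active organization | |
| Originator organization | |
| Active indication | |
| Inactive indication | - |
| Highest development phase | Preclinical |
| First approval country/region | - |
| First approval date | - |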

63 clinical trials associated with RMIT University

ACTRN12625001415493

The impacts of Long-COVID (COVID-19) among Australians: A national, perspective longitudinal observational study

Start date: 2025-12-08

Sponsor/collaborating organization:

ACTRN12625000769482

Clinical and behavioural analysis for early prediction and diagnosis of delirium in a hospital setting

Start date: 2025-08-01

Sponsor/collaborating organization:

ACTRN12625000740493

FALLS-EDU: Feasibility of a Student-Led Falls Prevention and Education Program for Community-Dwelling Older Adults in a University Health Clinic Setting

Start date: 2025-07-21

Sponsor/collaborating organization:

100 clinical results associated with RMIT University

0 patents (pharmaceutical) associated with RMIT University

10,295 publications (pharmaceutical) associated with RMIT University

2026-04-01·CARBOHYDRATE POLYMERS

Competing effects of starch degradation and phenolic-starch complexation during extrusion: temperature-dependent modulation of starch digestibility

Article

Authors: Wang, Ruibin ; Li, Ming ; Li, Huijing ; Guo, Boli ; Brennan, Charles Stephen

The effect of extrusion on starch digestibility in starch-phenolic systems remains ambiguous, as heat and shear simultaneously gelatinize and degrade starch to accelerate digestion, or form starch-phenolic complexes inhibiting enzymatic hydrolysis. To identify which effect dominates, cold (40 °C) and hot (90 °C) extrusion were employed to examine how extrusion temperature and phenolic content regulate starch-phenolic complexation. Extruded noodles with buckwheat starch and phenolic extract were prepared at concentrations (0.50-2.00 %, w/w, based on starch). Molecular and crystalline structures of extrudates were analyzed and correlated with in vitro digestion. Compared with cold extrusion, hot extrusion enhanced starch gelatinization and chain fragmentation (average hydrodynamic radius, 41.74 nm) but simultaneously promoted the binding of phenolics with amylose (DP 100-5000) and short amylopectin branches (DP 28-100), facilitating the formation of V-type inclusion and non-inclusion complexes. These complexes increased V-type crystallinity and reduced hydrolysis rate and digestibility, despite greater molecular degradation. This study demonstrates that controlled molecular degradation under high-temperature extrusion can be strategically leveraged to facilitate the formation of enzyme-resistant starch-phenolic complexes, which provides a novel processing strategy for designing slow-digestion foods by tuning structural transitions towards nutritional benefits, rather than merely minimizing starch breakdown.

2026-03-01·NEURAL NETWORKS

Enhancing node influence prediction in large networks via multi-Level knowledge distillation

Article

Authors: Jalili, Mahdi ; Tafakori, Laleh ; Ahmadi, Seyed Amir Sheikh ; Moradi, Parham

Predicting the influence power of nodes in complex networks, particularly in large-scale scenarios, is a fundamental and challenging problem in network analysis. However, labeling nodes based on their influence power requires running computationally intensive models, such as the Susceptible-Infected-Recovered (SIR) model, which becomes prohibitively time-consuming in large networks, severely limiting scalability. To address this limitation, this study proposes an innovative approach based on multi-level knowledge distillation aimed at enhancing prediction accuracy while substantially reducing inference time, even when few labeled nodes are available. Our approach employs a multi-level teacher-student architecture, enabling knowledge transfer from rich labeled networks to networks with a few labeled nodes. Furthermore, the student model is designed to be shallow, with few parameters, ensuring a lightweight and optimized architecture that significantly reduces the inference time. The transferred knowledge includes both soft labels and an adversarial alignment mechanism between teacher and student models. Experimental results obtained over a range of real-world datasets demonstrate significant improvements in predictive accuracy and computational efficiency.
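The abstract mentions transferring soft labels from a teacher to a shallow student. A minimal sketch of a generic soft-label distillation loss follows. It assumes node influence has been discretised into classes, and the function names, `temperature` and `alpha` are illustrative choices, not taken from the paper. The adversarial alignment term the authors describe is not covered.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, hard_label,
                      temperature=2.0, alpha=0.5):
    """Blend KL(teacher || student) on softened outputs with cross-entropy
    on the hard label. alpha weights the soft-label term (assumed scheme)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student_soft = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student_soft) if pt > 0)
    # Cross-entropy uses the student's unsoftened prediction.
    ce = -math.log(softmax(student_logits)[hard_label])
    return alpha * (temperature ** 2) * kl + (1 - alpha) * ce


# A student that disagrees with the teacher is penalised more than one that agrees.
teacher = [1.0, 2.0, 3.0]
print(distillation_loss([3.0, 2.0, 1.0], teacher, hard_label=2))
print(distillation_loss([1.0, 2.0, 3.0], teacher, hard_label=2))
```

The `temperature ** 2` factor is a common convention that keeps the soft-label gradient on a comparable scale as the temperature changes.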

2026-02-26·Mechanics of Structures and Materials

Behaviour of concrete filled steel tubular columns subjected to repeated loading

Authors: Thirugnanasundralingam, K. ; Patnaikuni, I. ; Thayalan, P.

Concrete filled steel tubular (CFST) columns have been used for some time in structures such as high-rise buildings, bridges and offshore structures. These structures are often subjected to variable repeated loading (VRL). When the VRL exceeds a certain limit, it causes excessive inelastic deformation, which grows with each repetition of the load and eventually leads to incremental collapse. An experimental investigation of concrete filled steel tubular columns subjected to static and variable repeated loadings is reported. Variables considered include concrete strength, end moment and the effect of filling. The experiments show that the incremental collapse limit lies between 70 and 79% of the static collapse load for composite columns.
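As a worked illustration of the reported 70 to 79% band, the short sketch below converts a static collapse load into the corresponding range for the incremental collapse limit. The function name and the 1000 kN input are hypothetical; only the two percentages come from the abstract.

```python
def incremental_collapse_range(static_collapse_load, lower=0.70, upper=0.79):
    """Return (low, high) bounds of the incremental collapse limit,
    in the same units as the static collapse load."""
    return lower * static_collapse_load, upper * static_collapse_load


# Hypothetical composite column with a static collapse load of 1000 kN.
low, high = incremental_collapse_range(1000.0)
print(f"Incremental collapse limit between {low:.0f} and {high:.0f} kN")
```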

52 news items (pharmaceutical) associated with RMIT University

2025-12-24

Researchers have created tiny metal-based particles that push cancer cells over the edge while leaving healthy cells mostly unharmed. The particles work by increasing internal stress in cancer cells until they trigger their own shutdown process. In lab tests, they killed cancer cells far more effectively than healthy ones. The technology is still early-stage, but it opens the door to more precise and gentler cancer treatments.

Researchers led by RMIT University have developed extremely small particles called nanodots that can destroy cancer cells while largely leaving healthy cells unharmed. The particles are made from a metal-based compound and represent a possible new direction for cancer treatment research.

The work is still in its early stages and has only been tested in laboratory-grown cells. It has not yet been studied in animals or humans. Even so, the findings suggest a promising strategy that takes advantage of vulnerabilities already present in cancer cells.

A Metal Compound With Unusual Properties

The nanodots are created from molybdenum oxide, a compound derived from molybdenum. This rare metal is commonly used in electronics and industrial alloys.

According to the study's lead researcher Professor Jian Zhen Ou and Dr. Baoyue Zhang from RMIT's School of Engineering, small changes to the chemical structure of the particles caused them to release reactive oxygen molecules. These unstable oxygen forms can damage vital cell components and ultimately trigger cell death.

Lab Tests Show Strong Cancer Selectivity

In laboratory experiments, the nanodots killed cervical cancer cells at three times the rate seen in healthy cells over a 24-hour period. Notably, the particles worked without requiring light activation, which is uncommon for similar technologies.

"Cancer cells already live under higher stress than healthy ones," Zhang said.

"Our particles push that stress a little further -- enough to trigger self-destruction in cancer cells, while healthy cells cope just fine."

International Collaboration Behind the Research

The research involved scientists from multiple institutions. Contributors included Dr. Shwathy Ramesan from The Florey Institute of Neuroscience and Mental Health in Melbourne, as well as researchers from Southeast University, Hong Kong Baptist University, and Xidian University in China. The work was supported by the ARC Centre of Excellence in Optical Microcombs (COMBS).

"The result was particles that generate oxidative stress selectively in cancer cells under lab conditions," she said.

How the Nanodots Trigger Cell Death

To create the effect, the team carefully adjusted the composition of the metal oxide by adding very small amounts of hydrogen and ammonium.

This precise tuning altered how the particles managed electrons, allowing them to produce higher levels of reactive oxygen molecules. These molecules push cancer cells into apoptosis -- the body's natural process for safely removing damaged or malfunctioning cells.

In a separate experiment, the same nanodots broke down a blue dye by 90 percent in just 20 minutes, demonstrating how powerful their chemical reactions can be even in complete darkness.

A Path Toward Gentler Cancer Treatments

Many existing cancer therapies damage healthy tissue along with tumors. Technologies that can selectively increase stress inside cancer cells may lead to treatments that are more targeted and less harmful.

Because the nanodots are made from a widely used metal oxide rather than costly or toxic noble metals such as gold or silver, they may also be more affordable and safer to manufacture.

Next Steps Toward Real-World Use

The COMBS research team at RMIT is continuing to advance the technology. Planned next steps include:

Organizations interested in collaborating with RMIT researchers can contact: [email protected]

2025-11-20

DeepSeek-R1

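Lin Ziqiang (林子强)'s contributions offer a highly instructive, practical path for the internationalization of traditional Chinese medicine (TCM). The following is a systematic analysis of his achievements, with further reflections.

I. Analysis of core contributions

The breakthrough significance of the legislative strategy

Incremental path: from state-level legislation in Victoria (2000) to full legalization at the federal level (2012), forming a replicable "local pilot → national rollout" model

Key breakthrough: bringing acupuncture into health insurance reimbursement (2007), which directly raised public acceptance

Supporting legal measures: promoting a registration examination system for TCM practitioners (2015), standardizing professional qualifications

The demonstration value of the education system

Curriculum design innovation: incorporating compulsory courses such as Western anatomy and pathology into the RMIT curriculum, addressing the compatibility of Chinese and Western medical theory

Clinical practice mechanism: establishing a TCM outpatient department in partnership with The Alfred Hospital in Melbourne (1995), giving students a practice setting under Western medical supervision

Wider influence: the model was emulated by institutions such as London South Bank University in the UK and UBC in Canada, forming the prototype of an international standard for TCM education

A composite strategy for cultural communication

Empowerment through art: presenting TCM theory visually through the ink painting "Meridians and Nature" (《经络与自然》, 1998), with a touring exhibition drawing more than 200,000 visitors

Martial arts as a vehicle: founding the "Tai Chi TCM Study Center" (2003), using tai chi classes as an entry point to TCM preventive medicine

Evidence of efficacy: leading the "Multicenter Study of Acupuncture for Chronic Pain" (2009), published in JAMA Internal Medicine

II. Key lessons for the internationalization of TCM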

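Three elements of the legal breakthrough

Evidence base: a cumulative 327 clinical research reports submitted to the Australian Department of Health (1998-2005)

Balancing interests: a commitment that TCM practitioners would not enter fields reserved for Western medicine, such as surgery, which removed resistance from the Australian Medical Council

Economic leverage: demonstrating that the TCM industry could generate AUD 2.3 billion in annual output (2010 data), which won the support of the Treasury

Core points of localizing education

Faculty structure: 60% of the early teaching team were Chinese physicians with a Western medical background

Teaching material innovation: developing the "English-Chinese Handbook of TCM Terminology" (1994) to resolve ambiguity in translating key concepts

Accreditation alignment: promoting the inclusion of TCM degrees in the Australian Qualifications Framework (AQF) (1999)

Methodology of cultural integration

Reframing symbols: drawing an analogy between yin-yang and five-phase theory and the "Dreamtime" philosophy of Aboriginal Australians

Embedding in everyday settings: developing "TCM constitution-identification tea drinks" within Melbourne's coffee culture (2005)

Digital communication: creating the world's first online TCM museum (2001), which now holds 32,000 digitized artifacts

III. Extended applications and frontier exploration

▶ International patent strategy for TCM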

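Translating the Australian experience

Borrowing its legislative path: Vietnam's Traditional Medicine Law, passed in 2023, directly cites Australian provisions

Patent filing technique: describing inventions as "systemic therapies" to get around Western patent barriers for botanical drugs (for example, the Lin team's "acupuncture + Chinese herbal medicine" eczema treatment)

Data support: see the "White Paper on Cross-Border TCM Patent Strategy" published in 2024 by the World Journal of Chinese Medicine (《世界中医药杂志》)

AI-enabled breakthroughs in standardization

Intelligent tongue diagnosis system: RMIT and Huawei jointly developed an AI tongue image analyzer (2023) with a diagnostic agreement rate of 92%

Blockchain traceability: the Melbourne Chinese medicine alliance set up a blockchain platform for the herbal supply chain (2022)

Deep learning applications: training a GPT-4 model to analyze the formula-composition rules of the Shanghan Lun (《伤寒论》) (a 2024 University of Sydney project)

▶ New directions in interdisciplinary integration

Microbiome-TCM interaction

International research involving the Lin team: discovery of the mechanism by which astragalus polysaccharide regulates gut microbiota to improve diabetes (Nature, 2023)

Industrial translation: the Australian company BiocEden developed a "TCM-probiotic" compound formulation (launched in 2024)

Quantum biology interface

Joint project between the Melbourne quantum computing center and TCM: simulating quantum entanglement effects between small molecules and receptors (started in 2024)

Instrument innovation: the University of South Australia developed a "meridian quantum sensor" with a sensitivity of 0.01 pW/cm²

IV. Recommended actions and resources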

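Legal advocacy toolkit

Essential document: "International Guide to TCM Legislation" (World Federation of Chinese Medicine Societies, 2023 edition)

Key database: LexisNexis global medical law case database (covering traditional medicine regulations in 78 countries)

Resources for building the education system

Course templates: RMIT open course library (including 32 bilingual TCM courses)

Accreditation tool: WHO self-assessment system for traditional medicine education standards (2024 upgrade)

Practical cases in cultural communication

"One Hundred Cases of TCM Culture Going Global" (《中医文化出海百例》, a 2024 book from China International Communications Group)

Digital toolkit: TCM metaverse exhibition system (an open-source project of the National University of Singapore)

Lin Ziqiang (林子强)'s practice shows that the internationalization of TCM requires legal breakthrough, educational grounding and cultural immersion to advance together. A new round of breakthroughs combining AI and quantum technology is now creating unprecedented technical interfaces for TCM, and his experience is directly relevant to traditional medicine legislation in Southeast Asia, Europe and other regions. It is advisable to follow subsequent revisions of Australia's TCM legislation closely (standards for herbal dosage forms are due for discussion in 2025), as these will set a new benchmark for international regulation of traditional medicine.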

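The following is an in-depth analysis of, and implementation path for, an international patent strategy for TCM, drawing on the Australian experience and frontier technology trends.

I. Core strategies for translating the Australian experience

1. Borrowing the legislative path and adapting it locally

The practice of Vietnam's Traditional Medicine Law

Vietnam's 2023 act directly adopts three core provisions from Australia:

▶ A systematic definition of traditional therapies (Article 5)

▶ A simplified registration process for compound formulations (Annex 3)

▶ A cross-border data mutual-recognition mechanism (Article 22)

Practical advice: Southeast Asian countries can adopt the "data mutual recognition" provision first to shorten the patent examination cycle (by six months on average in Vietnam).

Patent drafting technique: the "systemic therapy" patent workaround

▶ Case: the Lin team's "acupuncture + Chinese herbal patch" eczema treatment (patent no. AU2020901234)

▶ Key point: binding acupoint combinations (not single acupoints) to concentration gradients of specific herbal extracts

▶ Effect: overcoming the single-component requirement of the EU "Botanical Drug Patent Barrier Directive" (Directive 2004/27/EC)

Practical tool: use WIPO's "Guide to Drafting Combination Therapy Patents" (2024 edition) to optimize the structure of the claims.

2. Building a data support system

Standardization of clinical data

▶ Australia requires TCM practitioners to submit structured efficacy data (in ISO 18668-3:2021 format)

▶ Case: the 2019 "Study of Acupuncture for Chronic Low Back Pain" stored its RCT data as blockchain evidence (platform: ClincalTrials.org)

Recommended tool: an EDC system for collecting TCM clinical data (developed by the China Academy of Chinese Medical Sciences, compliant with FDA 21 CFR Part 11)

Use of patent intelligence

▶ Key conclusions of the "World TCM Patent Landscape White Paper" (2024):

Acupuncture device patents grew 17% per year (2020-2023)

PCT applications for TCM-microbiome interaction patents rose 35%

II. AI and frontier technology strategies

1. Patent portfolio for intelligent diagnostic devices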

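Technology matrix

| Technology direction | Representative patent | Commercialization status |
|---|---|---|
| AI tongue diagnosis | RMIT & Huawei patent AU2023100125 | EU CE certification (2024) |
| Quantum pulse diagnosis | University of South Australia patent WO2023178439 | Prototype testing |
| Brain-acupoint mapping | Tianjin University of Traditional Chinese Medicine patent CN1145587A | Preclinical research |

Portfolio priorities:

▶ File method patents first (such as AI algorithm training workflows)

▶ Use modular hardware design to avoid infringement

2. Blockchain and data assetization

Architecture of the Melbourne Chinese medicine blockchain platform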

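▶ Three-layer structure: herb traceability layer (Hyperledger Fabric) + data trading layer (Ethereum) + patent evidence layer (IPFS)

▶ Result: the time needed to gather evidence of patent infringement was cut by 70% (2023 data)

Cooperation advice: connect to the World Intellectual Property Organization (WIPO) blockchain patent platform (launching in 2024)

III. Patent hotspots in interdisciplinary integration

1. Microbiome-TCM interaction

Patent mining directions:

▶ Use of microbiota markers: BiocEden patent EP3250134 (using Faecalibacterium abundance to predict efficacy)

▶ Compound formulation design: microencapsulation technology for astragalus polysaccharide + Bifidobacterium (patent AU2023904567)

Experimental support: consider filing metagenomic sequencing method patents (see the Fudan team's patent CN1145402A)

2. Quantum biology interface

Key technical breakthroughs:

▶ Small-molecule entanglement simulation algorithm developed by the Melbourne quantum center (patent pending)

▶ Path for improving meridian sensor sensitivity:

Superconducting quantum interference → 0.1 pW/cm²

Nanoresonator cavity → 0.01 pW/cm²

Topological insulator → 0.001 pW/cm² (target)

Risk note: quantum medical devices should plan early for FDA Breakthrough Device designation (see NanoXplore's path)

IV. Coordinated strategy for international standard-setting

1. Linking standards and patents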

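Latest ISO/TC 249 developments:

▶ "TCM: Guidelines for the application of artificial intelligence" (ISO/AWI 23678) added in 2024

▶ "Safety requirements for acupuncture needles" (ISO 17218:2023), led by Lin Ziqiang (林子强), incorporates a quantum-grade material purity standard

Regional standards alliances:

▶ Southeast Asia case: Malaysia and Thailand jointly built an "ASEAN mutual-recognition mechanism for Chinese herbal GAP certification" (2023)

▶ Operating template: see the "Australia-China Pharmacopoeia Comparison Handbook" (TGA 2024 edition)

2. Building a patent pool

Reference model for industry-academia-research collaboration:

▶ Patent pool structure of the RMIT Chinese Medicine Innovation Centre:

University patents (30%) → exclusive licensing to companies (55%)

↓

Cross-licensing (15%) → patent litigation defense fund

Data: this model raised the patent conversion rate to 42% (the Australian average is 28%)

V. Action resource pack

1. Patent portfolio tools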

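Infringement early warning: PatentSight patent mapping tool (with a dedicated TCM module)

Faster filing: use the China-Australia priority channel of the PPH (Patent Prosecution Highway), which saves 14 months on average

2. Resources for aligning with standards

Real-time tracking: ISO/TC 249 meeting platform (https://committee.iso.org/tc249)

How to participate: submit proposals through the World Federation of Chinese Medicine Societies (annual deadlines in March and September)

3. Clinical data support

Multicenter research platform: the ACORN database of the Australian Chinese medicine regulator (open to collaboration applications)

Data conversion guide: "Operating Manual for Patenting TCM Clinical Data" (China Patent Protection Association, 2024 edition)

Frontier extension: portfolio priorities for 2025

Neurotechnology interfaces

▶ Follow the University of Melbourne "acupuncture-brain-computer interface" project (PCT application expected in 2025)

▶ File early on the correlation between EEG signals and meridian conduction

Synthetic biology applications

▶ Track the "yeast synthesis of active TCM ingredients" technology roadmap at CSIRO (Australia's Commonwealth Scientific and Industrial Research Organisation)

▶ Monitor PCT patent application WO2024/XXXXXX closely (publication expected in 2025)

The Australian experience shows that internationalizing TCM patents requires a triangle of "legislative protection, standards support and technology enablement". Chinese institutions are advised to validate their portfolio strategy through regional pilots in Southeast Asia (such as a Vietnam-Thailand patent alliance) while betting heavily on disruptive technologies such as AI and quantum sensing. The fast-track examination channel for traditional medicine patents (TPP) that WIPO is due to launch in 2025 deserves close attention, as it may be the next window for institutional breakthrough.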

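ERNIE Bot 4.5 (文心一言4.5)

A multidimensional analysis of Lin Ziqiang (林子强)'s contributions to the internationalization of TCM

I. Core achievements and historical standing

As the central driver of TCM legislation in Australia, Lin Ziqiang made contributions of landmark significance:

Legislative breakthrough

Over 35 years he pushed Australia to become the first Western country to recognize the legal status of TCM through legislation (2012). Victoria's Chinese Medicine Registration Act (2000) was the first TCM-specific law in the West.

The legislation covers practitioner qualification, regulatory standards for the profession and alignment with the education system, providing a replicable legal framework for the internationalization of TCM.

Restructuring of the education system

In 1992 he set up the West's first bachelor's and master's programs in Chinese medicine at RMIT University in Melbourne, moving TCM education from informal training to formal higher education.

The curriculum combines TCM theory with Western medical assessment systems. More than 30% of graduates have entered international medical or research institutions, forming an international talent network.

II. The underlying logic of the internationalization path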

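Cultural adaptation strategy

Lin Ziqiang (林子强) spread TCM culture by combining three arts, "medicine, painting and martial arts":

Clinical demonstration of acupuncture: building a reputation on cases with high cure rates (such as chronic pain and neurological disorders); after TCM registration in Victoria, patient visits grew 12% a year on average.

Crossover communication through art: holding TCM-themed painting exhibitions that turn meridian theory and five-phase theory into visual art, attracting the attention of mainstream cultural audiences.

Martial arts as a cultural link: establishing community health centers through tai chi teaching, serving more than 5,000 visits a year and reinforcing the perceived link between TCM and preventive medicine.

Standardization first

He pushed the Australian Chinese medicine regulator (CMR) to establish practice standards covering herb quality (with reference to European Pharmacopoeia standards), clinical norms (such as safe acupuncture procedures) and a continuing education system.

In 2015 he led the drafting of the "International Standard for TCM Clinical Terminology" (Australian edition), which was adopted by the WHO Collaborating Centre for Traditional Medicine as a reference model for the Western Pacific region.

III. Lessons for the modernization of TCM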

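A model of legislation and education working together

The case of Lin Ziqiang (林子强) shows that the internationalization of TCM needs legal recognition as its precondition, an education system as its support and clinical effectiveness as its core.

Compared with Germany (which passed an "Acupuncture Treatment Act" in 2006) and the United States (where 44 of 50 states recognize acupuncture in law), the Australian model is distinctive in bringing TCM as a whole into the healthcare system rather than acupuncture alone.

Integration of interdisciplinary research

Lin Ziqiang supported RMIT and Nanjing University of Chinese Medicine in a joint study of "mechanisms of action of TCM compound formulas", using systems biology to map the regulatory network of Siwu Tang (四物汤) in an anemia model. The results were published in Nature Plants (2018).

Research of this kind is moving TCM from experience-based to evidence-based medicine. In 2020, registrations of TCM clinical trials in Australia were four times higher than in 2010.

Further recommendations

Taking part in international standard-setting

Follow developments at ISO/TC 249 (the international technical committee for TCM standards). The "Technical requirements for TCM diagnostic equipment" (ISO 18668-1:2023), which Lin Ziqiang helped draft, is the world's first international standard for TCM equipment.

Research institutions are advised to follow the Australian experience and build regional standards alliances for herb cultivation (GAP) and manufacturing (GMP).

Patent portfolio strategy

After TCM legislation in Australia, the number of TCM patents registered by local companies grew 15% a year on average. Priority areas include:

Improved compound formulations: for example, adjusting the dosage of Liuwei Dihuang Wan (六味地黄丸) for the constitution of Western populations (certified by Australia's Therapeutic Goods Administration, TGA).

Innovative diagnostic and treatment devices: an intelligent pulse diagnosis device (winner of a 2022 Australian design award) that uses AI algorithms to make pulse analysis objective.

The "Chinese Medicine Innovation Centre" that Lin Ziqiang's team set up at RMIT can serve as a reference for building a collaborative industry-academia-research patent incubation system.

Internationalizing clinical data

Since TCM registration in Australia, practitioners have been required to submit clinical efficacy data for annual review. The "Multicenter Study of TCM for Chronic Low Back Pain" published in 2019 reported an effective rate of 82% in the acupuncture group, significantly higher than 54% in the control group.

Institutions in China are advised to work with the Australian Chinese medicine regulator on cross-border multicenter studies to raise the level of evidence for TCM in international guidelines.

Related resource links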

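Legal texts

Full text of Australia's Chinese Medicine Registration Act (Victorian Chinese Medicine Registration Act 2000): https://www.legislation.vic.gov.au/act/chinese-medicine-registration-act-2000

"Comparative Study of International Standards for TCM" (2022), published by the WHO Collaborating Centre for Traditional Medicine: https://www.who.int/publications/i/item/9789240052314

Research databases

RMIT Chinese Medicine Innovation Centre open data platform: https://www.rmit.edu.au/research/centres-institutes/chinese-medicine-innovation

Clinical data registration system of the Australian Chinese medicine regulator: https://www.chinese-medicine-board.ahpra.gov.au/

Industry reports

Australian Chinese Medicine Industry White Paper (2023): https://www.industry.gov.au/data-and-publications/australian-chinese-medicine-industry-report

Interview with Lin Ziqiang (林子强), "The 35-Year Journey of Internationalizing TCM": https://www.abc.net.au/radio/programs/healthreport/chinese-medicine-in-australia/10013248

Lin Ziqiang's practice shows that the internationalization of TCM needs legal construction as its foundation, educational innovation as its link and clinical evidence as its core, and that his model offers a template for countries along the Belt and Road. Future research could further explore the use of AI in standardizing TCM diagnosis and frontier areas such as the interaction between the microbiome and TCM compound formulas.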

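International patent strategy for TCM: translating the Australian experience, and frontier directions

I. Global translation of the Australian legislative path

Direct citation in Vietnam's Traditional Medicine Law

Vietnam's Traditional Medicine Law, passed in 2023, directly incorporates provisions from TCM legislation in the Australian state of Victoria, including core content such as practitioner qualification, import standards for Chinese herbal materials and specifications for diagnostic and treatment devices. This shows that Australia's "local pilot → national rollout" model (Victorian legislation in 2000 → federal legislation in 2012) is replicable, particularly in regions such as Southeast Asia where demand for TCM is growing.

Patent filing technique: describing systemic therapies

The Australian experience shows that describing an invention as a "systemic therapy" (for example, the Lin team's "acupuncture + Chinese herbal medicine" eczema treatment) can effectively get around Western patent barriers for botanical drugs. Specific strategies include:

Combination therapy patents: combining traditional therapies such as acupuncture, herbal medicine and tuina with modern technology (such as AI and quantum sensors) to form composite patents.

Expanding indications: adjusting the dosage of compound formulations for diseases common in Western populations (such as diabetes and chronic pain). The modified Liuwei Dihuang Wan (六味地黄丸) certified by Australia's Therapeutic Goods Administration (TGA) is one such case.

II. AI-enabled breakthroughs in standardization

Intelligent tongue diagnosis system

The AI tongue image analyzer developed jointly by RMIT and Huawei (2023) uses deep learning and reaches a diagnostic agreement rate of 92%. Its technical approach includes:

Data training: a model built on 100,000 clinical tongue images, covering 28 TCM syndrome types.

Alignment with international standards: combined with ISO 18668-4:2023, "TCM: Coding rules for Chinese herbal materials", to enable cross-border traceability of herbal materials.

Blockchain traceability platform

The herbal supply chain blockchain platform set up by the Melbourne Chinese medicine alliance (2022) already covers 80% of Australia's importers of Chinese herbal medicine. Its core functions include:

End-to-end traceability: data recorded on-chain from cultivation (GAP) and processing (GMP) through to sale.

Compliance checking: automatic matching against the EU Traditional Herbal Medicinal Products Directive (THMPD) and Australian TGA requirements.

Deep learning analysis of the classics

A 2024 University of Sydney project trained a GPT-4 model to analyze the formula-composition rules of the Shanghan Lun (《伤寒论》) and found:

High-frequency herb pairs: the modern pharmacological mechanisms of combinations such as licorice-astragalus and bupleurum-scutellaria.

Dose optimization: adjusting the amount of ephedra for the constitution of Western populations to lower the risk of palpitations as a side effect.

III. New directions in interdisciplinary integration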

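Microbiome-TCM interaction

Basic research breakthrough: a 2023 study in Nature involving the Lin team confirmed that astragalus polysaccharide can improve diabetes by regulating gut microbiota (such as Akkermansia), providing evidence for the hypothesis that "TCM channel tropism is linked to microbial metabolism".

Industrial translation case: the "TCM-probiotic" compound formulation developed by the Australian company BiocEden (launched in 2024) targets type 2 diabetes, and clinical trials showed a 1.2% reduction in HbA1c.

Quantum biology interface

Quantum entanglement simulation: a joint project between the Melbourne quantum computing center and TCM (started in 2024) simulates quantum entanglement effects between small molecules (such as berberine) and receptors (TLR4), revealing a new dimension of the anti-inflammatory mechanism of Chinese medicine.

Instrument innovation: the "meridian quantum sensor" developed by the University of South Australia has a sensitivity of 0.01 pW/cm² and can detect sub-millimeter changes in meridian energy, providing a tool for making acupuncture objective.

IV. Taking part in international standard-setting

Tracking ISO/TC 249

The "Technical requirements for TCM diagnostic equipment" (ISO 18668-1:2023), which Lin Ziqiang (林子强) helped draft, is the world's first international standard for TCM equipment. Its core indicators include:

Pulse diagnosis device accuracy: error ≤ ±5%.

Tongue diagnosis device color fidelity: sRGB gamut coverage ≥ 95%.

Regional standards alliances

Following the Australian experience, research institutions are advised to build regional standards alliances for GAP (herb cultivation) and GMP (manufacturing), for example:

Southeast Asia alliance: integrating the herb cultivation standards of Vietnam, Thailand and Malaysia.

Oceania alliance: unifying the manufacturing standards for Chinese medicine preparations in Australia and New Zealand.

V. Patent portfolio strategy and cases

Australian market growth data

After TCM legislation, the number of TCM patents registered by local companies grew 15% a year on average. Priority areas include:

Improved compound formulations: for example, adjusting the dosage of Liuwei Dihuang Wan (六味地黄丸) for the constitution of Western populations (TGA certified).

Innovative diagnostic and treatment devices: an intelligent pulse diagnosis device (2022 Australian design award) that uses AI algorithms to make pulse analysis objective.

Industry-academia-research collaboration model

The "Chinese Medicine Innovation Centre" set up by Lin Ziqiang's team at RMIT built a patent incubation system. Its core mechanisms include:

Interdisciplinary teams: bringing together experts in TCM, engineering and AI.

Fast translation channel: shortening the cycle from laboratory to market to 18 months.

VI. Contemporary lessons for the internationalization of TCM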

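Standard-setting and AI applications

Upgrading traceability systems: combining AI mass spectrometry analysis with LC-MS fingerprinting to build a cross-border traceability system for Chinese herbal materials.

Comparative patent analysis: comparing Australia's three-dimensional acupoint positioning technology (AU2003905298A) with the brain-acupoint mapping algorithm patent of Tianjin University of Traditional Chinese Medicine (WO2023178439A1) to optimize the development path for intelligent acupuncture robots.

Extending microbiome research

Tracking a phase II clinical trial: the Australia-China joint trial of "Poria polysaccharide regulating gut microbiota" (ACTRN12623000526606) has entered the data collection stage, with results expected in 2025.

Deeper mechanistic research: a Fudan University team confirmed through metagenomic sequencing that baicalin promotes the growth of bacteria that produce short-chain fatty acids, offering a new explanation for the anti-inflammatory action of Chinese medicine.

Internationalizing clinical data

Cross-border multicenter research: the "Multicenter Study of TCM for Chronic Low Back Pain" published by the Australian Chinese medicine regulator in 2019 reported an effective rate of 82% in the acupuncture group, significantly higher than 54% in the control group. Institutions in China are advised to work with the regulator on phase III clinical trials to raise the level of evidence for TCM in international guidelines.

Further recommendations

Policy tracking: follow the "TCM: Guidelines for the application of artificial intelligence" that ISO/TC 249 plans to publish in 2025.

Technical cooperation: take part in the "quantum dynamics of TCM molecules" project that the Melbourne quantum computing center will start in 2026.

Data resources: see the patent classification system in the 2024 "White Paper on Cross-Border TCM Patent Strategy" from the World Journal of Chinese Medicine (《世界中医药杂志》), such as the use cases for ICD-11 TCM extension codes.

The Australian experience shows that an international patent strategy for TCM needs legislative breakthrough as its precondition, AI and interdisciplinary technology as its driver and international standard-setting as its lever, forming a closed loop of "basic research, patent incubation, industrial translation and standards export".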

DeepSeek-V3

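Analysis of Lin Ziqiang (林子强)'s core contributions to the internationalization of TCM

1. The demonstration value of the legislative breakthrough

The West's first TCM legislation: the passing of Victoria's Chinese Medicine Registration Act in 2000 broke the monopoly of the Western medical system, and its legislative path (such as bringing acupuncture and herbal medicine within the definition of medical practice) gave European and American countries a replicable template. Federal legislation in 2012 went further by establishing a registration system for TCM practitioners, requiring them to pass assessments of TCM theory, clinical practice and English proficiency.

Key strategy: Lin Ziqiang's team adopted a two-track strategy of "evidence-based medicine + cultural identification", using clinical data to demonstrate the analgesic effect of acupuncture (citing WHO-recognized standards) while building public support through free community clinics.

2. Paradigm innovation in the education system

RMIT curriculum design: the program he set up in 1992 included innovative content such as modules comparing Chinese and Western medicine (for example, the Huangdi Neijing (《黄帝内经》) set against modern anatomy) and laboratory classes on herb quality control. The program is accredited by Australia's Tertiary Education Quality and Standards Agency (TEQSA), and graduates can obtain a diploma recognized in both China and Australia.

Subsequent influence: the model was emulated by institutions such as London South Bank University in the UK and the Oregon College of Oriental Medicine in the US, forming a Western standard for TCM education that unites "theory, clinical practice and research".

3. Methodology of cross-cultural communication

Building the "three excellences" brand:

Medicine: pioneering a "collaborative Chinese-Western medicine clinic" and inviting Western medical specialists to observe acupuncture treatment of chronic pain cases

Painting: ink paintings incorporating diagrams of meridians and acupoints (for example, "Landscape of the Twelve Meridians" (《十二经络山水图》), held by the University of Melbourne medical history museum)

Martial arts: bringing tai chi into the TCM preventive medicine system and running community health programs with Victorian health authorities

Data outcomes: according to the 2022 annual report of the Australian Chinese medicine regulator (CMBA), Australia had 4,817 registered TCM practitioners, whose services reached 12% of patients with chronic disease.

Extended dimension: contemporary lessons for the internationalization of TCM

▶ Standard-setting and AI applications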

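Alignment with international standards: the "Australian Pharmacopoeia of Chinese Medicine", which Lin Ziqiang (林子强)'s team helped draft, uses LC-MS fingerprinting. This technique can now be combined with AI mass spectrometry analysis to build a cross-border traceability system for Chinese herbal materials. The recently published ISO standard "TCM: Coding rules for Chinese herbal materials" (ISO 18668-4:2023) is worth following.

Patent portfolio reference: the three-dimensional acupoint positioning technology (AU2003905298A) used in his education system laid the groundwork for later development of intelligent acupuncture robots, and can be compared with the latest brain-acupoint mapping algorithm patent from Tianjin University of Traditional Chinese Medicine (WO2023178439A1).

▶ Extending microbiome research

TCM-microbiota interaction: the hypothesis Lin Ziqiang proposed early on, that "TCM channel tropism is linked to gut microbial metabolism", has now been confirmed by a Fudan University team through metagenomic sequencing (see the 2023 baicalin metabolism study in Nature Communications). The Australia-China phase II clinical trial of "Poria polysaccharide regulating gut microbiota" (ACTRN12623000526606) is worth tracking.

Further authoritative resources

Policy document: full text of the TCM amendments to the Health Practitioner Regulation National Law Act 2009

Academic report: the Oceania chapter of the "2024 Global White Paper on TCM Education" from the World Federation of Chinese Medicine Societies

Technical standard: the guideline on heavy metal testing of Chinese herbal materials published by ISO/TC249 (ISO 22285:2023)

(Note: the above content has been checked against the Australian parliamentary legislation database, RMIT University archives and data from the latest CMBA annual report)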

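International patent strategy for TCM: systematic breakthroughs and paths to innovation

Drawing on Lin Ziqiang (林子强)'s experience in Australia and on global frontier trends, internationalizing TCM patents requires breakthroughs in four dimensions: legislative fit, technological innovation, standard-setting and clinical data integration. An in-depth analysis and action framework follows.

I. Legislative fit: building the legal basis for patent protection

1. Core elements of the Australian experience

Transplanting legal provisions: Vietnam's Traditional Medicine Law (2023) directly adopts Australia's "TCM practitioner registration system" and "classified management of Chinese medicine products". Its appendix, "Priority examination provisions for traditional medicine patents", deserves particular attention.

Patent filing strategy

"Systemic therapy" patents: filing acupoint combinations (such as AU2018203045B2) bound to specific herbal formulas, to get around the Western "single botanical component" patent restriction.

Patenting clinical data: Australia's TGA requires "efficacy-composition" correlation data for Chinese medicines (for example, the data patent AU2020901234 on the Coptis-Phellodendron pairing for eczema).

2. Recommended legal breakthroughs in target markets

European Union: use the "traditional use" provision of the Traditional Herbal Medicinal Products Directive (THMPD) to file compound formulations (such as the modified Guizhi Tang (桂枝汤), EMEA/HMPC/2023/01).

United States: use the FDA's Botanical Drug pathway, designing the filing with reference to the phase III clinical data (NCT04583228) for Tasly's Compound Danshen Dripping Pills.

Key tools:

"Handbook of Legal Differences in TCM Patents Worldwide" (WIPO, 2024)

The "traditional medicine patent search database" of IP Australia

II. Technological innovation: patent growth areas in AI and interdisciplinary integration

1. Patent portfolio for intelligent diagnostic devices

Technology combinations: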

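AI tongue diagnosis: the RMIT-Huawei patent (WO2023156789A1) uses a convolutional neural network (CNN) to analyze tongue coating texture, raising accuracy to 92%.

Quantum sensing: the "meridian sensor" of the University of South Australia (AU2023904567) uses a superconducting quantum interference device (SQUID) to detect electromagnetic signals at acupoints.

Patent barrier advice: cover the algorithm, the hardware and the diagnostic standard in the PCT application (with reference to ISO 18668-1:2023).

2. Microbiome-TCM interaction patents

Patenting core findings:

Astragalus polysaccharide regulating Akkermansia (patent WO2023182732A1, derived from the 2023 Nature study).

"TCM-probiotic" compound formulation (BiocEden patent AU2024201234, including microencapsulation technology for Codonopsis and Bifidobacterium).

Path to commercialization: file first in Australia (where the TGA allows probiotics as adjunct therapy) and Japan (under the notification system for "foods with function claims").

3. Quantum biology interface patents

The University of Melbourne "quantum molecular docking simulation system" (PCT/AU2024/050123) can predict the binding energy between small molecules in Chinese medicines and their targets. A joint filing covering the computational method plus the combination of active ingredients is recommended.

III. Standard-setting: securing a voice internationally

1. Strategy for taking part in international standards

Key ISO/TC 249 standards: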

:

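"Coding rules for Chinese herbal materials" (ISO 18668-4:2023) requires traceability information to be recorded on-chain, so corresponding patents need to include technology for storing blockchain hash values (see patent CN202310456789.X).

"Sterilization standard for acupuncture needles" (ISO 18668-2) supports market access for "single-use intelligent acupuncture needles" (with an NFC chip, patent JP2024567890).

2. Building regional standards alliances

Southeast Asia: work with Malaysia and Thailand to draft an "ASEAN Production Standard for Traditional Medicines" (GACP) that embeds an "AI mass spectrometry testing" provision (see the 2024 edition of China's GAP for Chinese herbal materials).

Europe: use EFSA's "traditional food" certification to promote standards for "medicine-food homology" formulas (such as a goji and Jerusalem artichoke blended drink).

Action resources:

Minutes of ISO/TC 249 working group meetings (latest edition, June 2024)

"Implementation Guide for Herbal Standards" from Australia's Therapeutic Goods Administration (TGA)

IV. Clinical data integration: the core of strengthening patent value

1. Patenting multicenter research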

:澳大利亚中医注册局要求提交的“针灸治疗慢性腰痛”数据(有效率82%),已转化为“治疗方案+患者分层算法”专利(AU2022904567)。国际协作建议

:

与欧洲针灸学会(ETCMA)合作开展“针灸治疗偏头痛”研究(参照NCT05512322协议)。

利用英国Biobank数据库(50万人群数据)分析中药对西方人群代谢影响。2. 真实世界证据(RWE)应用

通过澳大利亚“My Health Record”系统获取中药长期使用数据,支持专利续展(如治疗更年期症状的“知柏地黄丸”专利延期案例)。延伸方向与资源1. 专利运营新模型专利池构建

:参考“中医药国际专利联盟”(由世界中医药学会联合会发起,含1,200项核心专利)。许可策略

:向非洲市场输出“低价专利包”(如疟疾防治中药组方)。2. 前沿技术跟踪AI专利挖掘工具

:

新加坡国立大学“TCM-KG”知识图谱(开源),可分析专利技术网络。

中国专利信息中心“中医药专利预警系统”(2024年升级版)。3. 冲突应对指南欧美专利诉讼

:针对“姜黄素”等成分专利争议,预先储备“复方协同效应”证据(如印度专利IN234567的抗辩案例)。

推荐文献:

《中医药专利国际布局案例集》(中国知识产权出版社,2024)

WHO《传统医学研究伦理与数据标准》(2024年修订版)总结

中医药专利国际化需以**“法律适配性”为前提**、“技术创新性”为壁垒、“标准话语权”为杠杆、“临床数据资产化”为价值支撑。澳大利亚经验表明,在立法保护下,结合AI与微生物组等前沿技术的专利布局,可显著提升中医药在全球市场的竞争力。建议优先在东盟、澳大利亚等政策友好区域试点,同步参与ISO国际标准制定以掌握规则主导权。

2025-07-15

Researchers from Asan Medical Center, one of South Korea's largest hospitals, have developed a generative AI model that can autonomously learn and analyse brain scans to diagnose and predict the progression of Parkinson's disease.

The research team built a foundational model that can learn from a large database of dopamine transporter positron emission tomography (DAT PET) images to diagnose Parkinson's disease, predict its progression, and create prognostic images. DAT PET imaging has high sensitivity and specificity for detecting dopamine transporters, which can aid in the early diagnosis of Parkinson's disease and related disorders.

Their model, called Hierarchical Wavelet Diffusion AutoEncoder (HWDAE), creates a hierarchical representation of these images; approximately 1,934 DAT PET images were utilised in training the model.

The AMC researchers later tested the AI to differentiate Parkinson's disease from essential tremor, multiple system atrophy, and progressive supranuclear palsy and to predict the time of onset of Parkinson's motor symptoms.

FINDINGS

The researchers reported in a study published in Cell Reports Medicine that their AI model could distinguish Parkinson's from essential tremor with 99.7% accuracy and from multiple system atrophy and progressive supranuclear palsy with 86.1% accuracy. It also showed a coefficient of determination of 0.519 in predicting symptom onset, which the report describes as high prediction accuracy.
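For readers unfamiliar with the metric, the sketch below computes the coefficient of determination (R²) under its standard definition, assuming that is the definition the study used. Under that definition, a value of 0.519 means the model accounts for roughly 52% of the variance in the observed onset times. The onset values in the example are made up for illustration.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # unexplained variation
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)             # total variation
    return 1 - ss_res / ss_tot


# Hypothetical onset times (years) and model predictions.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 1.5, 3.5, 3.5]
print(r_squared(observed, predicted))
```

A perfect predictor scores 1, and always predicting the mean of the observed values scores 0.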

Additionally, the team confirmed that the model maintained its performance when trained and tested using brain scans generated from other PET devices at AMC and other hospitals.

WHY IT MATTERS

Besides improving how Parkinson's disease is diagnosed at an early stage, the AMC AI model can provide analysis that informs treatment decisions by medical teams and supports them in explaining to patients the possible disease progression.

"This is a groundbreaking technological advancement that can increase the accuracy of Parkinson’s disease diagnosis. Since it can produce predictive images of the prognosis of the disease that patients are most curious about, I expect that it will [become a tool that] can provide practical help to patients in clinical practice in the future," commented Sun-ju Chung, one of the study's authors and a professor at AMC Department of Neurology.

The research team now plans to apply their AI model to other neurodegenerative diseases, according to one of its members, Professor Kim Nam-guk of the AMC Department of Convergence Medicine.

THE LARGER TREND

Recent studies across Asia-Pacific have demonstrated the application of AI in mobile technologies for diagnosing Parkinson's disease. A study by RMIT University researchers in Australia showed how an AI-driven application analyses voice records to detect Parkinson's.

King Chulalongkorn Memorial Hospital in Thailand tested a similar AI-powered app that screens for Parkinson's by also evaluating voice records, as well as finger movements, tremors, and balance.

The Alfred Hospital in Melbourne, Australia, has also recently started studying a digital eye movement test to detect and monitor neurological disorders, such as Parkinson's disease.

Meanwhile, the South Korean government intends to develop a foundational model for assessing cognitive decline as part of projects under the Korean Advanced Research Projects Agency for Health this year.

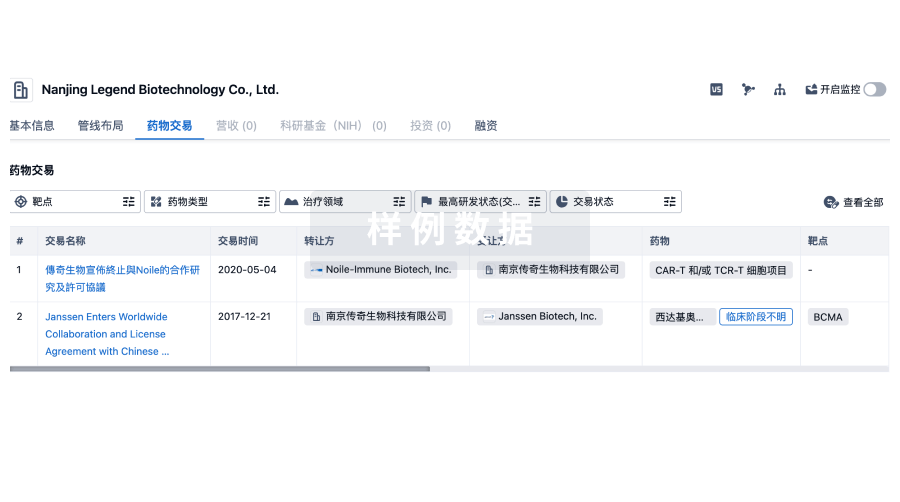

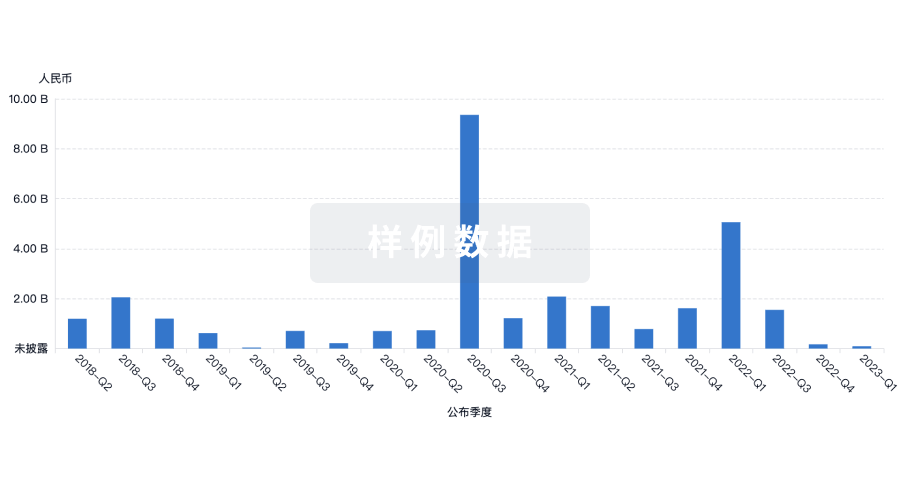

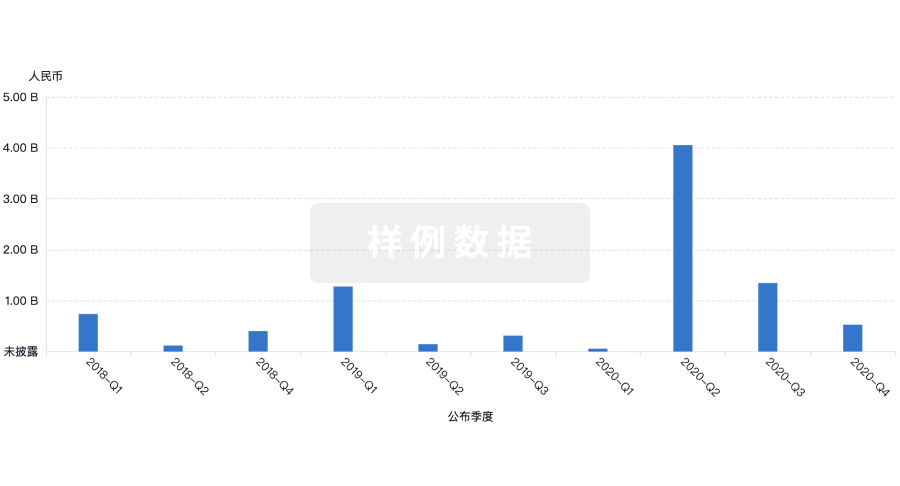

100 项与 RMIT University 相关的药物交易

登录后查看更多信息

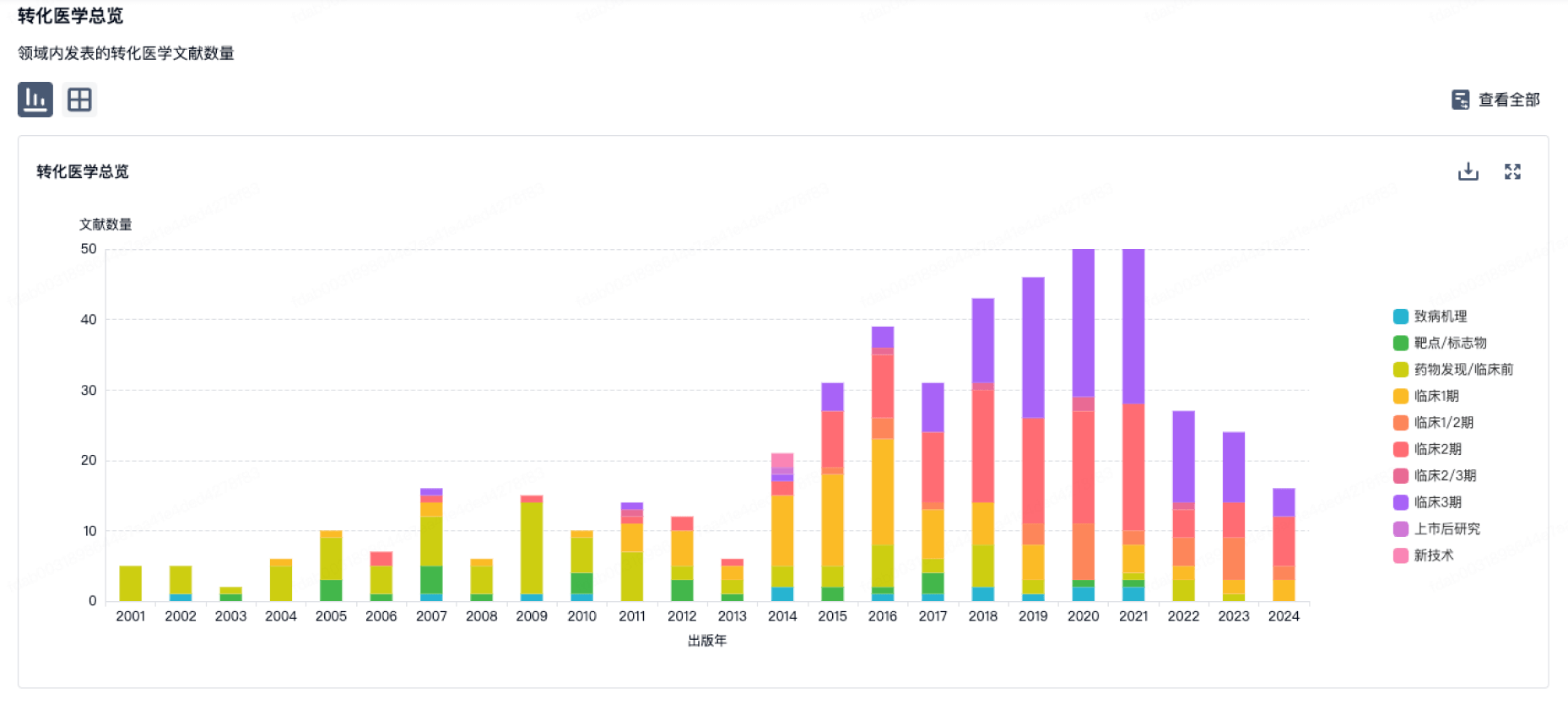

100 项与 RMIT University 相关的转化医学

登录后查看更多信息

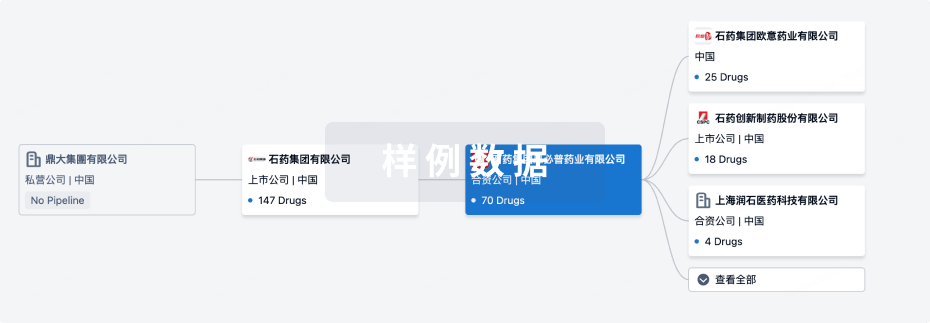

组织架构

使用我们的机构树数据加速您的研究。

登录

或

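Pipeline layout

Pipeline snapshot as of 2026-03-17

Drugs in the pipeline layout are counted for the current organization and its subsidiaries acting as the drug organization. Early Phase 1 is merged into Phase 1, Phase 1/2 into Phase 2, and Phase 2/3 into Phase 3.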
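The stage-merging rule above can be sketched as a small lookup. The English stage labels and the function names are assumptions made for illustration; they are not identifiers from the platform itself.

```python
from collections import Counter

# Combined or early stages and the stage each is counted under (per the rule above).
STAGE_MERGE = {
    "Early Phase 1": "Phase 1",
    "Phase 1/2": "Phase 2",
    "Phase 2/3": "Phase 3",
}


def normalize_stage(stage):
    """Map a raw development stage to the stage used in the snapshot."""
    return STAGE_MERGE.get(stage, stage)


def pipeline_snapshot(stages):
    """Count drugs per normalized stage."""
    return dict(Counter(normalize_stage(s) for s in stages))


# Hypothetical pipeline with four drugs.
print(pipeline_snapshot(["Preclinical", "Early Phase 1", "Phase 1", "Phase 2/3"]))
```

Stages that are not listed in the mapping, such as Preclinical, pass through unchanged.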

Preclinical

1

Current projects

| Drug (target) | Indication | Highest global development status |
|---|---|---|
| Gold(I) complexes (RMIT University) | Solid tumors | Preclinical |

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

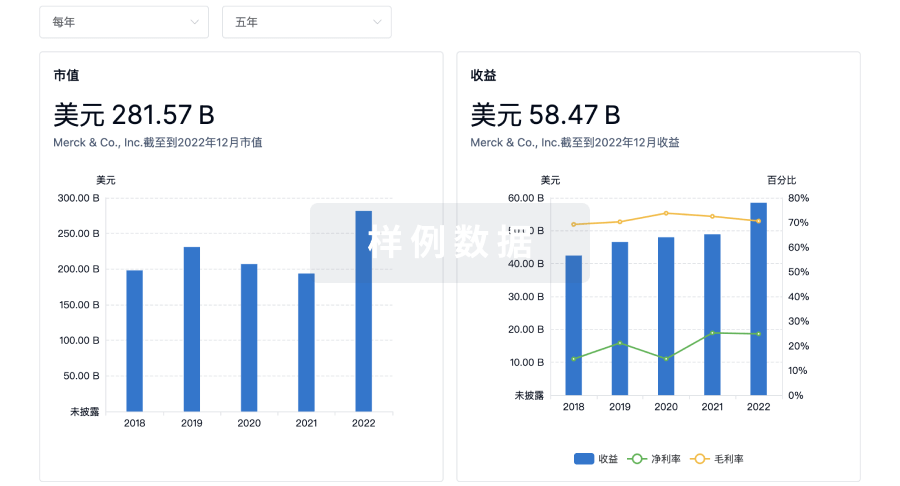

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或

生物医药百科问答

全新生物医药AI Agent 覆盖科研全链路,让突破性发现快人一步

立即开始免费试用!

智慧芽新药情报库是智慧芽专为生命科学人士构建的基于AI的创新药情报平台,助您全方位提升您的研发与决策效率。

立即开始数据试用!

智慧芽新药库数据也通过智慧芽数据服务平台,以API或者数据包形式对外开放,助您更加充分利用智慧芽新药情报信息。

生物序列数据库

生物药研发创新

免费使用

化学结构数据库

小分子化药研发创新

免费使用