预约演示

更新于:2026-03-08

Musashino University

更新于:2026-03-08

概览

标签

皮肤和肌肉骨骼疾病

其他疾病

呼吸系统疾病

小分子化药

疾病领域得分

一眼洞穿机构专注的疾病领域

技术平台

公司药物应用最多的技术

靶点

公司最常开发的靶点

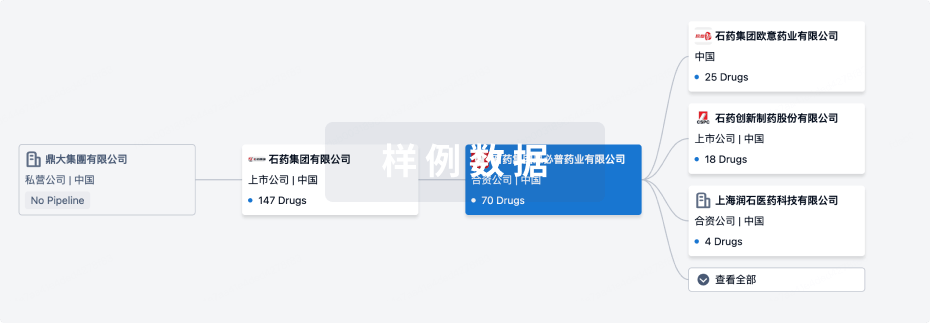

组织架构

使用我们的机构树数据加速您的研究。

登录

或

管线布局

2026年05月20日管线快照

管线布局中药物为当前组织机构及其子机构作为药物机构进行统计,早期临床1期并入临床1期,临床1/2期并入临床2期,临床2/3期并入临床3期

临床前

1

1

临床1期

登录后查看更多信息

当前项目

登录后查看更多信息

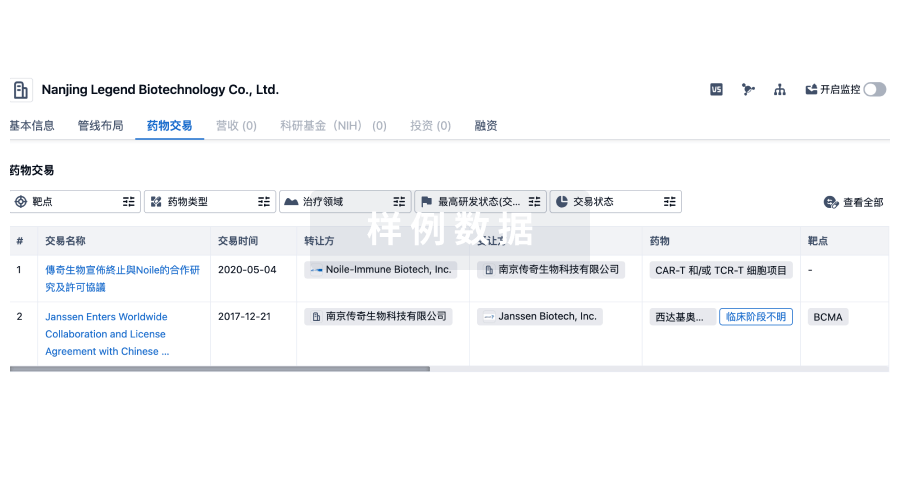

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

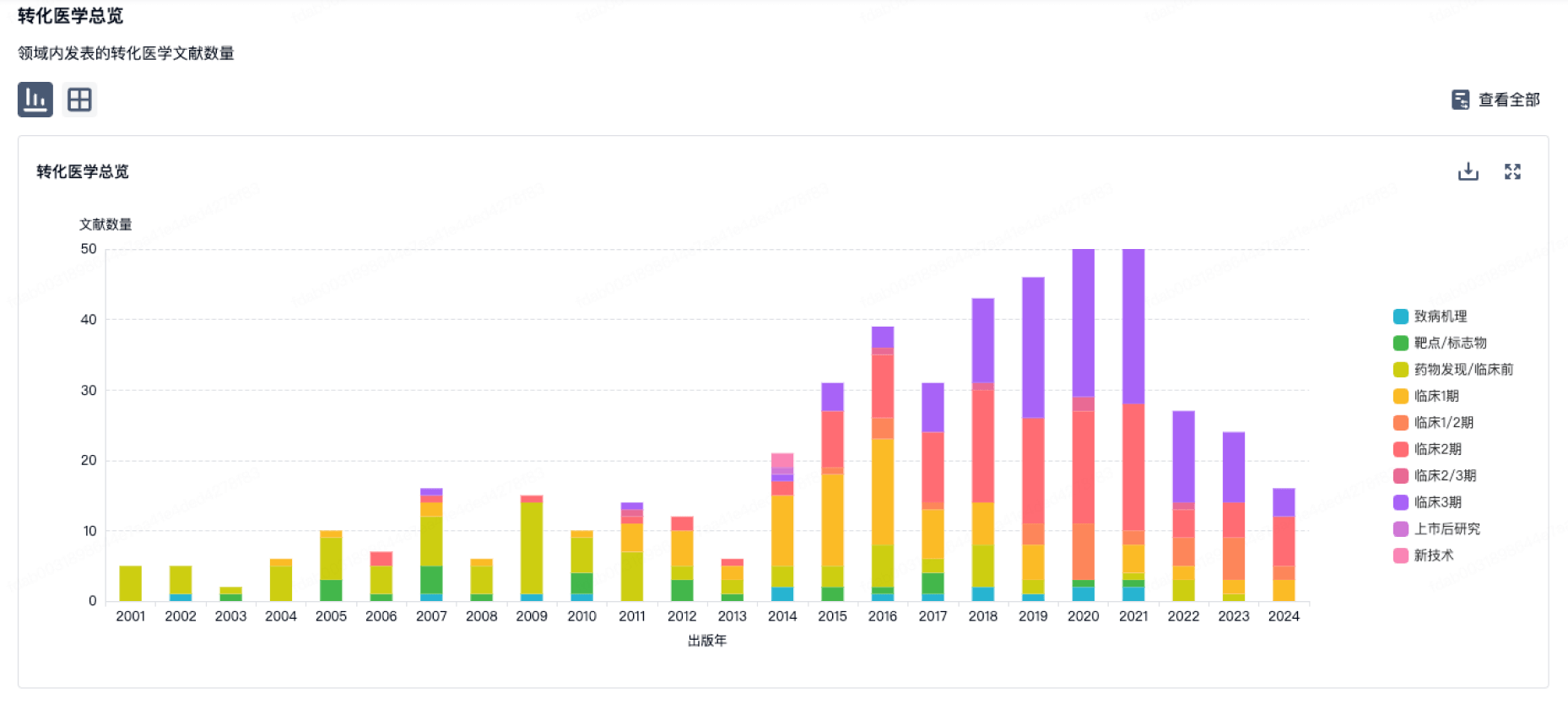

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

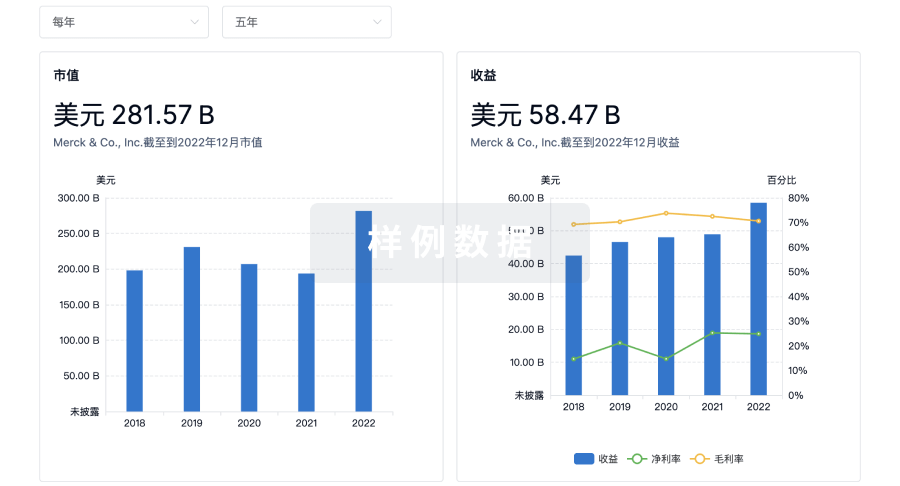

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

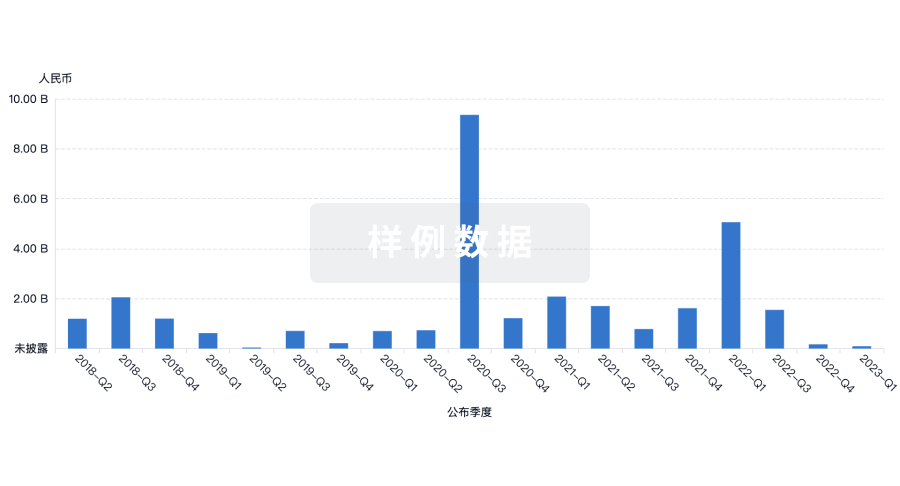

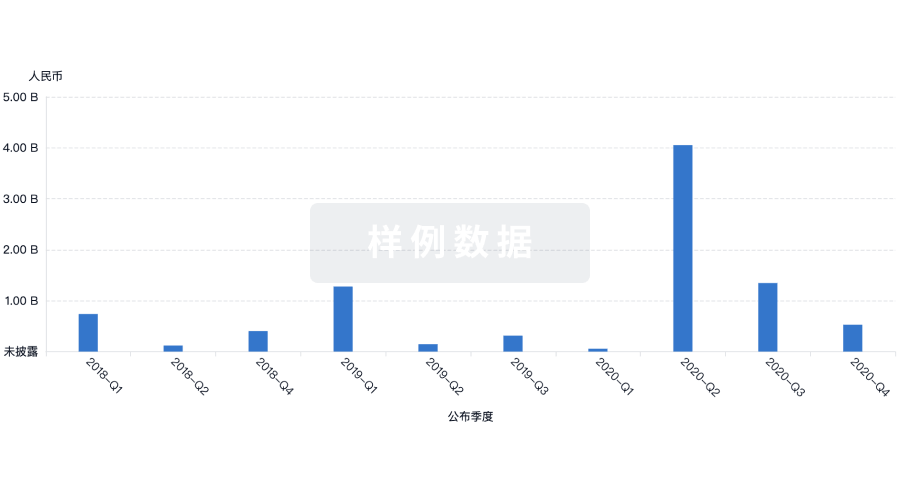

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或

生物医药百科问答

全新生物医药AI Agent 覆盖科研全链路,让突破性发现快人一步

立即开始免费试用!

智慧芽新药情报库是智慧芽专为生命科学人士构建的基于AI的创新药情报平台,助您全方位提升您的研发与决策效率。

立即开始数据试用!

智慧芽新药库数据也通过智慧芽数据服务平台,以API或者数据包形式对外开放,助您更加充分利用智慧芽新药情报信息。

生物序列数据库

生物药研发创新

免费使用

化学结构数据库

小分子化药研发创新

免费使用