
Updated: 2026-03-08

Southeast University

Overview

Tags

Oncology

Other diseases

Nervous system diseases

Small-molecule drugs

Fusion proteins

Chemical drugs


| Top five drug types | Count |
|---|---|
| Small-molecule drugs | 19 |
| Chemical drugs | 2 |
| Recombinant polypeptides | 2 |
| Gene editing | 2 |
| Fusion proteins | 2 |

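Related

37 drugs related to Southeast University

Target: -

Mechanism of action: Inflammation mediator modulators

Indications under investigation:

Highest development phase: Approved

Country/region of first approval: Japan

Date of first approval: 1986-03-01

Target: -

Mechanism of action: Stem cell replacements

Active organizations:

Originator organizations:

Inactive indications: -

Highest development phase: Phase 2

Country/region of first approval: -

Date of first approval: -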

218 clinical trials related to Southeast University

NCT06990477

Effect of EIT-guided PEEP On Clinical Outcomes in ARDS Patients With Higher Recruitability: a Pilot Randomized Controlled Trial

Acute respiratory distress syndrome (ARDS) is a common clinical syndrome with high mortality. Mechanical ventilation (MV) is the cornerstone of ARDS management but can lead to ventilator-induced lung injury. Positive end-expiratory pressure (PEEP), one of the main components of MV, is widely used in clinical practice. However, PEEP selection remains difficult in patients with moderate to severe ARDS. Electrical impedance tomography (EIT), an imaging tool that evaluates regional ventilation distribution at the bedside, can support individualized PEEP selection for mechanically ventilated patients. Our previous study found that moderate to severe ARDS patients with higher recruitability could benefit from EIT-guided PEEP. This trial compares the effect of EIT-guided PEEP titration with PEEP set by the fraction of inspired oxygen (FiO2)-PEEP table on clinical outcomes in patients with higher recruitability.

Start date: 2026-01-28

Sponsor/collaborator:

NCT07362537

Enteral Nutrition Delivery in Prone Position Ventilated Patients With Moderate to Severe Acute Respiratory Distress Syndrome: a Randomized Controlled Trial

This pilot study aims to compare the impact, safety, and practical utility of gastric versus postpyloric feeding in patients with moderate to severe ARDS receiving prone position ventilation. Enrolled patients will be randomly assigned to receive enteral nutrition through either a nasogastric tube or a nasojejunal tube. The primary endpoint is achievement of enteral nutrition goals. Secondary endpoints include the incidence of hospital-acquired infections, ventilator-free days within 28 days, ICU length of stay, ICU mortality, 28-day mortality, 60-day mortality, the incidence of enteral nutrition intolerance, and the rate of enteral nutrition intake.

Start date: 2026-01-20

Sponsor/collaborator:

NCT07284888

Practices of Prone Positioning Ventilation in Patients With Moderate-to-Severe ARDS in Intensive Care Units: A Registry-Based Observational Study

Acute respiratory distress syndrome (ARDS) is a major cause of mortality in intensive care units. Prone position ventilation (PPV) is an important component of ARDS management and has been shown to reduce mortality in patients with moderate-to-severe ARDS. However, substantial heterogeneity exists in treatment response to PPV. Previous studies suggest that lung morphology (focal versus non-focal patterns on chest CT) may influence responses to ventilatory strategies, but whether lung morphology modifies the effect of PPV remains unclear. In addition, the benefits and safety of PPV in patients with acute brain injury (ABI) complicated by ARDS are uncertain. Although PPV improves oxygenation, it may impair cerebral venous drainage and increase intracranial pressure, raising concerns about its use in ABI patients. Evidence from randomized trials in this population is limited and excludes patients with more severe hypoxemia or elevated intracranial pressure.

Furthermore, the optimal duration and termination criteria for PPV are not well established. While PPV improves alveolar recruitment and reduces ventilator-induced lung injury, prolonged PPV may lead to excessive sedation exposure and PPV-related complications. Identifying the appropriate timing to discontinue PPV may help balance clinical benefits and potential harms.

This study is a prospective, multicenter registry enrolling patients with moderate-to-severe ARDS. The objectives are: (1) to determine whether lung morphology can guide individualized PPV strategies; (2) to evaluate the effectiveness and safety of PPV in patients with ARDS complicated by acute brain injury; (3) to investigate the optimal timing for termination of PPV through target trial emulation methods. In addition to these core objectives, the study will include other exploratory aims.

Start date: 2026-01-01

Sponsor/collaborator:

100 clinical results related to Southeast University

0 patents (pharmaceutical) related to Southeast University

31,107 publications (pharmaceutical) related to Southeast University

2026-12-31·MECHANICS OF ADVANCED MATERIALS AND STRUCTURES

Experimental investigation on the crashworthy performance of polyurethane foam-filled aluminum cans under repeated impact loads

Authors: Zheng, Sen ; Wang, Yuxuan ; Li, Minghong ; Yang, Chaobin ; Xia, Mengtao ; Lin, Yuanzheng ; Zong, Zhouhong

Sustainable development is a development approach that focuses on economic growth and environmental protection. Substantial natural resources are consumed during the production of aluminum cans. However, recycled aluminum saves 95% of the energy needed to make new aluminum. Additionally, thin-walled tubular structures are widely used as energy absorbers. The current study developed a polyurethane foam-filled aluminum can (PFAC) composite structure, which could be made of recycled aluminum and adopted as an energy absorber. Quasi-static tests and repeated drop-weight tests were conducted to investigate the crushing performance of this polyurethane foam (PU foam)-filled composite structure and its mechanism under multiple impacts. A novel method for identifying the densification displacement of PFACs was proposed so that their energy absorption performance could be reasonably quantified. Quasi-static test results showed that the PU foam filler significantly improved the energy absorption performance of PFACs compared with empty aluminum cans (EACs). Moreover, the composite effect between the aluminum can and the PU foam had a positive effect on the crashworthiness of PFACs. The repeated dynamic impact test results indicated that, compared with EACs, PFACs could withstand more repeated impacts at higher impact energy levels. These findings demonstrated that the PFAC is a promising energy absorber that can withstand repeated impact loads.

2026-12-01·Journal of Clinical Sleep Medicine

Awake speech recordings for machine learning diagnosis of obstructive sleep apnea: a Bayesian meta-analysis

Review

Authors: Tan, Benjamin Kye Jyn ; Hao, Yunrui ; Toh, Song Tar ; Ong, Thun How ; Gao, Esther Yanxin ; Tan, Nicole Kye Wen ; Ng, Adele Chin Wei ; Huang, Guang-Bin ; Leow, Leong Chai ; Leong, Zhou Hao ; Phua, Chu Qin

STUDY OBJECTIVES:

Obstructive sleep apnea (OSA) is a prevalent but underdiagnosed condition linked to serious health risks. Due to the limited accessibility of polysomnography (PSG), AI (Artificial Intelligence)-based speech analysis has gained attention as a non-invasive screening tool. This Bayesian meta-analysis evaluates the diagnostic accuracy of AI models trained on awake speech and examines factors affecting performance.

METHODS:

We systematically searched Medline/PubMed, Embase, Scopus, Web of Science, and IEEE Xplore databases. Eligible studies included adults with OSA diagnosis via in-lab polysomnography or home sleep apnea tests and evaluated AI models using speech recordings. Models evaluated using random-split test sets or k-fold cross-validation were included in a Bayesian bivariate meta-analysis and meta-regression. Publication bias was examined using a selection model approach, while risk of bias and evidence quality were assessed with QUADAS-2 and GRADE.

RESULTS:

From 6,254 screened articles, 8 studies comprising 24 AI models, trained and tested on 1,060 and 825 participants, respectively, were included. All studies used professional microphone recordings in controlled hospital settings. AI models analysing awake speech recordings demonstrated pooled sensitivity and specificity of 82.9% (95% CrI: 80.0-86.4%) and 83.3% (95% CrI: 80.7-86.1%), respectively. The diagnostic odds ratio was 24.3 (95% CrI: 18.2-35.0). Higher mean age improved sensitivity. No significant effects were seen for OSA severity, model type, OSA prevalence, or male percentage. Publication bias was not evident.

CONCLUSION:

AI models trained on awake speech recordings demonstrate good diagnostic accuracy for OSA and hold potential as a practical, scalable screening tool in both clinical and community-based settings.
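As a rough check on how the reported figures relate, the diagnostic odds ratio can be recomputed from the pooled sensitivity and specificity point estimates quoted in the abstract. This is only a sketch: the function name is illustrative, and the abstract's value of 24.3 comes from a Bayesian bivariate model, so a calculation from point estimates is expected to be close to it rather than identical.

```python
def diagnostic_odds_ratio(sensitivity: float, specificity: float) -> float:
    """Diagnostic odds ratio, written as LR+ divided by LR-."""
    if not (0.0 < sensitivity < 1.0 and 0.0 < specificity < 1.0):
        raise ValueError("sensitivity and specificity must lie strictly between 0 and 1")
    positive_lr = sensitivity / (1.0 - specificity)  # positive likelihood ratio
    negative_lr = (1.0 - sensitivity) / specificity  # negative likelihood ratio
    return positive_lr / negative_lr


# Pooled point estimates quoted in the abstract (82.9% and 83.3%).
dor = diagnostic_odds_ratio(0.829, 0.833)
print(f"DOR from point estimates: {dor:.1f}")
```

The result lands near the pooled estimate in the abstract; the small gap is what one would expect when a model-based pooled DOR is compared with a ratio of separately pooled rates.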

2026-12-01·Nano-Micro Letters

Boron-Insertion-Induced Lattice Engineering of Rh Nanocrystals Toward Enhanced Electrocatalytic Conversion of Nitric Oxide to Ammonia

Article

Authors: Han, Peng ; Ling, Chongyi ; Xu, Lei ; Wang, Jinlan ; Gu, Zhengxiang ; Ma, Chen ; Xu, Xiangou ; Gu, Ping ; Chen, Weiwei ; Zheng, Long ; He, Qiyuan ; Su, Dong ; Chen, Ye ; Zeng, Zhiyuan ; Wang, Gang ; Wang, Wenbin

Abstract:

Electrocatalytic nitric oxide (NO) reduction reaction (NORR) is a promising and sustainable process that can simultaneously realize green ammonia (NH3) synthesis and hazardous NO removal. However, current NORR performances are far from practical needs due to the lack of efficient electrocatalysts. Engineering the lattice of metal-based nanomaterials via phase control has emerged as an effective strategy to modulate their intrinsic electrocatalytic properties. Herein, we realize boron (B)-insertion-induced phase regulation of rhodium (Rh) nanocrystals to obtain amorphous Rh4B nanoparticles (NPs) and hexagonal close-packed (hcp) RhB NPs through a facile wet-chemical method. A high Faradaic efficiency (92.1 ± 1.2%) and NH3 yield rate (629.5 ± 11.0 µmol h−1 cm−2) are achieved over hcp RhB NPs, far superior to those of most reported NORR nanocatalysts. In situ spectro-electrochemical analysis and density functional theory simulations reveal that the excellent electrocatalytic performances of hcp RhB NPs are attributed to the upshift of d-band center, enhanced NO adsorption/activation profile, and greatly reduced energy barrier of the rate-determining step. A demonstrative Zn–NO battery is assembled using hcp RhB NPs as the cathode and delivers a peak power density of 4.33 mW cm−2, realizing simultaneous NO removal, NH3 synthesis, and electricity output.

485 news items (pharmaceutical) related to Southeast University

2026-03-07

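A scholar who has worked on GPCRs for fifteen years has built, through 21 CNS (Cell, Nature, Science) papers as corresponding author, a complete scientific system running from ligand discovery to drug development.

Introduction: a record that is still being updated

On March 5, 2026, Cell published online the latest study from Professor Sun Jinpeng's team, on a metabolite-gated vascular contractility switch and the treatment of rosacea. With this paper, the number of CNS papers on which Professor Sun is a corresponding author reached about 21 (9 in Nature, 2 in Science, about 10 in Cell), all of them completed in China after his return.

Even more striking is the acceleration in output: in 2025-2026 alone, the team published about 10 CNS papers as corresponding author. From a Cell paper in early 2025, to Science and Nature papers in the same month of March, to Cell papers in May and November, and then two Cell papers in quick succession at the start of 2026, such a dense run of top-journal output is extremely rare in the life sciences in China.

This article tries to give readers a full overview of the Sun Jinpeng team's recent CNS papers, to clarify the internal logic connecting these works, to analyze the deeper reasons behind the frequent CNS publications, and to discuss the lessons for researchers.

I. Sun Jinpeng: from a Nobel laureate's laboratory to fifteen years of sustained work at Shandong University

Professor Sun Jinpeng graduated from the University of Science and Technology of China in 1998 (dual degrees in biology and computer science), received a PhD in molecular pharmacology from the Albert Einstein College of Medicine in the United States in 2007, and then completed postdoctoral research at Duke University in the laboratory of Professor Robert J. Lefkowitz (2012 Nobel laureate in Chemistry and a founder of the GPCR field). He returned to China full-time in 2011 and set up an independent research group at Shandong University. He is currently Dean of the Advanced Medical Research Institute of Shandong University, a New Cornerstone Investigator, and a recipient of the National Science Fund for Distinguished Young Scholars.

He has long focused on ligand discovery for G protein-coupled receptors (GPCRs), drug target validation, and membrane receptor drug development. He has established core technology platforms such as endogenous ligand capture and high-sensitivity multi-pathway GPCR activity assays, and has achieved systematic, original breakthroughs in GPCR research.

II. A panorama of the CNS papers: a paper-by-paper reading along the timeline

[2021 · Foundation] Discovery of a membrane receptor for glucocorticoids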

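Nature, January 7, 2021: "Structures of glucocorticoid-bound adhesion receptor GPR97-Go complex"

Completed jointly with the Zhang Yan team at Zhejiang University and the Xu Huaqiang team at the Shanghai Institute of Materia Medica, Chinese Academy of Sciences. The study identified the adhesion receptor GPR97 as a membrane receptor for glucocorticoids for the first time and solved the world's first high-resolution cryo-EM structure of an adhesion GPCR in complex with a G protein. The finding provides an important molecular basis for the non-nuclear-receptor functions of glucocorticoids discovered years earlier by Academician Chen Yizhang.

Significance: this work opened the team's systematic program on adhesion GPCRs (aGPCRs) and laid the groundwork for the important hypothesis that the membrane receptors for steroid hormones may be aGPCRs.

[2022 · Breakthrough] The "finger model" of adhesion receptor activation

Nature × 2, April 13, 2022 (published back-to-back): "Structural basis for the tethered peptide activation of adhesion GPCRs" and "Tethered peptide activation mechanism of the adhesion GPCR ADGRG2 and ADGRG4"

The two Nature papers were published back-to-back, led by Professor Sun Jinpeng and Professor Yu Xiao respectively. The studies clarified how adhesion GPCRs self-activate and sense mechanical force, proposed the "finger model" of aGPCR activation, and outlined a general scheme for developing peptide antagonists: introducing a negatively charged modification at a key position of the agonist peptide, like putting a "ring" on the "finger", converts the agonist into an antagonist.

Significance: provides a broadly applicable strategy for drug development across the whole adhesion receptor family.

[2023 · Grand slam] A personal CNS grand-slam year, with a paper selected among China's top ten science and technology advances

Paper 1: the molecular code by which the fish-oil receptor recognizes unsaturated fatty acids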

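Science, March 2, 2023: "Unsaturated bond recognition leads to biased signal in a fatty acid receptor"

Completed jointly with the Zhang Yan team at Zhejiang University. GPR120 (also known as FFAR4) is the receptor for ω-3 fatty acids in fish oil and an important drug target for diabetes. The study solved high-resolution structures of several fatty acids bound to GPR120-G protein complexes and found that nine aromatic residues in the GPR120 ligand pocket specifically recognize the positions of double bonds in unsaturated fatty acids through π-π interactions, with double bonds at different positions steering different biased downstream signaling.

Core contribution: shows how a GPCR selectively activates different signaling pathways by sensing subtle chemical differences among fatty acids (double-bond position), laying a structural foundation for new glucose-lowering drugs that precisely target GPR120.

Paper 2: decoding how olfactory receptors sense odorant molecules

Nature, May 24, 2023: "Structural basis of amine odorant perception by a mammal olfactory receptor"

Completed jointly with the Li Qian team at Shanghai Jiao Tong University School of Medicine. Olfaction is one of the oldest senses, yet the molecular mechanism by which mammalian olfactory receptors recognize odorants had long been unclear. The study systematically revealed how the trace amine-associated receptor TAAR9 senses amine odorants and its distinctive mode of activation. The work was selected for the 2023 list of China's Top Ten Science and Technology Advances News.

Core contribution: pioneering work on the recognition mechanism of mammalian olfactory receptors, which also marks the extension of the Sun Jinpeng team's scope from metabolism-related GPCRs to sensory receptors.

Paper 3: a schizophrenia drug candidate targeting TAAR1

Cell, November 13, 2023: "Structural and signaling mechanisms of TAAR1 enabled preferential agonist design"

Completed jointly with the Li Qian team at Sichuan University and the Wang Yue team at Shandong First Medical University. Trace amine-associated receptor 1 (TAAR1) is an emerging target for schizophrenia. The team systematically analyzed how different endogenous amines activate the various G protein signals of TAAR1, resolved the molecular mechanisms by which different amines activate the TAAR1-Gs/Gq pathways, and went on to develop ZH8651, a small-molecule TAAR1 agonist with dual Gs and Gq activation, which improved schizophrenia-like symptoms in mouse models.

Core contribution: demonstrates a complete path from structure determination to drug candidate design and provides an important reference for TAAR1-targeted treatment of schizophrenia. In this year Sun Jinpeng achieved a personal CNS grand slam (at least one paper each in Cell, Nature, and Science).

[2024 · Expansion] A new paradigm for bitter taste perception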

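Nature, May 22, 2024: "Bitter taste TAS2R14 activation by intracellular tastants and cholesterol"

Completed jointly with the Hua Tian / Liu Zhijie team at ShanghaiTech University. TAS2R14 recognizes the widest range of bitter compounds among the 25 human bitter taste receptors. Using cryo-EM, the study found several paradigm-shifting phenomena: bitter substances do not enter the receptor through the classical extracellular pocket but bind in the intracellular transmembrane region; cholesterol instead occupies the classical orthosteric ligand-binding pocket; and the intracellular end of TM6 coordinates recognition of different ligands and G protein selection through a disorder-to-order conformational change.

Core contribution: overturns the conventional view that GPCR ligands can enter only from the extracellular side and reveals a new ligand recognition paradigm for bitter taste receptors. With this, the team had covered two major sensory modalities, smell and taste.

[2025 · Surge] Seven CNS papers in one year, an unprecedented "paper storm"

2025 was the team's most productive year. From January to November it published 7 CNS papers, a top-journal paper almost every two months.

Paper 1: the evolutionary code of how GPCRs sense acid-base environments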

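Cell, January 2, 2025: "Evolutionary study and structural basis of proton sensing by Mus GPR4 and Xenopus GPR4"

Completed jointly with the Deng Cheng team at Sichuan University. The hydrogen ion (proton) is the smallest ligand in nature. The study chose Xenopus (aquatic, with more acidic blood) and mouse (terrestrial), which live in very different environments, solved seven cryo-EM structures, and found that two histidine residues conserved for more than 462 million years form the common molecular basis of proton sensing by GPR4 across species. It also showed that Xenopus GPR4 adapts to a more acidic environment through a unique histidine, H159.

Core contribution: extends the boundary of GPCR ligand recognition to the smallest ligand, the proton, and interprets the diversity of receptor function from an evolutionary perspective.

Paper 2: discovery of a membrane receptor for androgens, opening a new strategy for safe muscle building

Cell, January 30, 2025: "Identification, structure and agonist design of an androgen membrane receptor"

Completed jointly with the Ines Liebscher team at Leipzig University, Germany. The study screened for and identified GPR133/ADGRD1 as a membrane receptor for the androgen 5α-DHT and found that 5α-DHT enhances skeletal muscle strength through the GPR133-Gs-cAMP pathway. Of greater translational value, the team used virtual screening to find the small-molecule agonist AP503, which increases muscle strength while avoiding side effects mediated by the classical androgen receptor, such as prostatic hyperplasia. The study also found conserved steroid recognition motifs in adhesion receptors, "Φ(F/L)2.64-F3.40-W6.53" and "F7.42××N/D7.46", suggesting that many aGPCRs may recognize different steroid hormones.

Core contribution: establishes the important concept that adhesion receptors are a GPCR subfamily that recognizes steroid hormones and offers a new route to efficient and safe androgen replacement drugs.

Paper 3: the membrane receptor mechanism by which ceramides aggravate atherosclerosis

Nature, March 6, 2025: "Sensing ceramides by CYSLTR2 and P2RY6 to aggravate atherosclerosis"

Completed jointly with the Kong Wei and Jiang Changtao teams at Peking University and the Zheng Jingang team at China-Japan Friendship Hospital. Ceramides have long been regarded as markers of cardiovascular disease, but their receptors were unknown. The study identified CYSLTR2 and P2RY6 as endogenous ceramide receptors in the cardiovascular system for the first time, showed that ceramides aggravate atherosclerosis by activating Gq and inflammasome signaling through these two receptors, and solved the structure of the ceramide-CYSLTR2 complex.

Paper 4: identification of the ceramide membrane receptor that regulates adipose thermogenesis

Science, March 13, 2025: "Metabolic signaling of ceramides through the FPR2 receptor inhibits adipocyte thermogenesis"

A "sister paper" to the Nature paper. The study identified FPR2 as the ceramide membrane receptor in adipocytes and found that C16:0 ceramide inhibits adipose tissue thermogenesis through the FPR2-Gi pathway. It solved the structure of the ceramide-FPR2-Gi complex and, by site-directed mutagenesis, converted the ceramide-insensitive FPR1/FPR3 into ceramide-responsive receptors.

Core contribution (the two papers together): published in the same month in Nature and Science, the two studies jointly map the molecular topology of ceramide signaling, overturn the traditional view that lipid molecules regulate metabolism only by diffusion, and show that the ceramide signaling network is strongly tissue-specific: it inhibits thermogenesis through FPR2 in adipose tissue and aggravates atherosclerosis through CYSLTR2/P2RY6 in the cardiovascular system.

Paper 5: "de-orphanizing" the orphan receptor MRGPRE and revealing a new mechanism by which a gut-microbiota-derived bile acid improves blood glucose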

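Cell, May 29, 2025: "A microbial amino-acid-conjugated bile acid, tryptophan-cholic acid, improves glucose homeostasis via the orphan receptor MRGPRE"

Completed jointly with the Jiang Changtao, Pang Yanli, and Ji Linong teams at Peking University. The study found that tryptophan-cholic acid (Trp-CA) is the amino-acid-conjugated bile acid most markedly reduced in patients with type 2 diabetes and identified the orphan receptor MRGPRE as its membrane receptor. Trp-CA promotes GLP-1 secretion through two MRGPRE signaling pathways, Gs-cAMP and β-arrestin-1-ALDOA phosphorylation, improving glucose homeostasis without the pruritus side effect of conventional bile acids.

Core contribution: provides a complete "microbial metabolite → GPCR → downstream signaling" paradigm for the field of gut microbiota-host metabolic interaction and a new target for treating type 2 diabetes.

Paper 6: a new precision strategy for Alzheimer's disease: biased CCKBR agonists

Cell, November 20, 2025: "Elucidating pathway-selective biased CCKBR agonism for Alzheimer's disease treatment"

Completed jointly with the teams of He Jufang (City University of Hong Kong), Zhang Yong / Tie Lu (Peking University), Du Yang (The Chinese University of Hong Kong, Shenzhen), and Tang Yi (Xuanwu Hospital, Beijing). Starting from the clinic, the study found that the severity of Alzheimer's disease (AD) correlates with reduced CCKBR-Gq signaling activity. Cryo-EM structures of CCK8s-activated CCKBR with three G proteins (Gs/Gq/Gi) revealed the structural basis by which the top, middle, and bottom of the receptor pocket mainly mediate Gs, Gq, and Gi signaling respectively. On this basis the team rationally designed the Gq-biased agonist 3r1, which in the 5×FAD mouse model significantly improved cognition and reduced Aβ plaques and Tau phosphorylation, with better blood-brain barrier penetration and a longer half-life.

Core contribution: exemplifies the translational path "clinical question → structure determination → rational drug design → animal validation" and is a successful application of GPCR biased signaling theory to neurodegenerative disease.

[2026 · Continuing to lead] Three Cell papers at the start of the year

Papers 1 and 2: olfactory receptors sensing fatty acid odors, and an anti-obesity target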

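Cell × 2, January 21, 2026 (published the same day): "Mechanistic Insights into Fatty Acid Odor Detection Mediated by Class II Olfactory Receptors" and "Identification of Or5v1/Olfr110 as an oxylipin receptor and anti-obesity target"

The two Cell papers appeared on the same day. The first, with the Yang Fan, Yu Xiao, Li Qian, and Xia Ming teams, clarified the molecular mechanism by which class II olfactory receptors mediate fatty acid odor perception, continuing the team's work on olfactory receptors since the 2023 TAAR9 study. The second, with the teams of Yang Jichun at Peking University, Yu Xiao, Chai Renjie at Southeast University, and Hao Yong at Renji Hospital, identified Or5v1/Olfr110 as an oxylipin receptor and anti-obesity target, extending the function of olfactory receptors from "smelling odors" to "regulating metabolism".

Core contribution: systematically completes the picture of ligand recognition by olfactory receptors and reveals an unexpected role of olfactory receptors in metabolic regulation, opening a new target direction for obesity treatment.

Paper 3: a metabolite-gated vascular contractility switch and rosacea treatment

Cell, March 5, 2026: "Metabolite-gated vascular contractility switch: OXGR1 activation mechanism enables agonist therapy for rosacea erythema"

Completed jointly with the Li Ji and Deng Zhili team at Xiangya Hospital, Central South University, and Professor Guo Lulu at Shandong University. OXGR1 (also known as GPR99) is the receptor for the metabolite α-ketoglutarate (a TCA cycle intermediate). The study revealed the activation mechanism of OXGR1 and its role in regulating vasoconstriction, and on that basis developed an agonist therapy for the erythema of rosacea.

Core contribution: demonstrates a new paradigm of metabolites regulating vascular function through GPCRs and turns basic receptor research into a treatment strategy for a dermatological disease.

III. Core research logic: six intertwined academic threads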

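Across all 21 CNS papers, six clear academic threads can be distilled:

Thread 1: "finding families" for orphan receptors through systematic ligand discovery

This is the team's defining academic label. Using its self-built endogenous ligand capture system and high-sensitivity multi-pathway GPCR activity assay platform, the team systematically matches orphan receptors with endogenous ligands: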

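| Ligand type | Receptor | Journal / year |
|---|---|---|
| Glucocorticoids | GPR97 | Nature 2021 |
| Itch mediators | MRGPRX1 | Nature 2021 |
| Amine odorants | TAAR9 | Nature 2023 |
| Unsaturated fatty acids | GPR120 | Science 2023 |
| Bitter substances / cholesterol | TAS2R14 | Nature 2024 |
| Protons / hydrogen ions | GPR4 | Cell 2025 |
| Androgens | GPR133 | Cell 2025 |
| Ceramides | FPR2, CYSLTR2, P2RY6 | Science + Nature 2025 |
| Tryptophan-cholic acid | MRGPRE | Cell 2025 |
| Oxylipins | Or5v1/Olfr110 | Cell 2026 |
| Fatty acid odors | Class II olfactory receptors | Cell 2026 |
| α-Ketoglutarate | OXGR1 | Cell 2026 |

Thread 2: adhesion receptors, opening a new track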

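Adhesion receptors are the most "mysterious" GPCR subfamily, and the vast majority are orphan receptors. The team's deep commitment to this area was far-sighted: GPR97/glucocorticoids (Nature 2021) → "finger model"/force sensing (Nature×2 2022) → GPR133/androgens (Cell 2025). Core concept: adhesion receptors are a GPCR subfamily that recognizes steroid hormones.

Thread 3: a map of sensory receptors, from itch to smell, taste, acid-base sensing, and hearing and balance

The team has systematically resolved the GPCR molecular mechanisms of several sensory modalities: itch (Nature 2021) → smell (Nature 2023, Cell 2026×2) → bitter taste (Nature 2024) → acid-base sensing (Cell 2025) → balance (Cell Res 2025).

Thread 4: metabolic receptors and lipid signaling, from fatty acids to ceramides to bile acids

GPR120/fatty acids (Science 2023) → FPR2/ceramides/adipose thermogenesis (Science 2025) → CYSLTR2, P2RY6/ceramides/atherosclerosis (Nature 2025) → MRGPRE/tryptophan-cholic acid/blood glucose (Cell 2025) → OXGR1/α-ketoglutarate/vasculature (Cell 2026).

Thread 5: biased signaling, a decade from theory to application

From the early "flute model" (Nat Commun 2015), to ZH8651, the dual Gs/Gq TAAR1 agonist for schizophrenia (Cell 2023), to 3r1, the Gq-biased CCKBR agonist for Alzheimer's disease (Cell 2025), biased signaling theory has moved from concept to actual drug candidates.

Thread 6: from structure to drug, a complete R&D loop

Almost every project follows the full paradigm "ligand screening → structure determination → mechanism → functional validation → drug candidate". More than 20 of the team's small molecules and peptides have completed animal-level pharmacokinetic and toxicology studies.

IV. The deeper reasons for frequent CNS publications

Reason 1: choosing a "rich ore" direction and staying with it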

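GPCRs are the largest family of drug targets (according to the article, more than 40% of marketed drugs target GPCRs), yet more than 100 orphan receptors still await "de-orphanization". Each important ligand discovered opens a new research area and drug space. The direction is both fundamental (core questions of cellular signal transduction) and translational (directly linked to drug development), so it is naturally suited to high-impact results.

Reason 2: the barrier effect of in-house technology platforms

The team has built a core technology system that other groups cannot quickly replicate: an endogenous ligand capture system, high-sensitivity multi-pathway GPCR activity assays, micro-scale biological force activation methods, cryo-EM structure determination, and AI-assisted ligand design. This combination gives the team a distinctive advantage in the efficiency of ligand discovery.

Reason 3: an efficient, complementary collaboration network

The team has built a three-part collaboration system of "core pharmacology + structural biology + disease function validation":

- Long-term partners: Yu Xiao (Shandong University), Yang Fan (Shandong University), Jiang Changtao (Peking University), Kong Wei (Peking University)
- Structural biology: Zhang Yan (Zhejiang University), Xu Huaqiang (Chinese Academy of Sciences), Hua Tian / Liu Zhijie (ShanghaiTech University)
- International collaboration: Ines Liebscher (Leipzig University, Germany), Torsten Schöneberg
- Clinical translation: He Jufang (City University of Hong Kong), Tang Yi (Xuanwu Hospital, Beijing), Li Ji (Xiangya Hospital, Central South University)

Each project can therefore meet the combined demands of top journals on several dimensions.

Reason 4: telling "complete stories" rather than presenting "scattered data"

Every paper presents a complete chain from discovery to mechanism to application. The CCKBR work started from the analysis of clinical samples from AD patients, went through structure determination and rational drug design, and ended with efficacy validated in animal models. The GPR133 work started from ligand screening, went through cryo-EM structure determination, and ended with AP503, a small molecule that separates the muscle-building effect from the side effects. This completeness greatly raises the impact of each paper.

V. Lessons for researchers

Lesson 1: choosing a direction matters more than choosing a project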

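The GPCR direction Sun Jinpeng chose on returning to China in 2011 was not the hottest field at the time. But it had the properties of a "rich ore": strongly fundamental, with large translational potential and a steady stream of questions. Fifteen years of persistence show that choosing a direction that can be worked systematically pays off far more in the long run than chasing short-term hot topics.

Lesson 2: methodological barriers are the moat for sustained output

Groups that frequently publish in top journals almost all have a distinctive methodological advantage. The Sun Jinpeng team's ligand capture and activity assay systems give it a hard-to-replicate edge in discovering ligands. For a young PI, developing a core technology may be of more strategic value than publishing papers in the short term.

Lesson 3: build a strongly complementary collaboration network

In an era of increasingly interdisciplinary research, the "lone ranger" model finds it harder and harder to produce results that are competitive overall. The team's experience shows that finding partners who are complementary in structure, function, disease models, and clinical translation, so that "1+1>2", is key to raising research impact.

Lesson 4: dare to move first on "cold" tracks

Adhesion receptors and olfactory receptors attracted far less attention when the Sun Jinpeng team entered these areas than they do today. Because it had established research systems there first, the team could seize the initiative as new discoveries emerged. The highest level of research is not chasing hot topics but creating them.

Lesson 5: the compounding effect of long-termism

The 21 CNS papers did not appear suddenly within a year or two; they are the result of fifteen years of systematic accumulation. From building methods to proposing concepts, from the first major discovery to results across the board, this long-term strategy has made later output accelerate, much like "compound interest" in research.

Conclusion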

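From his return in 2011 to 2026, Professor Sun Jinpeng has shown with 21 CNS papers as corresponding author that world-class, systematic, original research done in China is not only possible but can keep accelerating.

His trajectory illustrates an ideal path for a scientist committed to depth: choose the right direction, build the platform, weave the network, tell each complete scientific story well, and then leave the rest to compounding over time.

When the next orphan receptor is "de-orphanized" and the next drug candidate enters preclinical research, there is good reason to believe that the Sun Jinpeng team's list of CNS papers will keep growing.

Note: this article is compiled from published papers and related reports; paper information is current as of March 2026. The paper count covers articles in Cell, Nature, and Science on which Professor Sun Jinpeng is a corresponding author (including co-corresponding author). The exact count may differ slightly depending on the counting criteria.

Copyright notice

This article is reprinted from "Neuro Next"; copyright belongs to the original author. It is translated and reprinted for academic dissemination.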


Gene therapy, Cell therapy

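2026-03-06

· 今日头条 (Toutiao)

> In March 2026, two major studies led by Chinese teams and using domestically developed innovative drugs appeared in quick succession in the New England Journal of Medicine and the Journal of Clinical Oncology. This marks a deep integration of Chinese clinical research with domestic pharmaceutical companies, which is delivering breakthrough "China solutions" for the adjuvant treatment of hard-to-treat tumors such as intrahepatic cholangiocarcinoma and liver cancer, and nearly doubling patient survival.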

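## The GOLP regimen: rewriting treatment guidelines for intrahepatic cholangiocarcinoma

Intrahepatic cholangiocarcinoma is known as the "little king of cancers". Even after radical surgery, the recurrence rate in patients with high-risk recurrence factors is still about 70%, and 5-year survival is only 25%-40%.

To address this clinical gap, the team of **Fan Jia, Zhou Jian, and Shi Guoming at Zhongshan Hospital, Fudan University** pioneered the **"GOLP" neoadjuvant regimen**, which combines GEMOX chemotherapy, the targeted drug lenvatinib (Lenvima), and toripalimab, a PD-1 inhibitor developed by Junshi Biosciences, before surgery.

A phase II/III clinical study enrolling 178 high-risk patients showed a significant survival benefit with the regimen:

- **Median event-free survival (EFS) doubled**: **18.0 months** in the neoadjuvant group versus only **8.7 months** in the upfront-surgery control group.

- **57% lower risk of death**: 24-month overall survival was **79%** in the neoadjuvant group and **61%** in the control group.

- **More complete surgical resection**: the objective response rate in the neoadjuvant group was 55%, and the **R0 resection rate reached 95%**.

- **31% lower risk of recurrence**: median recurrence-free survival was **15.4 months** in the neoadjuvant group, significantly better than **9.7 months** in the control group.

> The study is the first multicenter randomized controlled trial worldwide to explore neoadjuvant therapy for intrahepatic cholangiocarcinoma, filling an international gap.

The regimen was well tolerated: the incidence of grade ≥3 treatment-related adverse events during neoadjuvant treatment was **26%**, there were no treatment-related deaths, and surgical risk did not increase.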

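## TACE plus targeted therapy and immunotherapy: breaking the bottleneck in intermediate and advanced liver cancer

For unresectable intermediate and advanced hepatocellular carcinoma, transarterial chemoembolization (TACE) is the mainstream therapy, but with more than 500,000 procedures a year in China, long-term survival still needs to improve. A study led by the team of **Academician Teng Gaojun at Southeast University** provides high-level evidence for TACE combined with a domestic "targeted + immune" regimen.

The study enrolled 200 inoperable patients, of whom **90% had a tumor burden ≥7 cm** and **40% had vascular invasion**. Patients were randomized to TACE plus Hengrui Pharmaceuticals' camrelizumab (a PD-1 inhibitor) and apatinib (a targeted drug), or to TACE alone.

Key results showed several advances with the combination:

- **66% lower risk of disease progression or death**: by the composite criteria, median progression-free survival was **10.8 months** in the combination group versus only **3.2 months** with TACE alone.

- **Objective response rate doubled**: by mRECIST, **61%** in the combination group versus **29%** with TACE alone.

- **Delayed treatment resistance**: the median time until TACE could no longer be continued was **13.7 months** in the combination group, far longer than **3.9 months** with TACE alone.

Although overall survival data are not yet fully mature, the combination group already shows a numerical advantage (median OS 24.0 vs 21.5 months), and the regimen has been included in the National Health Commission's Guidelines for the Diagnosis and Treatment of Primary Liver Cancer.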

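## Domestic innovative drugs: from following in industry to leading in the clinic

Behind the two studies is solid support from **Chinese domestic innovative drugs**. Toripalimab in the GOLP regimen was developed by Junshi Biosciences and is the first innovative biologic independently developed in China to be approved by the US FDA; camrelizumab in the liver cancer regimen comes from Hengrui Pharmaceuticals. Both are covered by national medical insurance, which greatly improves access.

This joint rise of clinic and industry is reflected in the National Medical Products Administration's 2025 approval data:

- **76 innovative drugs approved during the year**, a record high.

- **Domestic innovative drugs clearly dominant**: domestic products accounted for **80.85%** of chemical drugs and **91.30%** of biological products.

- **Total value of out-licensing deals for innovative drugs exceeded US$130 billion**, showing that Chinese R&D results are gaining global recognition.

## Multidimensional impact: from patient benefit to global contribution

The advances in adjuvant therapy involving Chinese innovative drugs now reach beyond a single cancer type. For example, camrelizumab combined with concurrent chemoradiotherapy in high-risk locally advanced nasopharyngeal carcinoma raised 3-year progression-free survival from **71.3%** to **83.4%**. This supports the potential of domestic innovative drug platforms across multiple tumor types.

From an absence of clinical evidence to publication in leading international journals, and from dependence on imports to domestic leadership, cancer treatment in China is shifting from "follower" to "contributor". Academician Fan Jia said at the results briefing that the relevant "China solutions" may in future be included in international guidelines and promoted worldwide.

For cancer patients worldwide, this means that more effective treatment options, driven by clinical need and supported by domestic R&D, are becoming a reality.

Clinical results, Phase 2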

2026-03-05

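At the ongoing national Two Sessions, pharmaceutical innovation has become a hot topic. This year's Government Work Report explicitly lists biomedicine as an "emerging pillar industry" and stresses that its R&D and application are at the world's forefront, reflecting the strategic importance the state attaches to the biomedical industry and likely bringing stronger combined support in policy and capital. What in-depth views does a CPPCC member from the medical and health sector have on the development of innovative drugs? Recently, a CCTV.com reporter spoke with Hao Haiping, member of the CPPCC National Committee and president of our university. Let's take a look.

In recent years China has made marked progress in innovative drugs. In 2025 the number of innovative drugs reaching the market in China hit a record 76, and the value of out-licensing deals exceeded US$130 billion. Domestic innovative drugs accounted for as much as 84.29% of these approvals, but true first-in-class drugs (breakthrough therapies designed around entirely new targets) remain scarce, at only 5.71%. This constrains the industry's international competitiveness and limits patients' treatment options for major diseases.

To address problems such as weak capacity for original innovation, low conversion of basic research into products, and severe homogeneity across the industry, the CCTV.com Two Sessions special program 《我俩会会》 invited Hao Haiping, CPPCC National Committee member and president of China Pharmaceutical University, to give an in-depth analysis.

Hao Haiping

Member of the CPPCC National Committee

President of China Pharmaceutical University

Vice Chair of the Jiangsu Provincial Committee of the China Democratic League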

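CCTV.com: This year you brought a proposal on promoting China's original drug industry, proposing an artificial intelligence pharmaceutical sciences national laboratory and an original drug R&D ecosystem zone. Could you first briefly explain why you pay particular attention to this area, and what the proposal set out to do?

Hao Haiping: In recent years China has made marked progress in innovative drug R&D, and its capabilities have risen quickly. For example, new drugs developed by domestic companies such as Hengrui and Innovent have attracted multinational companies, which have licensed them or partnered to expand into overseas markets. This shows that China is close to the world-leading level and can compete with traditional biomedical powers such as the United States.

On the other hand, truly original new drugs remain scarce. This is not only China's problem; the whole world faces similar challenges, for example a lack of next-generation drugs with breakthrough efficacy for tumors, cardiovascular and cerebrovascular diseases, and Parkinson's disease. In response to prominent problems in China's biomedical industry, such as innovation-chain, industry-chain, and talent-chain resources being dispersed and poorly integrated, I propose building an AI pharmaceutical sciences national laboratory and an original drug R&D ecosystem zone. The aim is to use AI to raise R&D efficiency, promote interdisciplinary cooperation, and shorten the path from laboratory to clinic. These two systemic projects can lay an important foundation for accelerating breakthroughs in innovative therapies for major diseases and further strengthening China's voice in global pharmaceutical innovation.

CCTV.com: From what you have observed, what other constraints does China's innovative drug industry face, such as "dependence on external data" and "homogeneous innovation"?

Hao Haiping: The most prominent problem at present is that original discoveries by universities and scientists are hard to turn into actual drug projects, for lack of effective platforms and ecosystem support. This shows in three ways:

First, domestic data and research resources are scattered and heavily dependent on external data (and databases), which already threatens the sustainability of China's research and innovation. Second, R&D is overly concentrated on popular targets, so innovation is highly homogeneous. Third, translational support is weak: the path from basic research to clinical application is long, costly, and has little tolerance for failure.

Two things are therefore needed. First, build an intelligent pharmaceutical sciences national laboratory. AI can not only accelerate drug R&D, especially target discovery and drug design, but also raise the overall success rate of innovative research, lower R&D costs, and increase tolerance for failure. At present, the Alpha series of technologies from the US-owned company DeepMind already covers many stages of the life sciences. China needs to plan for a national laboratory, make use of the new system for mobilizing resources nationwide, integrate innovation resources, break down data silos, and promote deep integration of AI and biomedicine.

Second, improve the ecosystem, including professional incubation teams and early-stage investment funds. A comprehensive team is needed that includes research and clinical experts as well as market and finance experts, to jointly identify promising technologies and products and provide one-stop incubation services. For example, China Pharmaceutical University is working with local government to build a collaborative innovation center linking government, industry, academia, research, medicine, finance, and services, bringing together experts from many fields; there has already been practical exploration in areas such as heart disease and arrhythmia. The goal is full-process services covering drug registration, clinical research, and more, to accelerate new drug R&D.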

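CCTV.com: In your proposal you mention building an "AI pharmaceutical sciences national laboratory". For the general public, what concrete benefits can the combination of AI and drug R&D bring? For example, will it make new drug development faster and safer?

Hao Haiping: AI can be applied at every stage of drug R&D, including disease mechanism research, target discovery, drug design, formulation and process development, manufacturing quality control, and clinical research. The human living system is very complex, while experts' knowledge is often limited by discipline and therefore partial. AI can act as an integrative expert system that combines knowledge from different fields, breaks traditional R&D boundaries, and brings larger breakthroughs.

AI is already speeding up drug R&D. In molecule generation and reaction optimization, for example, AI can simplify a chemical synthesis that originally needed 20 steps to 6-7 steps. It also improves safety, reducing R&D risk through simulation and prediction. In the earlier stage of target discovery, however, AI's role is not yet evident, because human understanding of many diseases is still incomplete; seen from another angle, this is exactly where AI's help is most needed, to mine useful information from a vast data space. In the future, AI is expected to use big data to help unravel the complexity of life and accelerate drug discovery, making new drugs safer and R&D more efficient.

CCTV.com: Your other proposal suggests building a "national original drug R&D ecosystem zone" with Nanjing at its core. Why Nanjing? Once built, how will the zone stimulate pharmaceutical innovation in other parts of the country?

Hao Haiping: Nanjing is an underrated city. It has many high-level universities, including Nanjing University, Southeast University, China Pharmaceutical University, and Nanjing Medical University, which are strong in life sciences, biomedicine, and AI, making Nanjing one of the country's leading concentrations of talent and a center of innovation.

In addition, Jiangsu leads the country in biomedical innovation: in 2025 nearly 40% of the innovative drugs approved in China came from Jiangsu, and Jiangsu accounted for nearly 50% of the national total value of innovative drug out-licensing. Local authorities, such as the Jiangsu Provincial Party Committee and the Nanjing Municipal Party Committee, also attach great importance to supporting the demonstration zone.

Nanjing can also use the opportunity of Yangtze River Delta integration to draw on resources from Shanghai, Hangzhou, and elsewhere. Once built, the zone will set a template, bringing together capital, technology, and talent to provide one-stop service from discovery to translation. Like Shenzhen during reform and opening up, the demonstration zone can pilot measures first and then drive pharmaceutical innovation nationwide, avoiding duplicated construction across regions and promoting resource sharing.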

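CCTV.com: If these proposals are implemented, what visible changes do you expect in China's originator drug industry over the next 5-10 years? What improvements will ordinary patients notice in medication choice and treatment outcomes?

Hao Haiping: I think four key markers may appear. First, Chinese companies will grow into internationally leading multinationals and rank near the top globally. Second, new drugs developed independently in China will achieve breakthroughs in major diseases, such as drugs for Alzheimer's disease and malignant tumors that significantly extend life, improve quality of life, and even achieve cures. Third, China will see a large number of innovative new drug R&D companies emerge, providing sustained momentum for pharmaceutical innovation. Fourth, within this ecosystem zone, scientists at universities and research institutes will be able to connect seamlessly and quickly turn scientific discoveries into new drugs.

For ordinary patients, these changes mean more convenient access to medicines; diseases such as cancer will no longer be so frightening, and quality of life will improve noticeably. At the same time, clinical use and medical insurance payment systems need to provide matching support, for example by speeding the entry of innovative drugs into clinical use and improving insurance access and payment. I believe that as AI and other technologies shorten R&D cycles and lower costs, more patients will benefit from innovative therapies.

Source | CCTV.com

Editor | Zhou Qian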

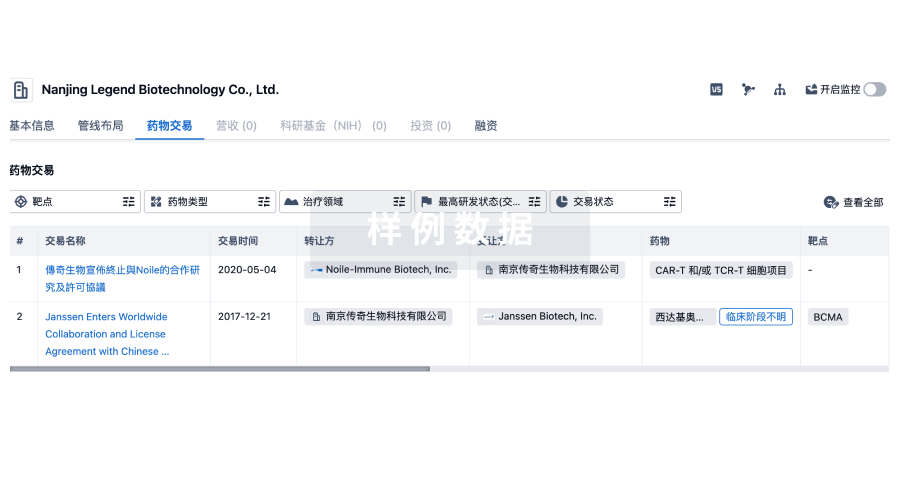

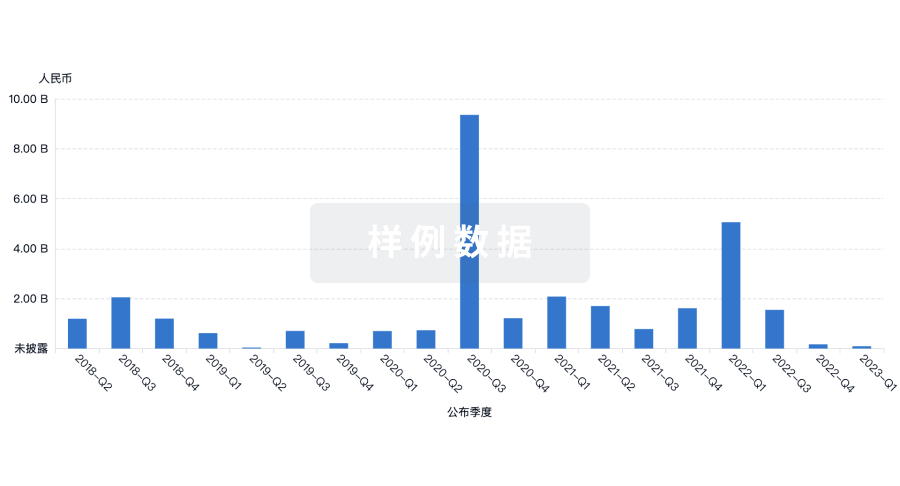

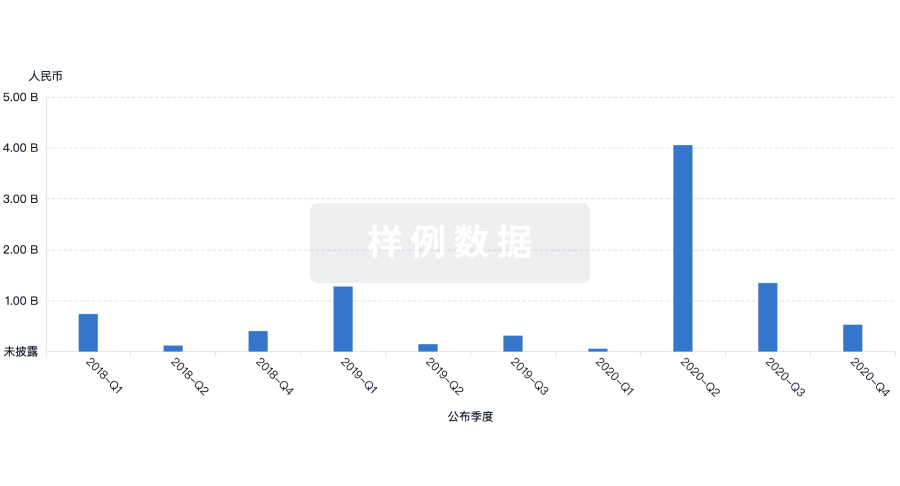

100 项与 东南大学 相关的药物交易

登录后查看更多信息

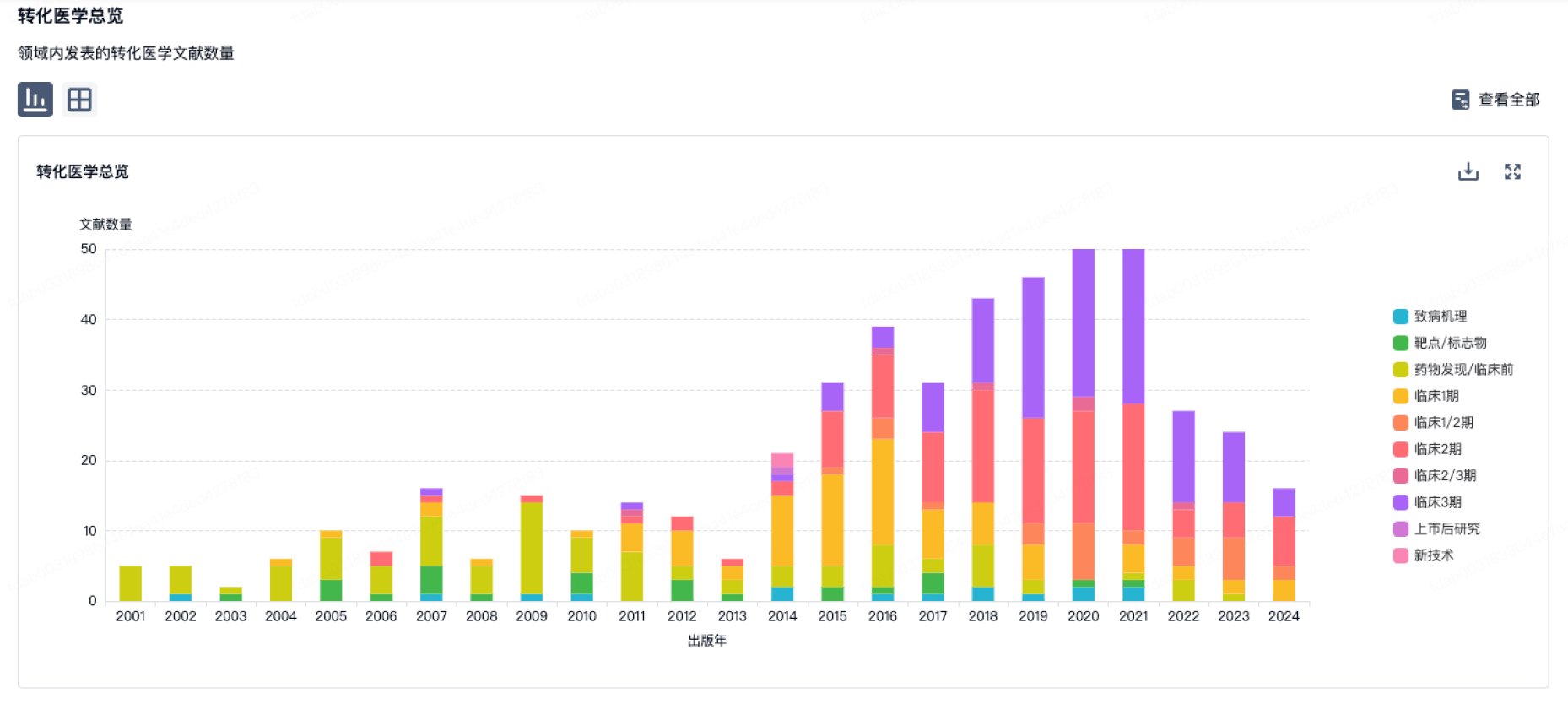

100 项与 东南大学 相关的转化医学

登录后查看更多信息

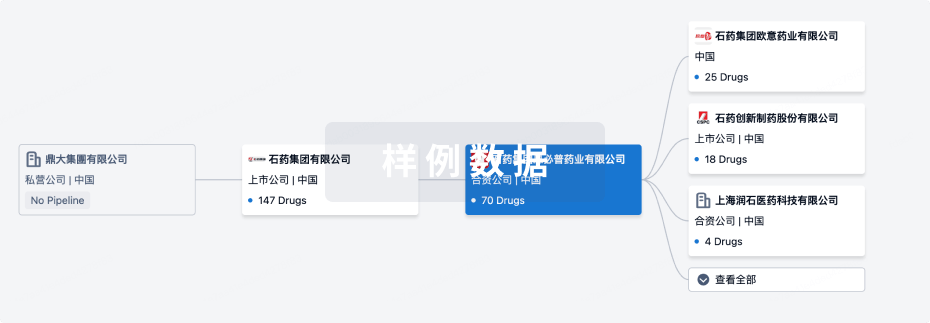


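Pipeline layout

Pipeline snapshot as of June 10, 2026

In the pipeline layout, drugs are counted for the current organization and its subsidiaries acting as the drug organization. Early Phase 1 is merged into Phase 1, Phase 1/2 into Phase 2, and Phase 2/3 into Phase 3.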
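The merge rule stated in the pipeline note can be sketched as a small lookup. This is an illustrative sketch only: the English stage labels and the function names are assumptions made for the example, not the platform's actual identifiers or API; only the three merge rules themselves come from the note.

```python
from collections import Counter

# The three merges described in the pipeline note; the label spellings are assumed.
MERGE_RULES = {
    "Early Phase 1": "Phase 1",
    "Phase 1/2": "Phase 2",
    "Phase 2/3": "Phase 3",
}


def normalize_stage(stage: str) -> str:
    """Map a raw stage label to its counting bucket; other labels pass through."""
    cleaned = stage.strip()
    return MERGE_RULES.get(cleaned, cleaned)


def count_by_stage(stages):
    """Count drugs per bucket after applying the merge rules."""
    return Counter(normalize_stage(s) for s in stages)


# Hypothetical input, for illustration only.
print(count_by_stage(["Preclinical", "Phase 1/2", "Early Phase 1", "Phase 1"]))
```

Under this rule a drug whose highest status is listed as Phase 1/2 would be counted in the Phase 2 bucket of the snapshot.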

Pipeline chart, counts by stage as extracted: Drug discovery 15; Preclinical 21; Phase 2 1; Other 6

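Current projects

| Drug (target) | Indication | Global highest development status |
|---|---|---|
| Human umbilical cord mesenchymal stem cells (茵冠生物) | Acute respiratory distress syndrome | Phase 1/2 |
| VEGFA mRNA-LNP (Southeast University) (VEGF) | Wounds and injuries | Preclinical |
| XSJ-10 (HDACs) | Tumors | Preclinical |
| AAV-SchABE8e-sgRNA3 | Hearing loss | Preclinical |
| TA516-50-8 (CYP7B1) | Acute kidney injury | Preclinical |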

登录后查看更多信息

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

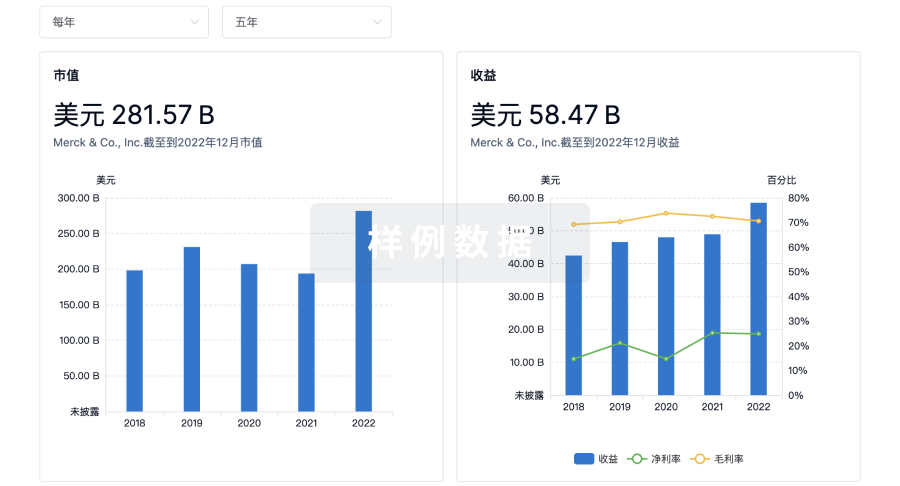

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或

生物医药百科问答

全新生物医药AI Agent 覆盖科研全链路,让突破性发现快人一步

立即开始免费试用!

智慧芽新药情报库是智慧芽专为生命科学人士构建的基于AI的创新药情报平台,助您全方位提升您的研发与决策效率。

立即开始数据试用!

智慧芽新药库数据也通过智慧芽数据服务平台,以API或者数据包形式对外开放,助您更加充分利用智慧芽新药情报信息。

生物序列数据库

生物药研发创新

免费使用

化学结构数据库

小分子化药研发创新

免费使用